The global memory crunch is no longer a background issue for Big Tech. It’s quickly becoming one of the biggest line items in hyperscaler budgets, reshaping how massive data center and AI infrastructure projects are planned and funded. Yet in the middle of skyrocketing DRAM prices and tight supply, NVIDIA appears to be sitting in an unusually comfortable position.

Over the past few quarters, DRAM contract prices have climbed sharply, and shortages have spilled into multiple markets, from AI servers to consumer devices. For hyperscalers running enormous cloud and AI platforms, the challenge is simple: there aren’t many alternatives. If they want to keep building, they often have to buy memory at whatever the market will bear, whether through expensive spot purchases or long-term contracts that are no longer cheap.

Supply chain reporting and analysis point to a dramatic shift in how much memory now influences total hyperscaler capital expenditures. Memory reportedly made up roughly 8% of overall hyperscaler capex during CY2023 and CY2024, but estimates suggest it could surge to around 30% by CY2026, with the potential to climb even higher in CY2027. If those projections hold, memory’s share of spending would rise nearly fourfold in just a few years, effectively turning DRAM and other memory components into a dominant cost driver for next-generation infrastructure.
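To make the arithmetic behind the "near fourfold" figure explicit, here is a minimal Python sketch. It uses only the approximate 8% and 30% shares quoted above; the function names are illustrative, and the 1.5x capex growth factor in the second call is a purely hypothetical input, not a figure from the reporting.

```python
def share_multiple(old_share: float, new_share: float) -> float:
    """Return how many times larger new_share is than old_share."""
    if old_share <= 0:
        raise ValueError("old_share must be positive")
    return new_share / old_share


def memory_spend_multiple(old_share: float, new_share: float,
                          capex_growth: float) -> float:
    """Multiple in absolute memory spend if total capex also scales.

    capex_growth is the factor by which total capex changes over the
    same period (1.0 means flat). It is an assumed input.
    """
    return share_multiple(old_share, new_share) * capex_growth


# Shares quoted in the article: roughly 8% (CY2023/24) and 30% (CY2026).
print(f"Share multiple: {share_multiple(0.08, 0.30):.2f}x")

# Hypothetical scenario: total capex grows 1.5x over the same period.
print(f"Spend multiple: {memory_spend_multiple(0.08, 0.30, 1.5):.2f}x")
```

The first number is the change in memory's share of the budget (about 3.75x). Because total capex is also moving, the change in absolute memory dollars would be larger or smaller depending on how overall spending evolves, which is what the second function illustrates.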

What’s fueling this increase isn’t just price inflation. Modern hyperscaler architectures are becoming more memory-hungry by design. The rise of rack-scale systems, memory pooling, and configurations connected through CXL switching is pushing demand upward. At the same time, widespread adoption of technologies like DDR5 and LPDDR5 in data center environments has intensified competition for general-purpose DRAM capacity. Memory is also central to hyperscalers’ custom silicon strategies and their ongoing efforts to optimize performance per watt and performance per dollar at massive scale.

Despite the pressure building across the industry, spending hasn’t shown clear signs of slowing. That suggests hyperscalers consider memory costs painful but unavoidable, especially as AI services and model training workloads continue expanding.

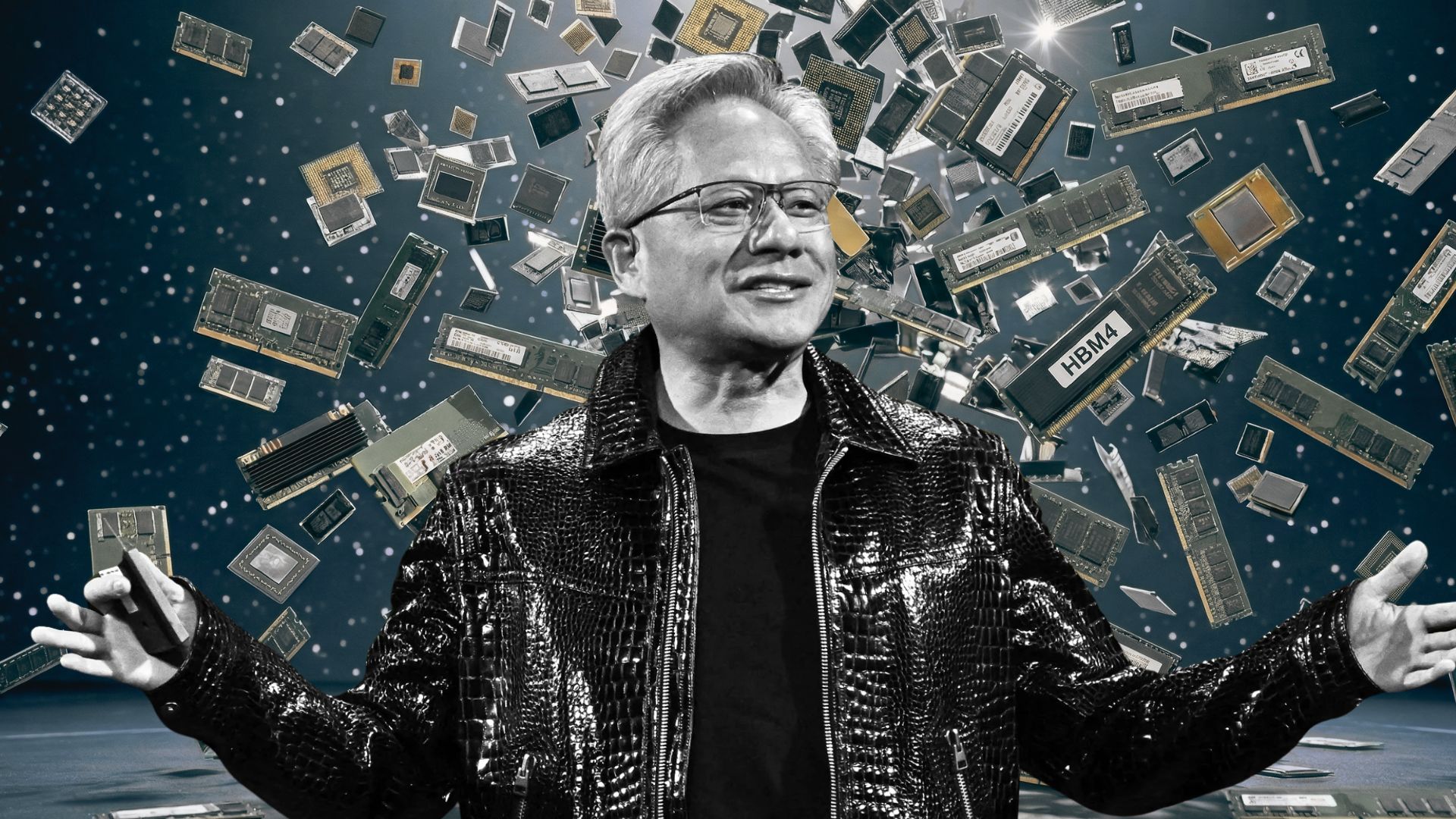

One of the most notable takeaways from recent supply chain discussion is that NVIDIA is believed to have secured an especially favored position with DRAM suppliers. The company is described as having a “Very Very Preferred” customer status, which can translate into priority access to capacity and more favorable pricing terms compared to other buyers. In a market defined by constrained supply, that kind of leverage can be decisive.

This is also consistent with the view NVIDIA leadership expressed earlier: that the company anticipated the surge in demand ahead of many others and moved quickly to lock in supply through extensive agreements. While many organizations are now scrambling to secure enough memory at reasonable terms, NVIDIA’s proactive strategy appears to have insulated it from the worst of the shortages, at least for now.

The bigger message is that NVIDIA’s advantage in today’s AI buildout extends beyond GPUs. Strong relationships across the supply chain can matter just as much as headline-grabbing hardware. From memory access to advanced packaging and broader semiconductor capacity dynamics, NVIDIA’s position highlights an uncomfortable reality for competitors: catching up in AI infrastructure isn’t only about building a faster chip. It’s also about securing the parts, pricing, and production priorities needed to deliver systems at scale while others fight over limited supply.