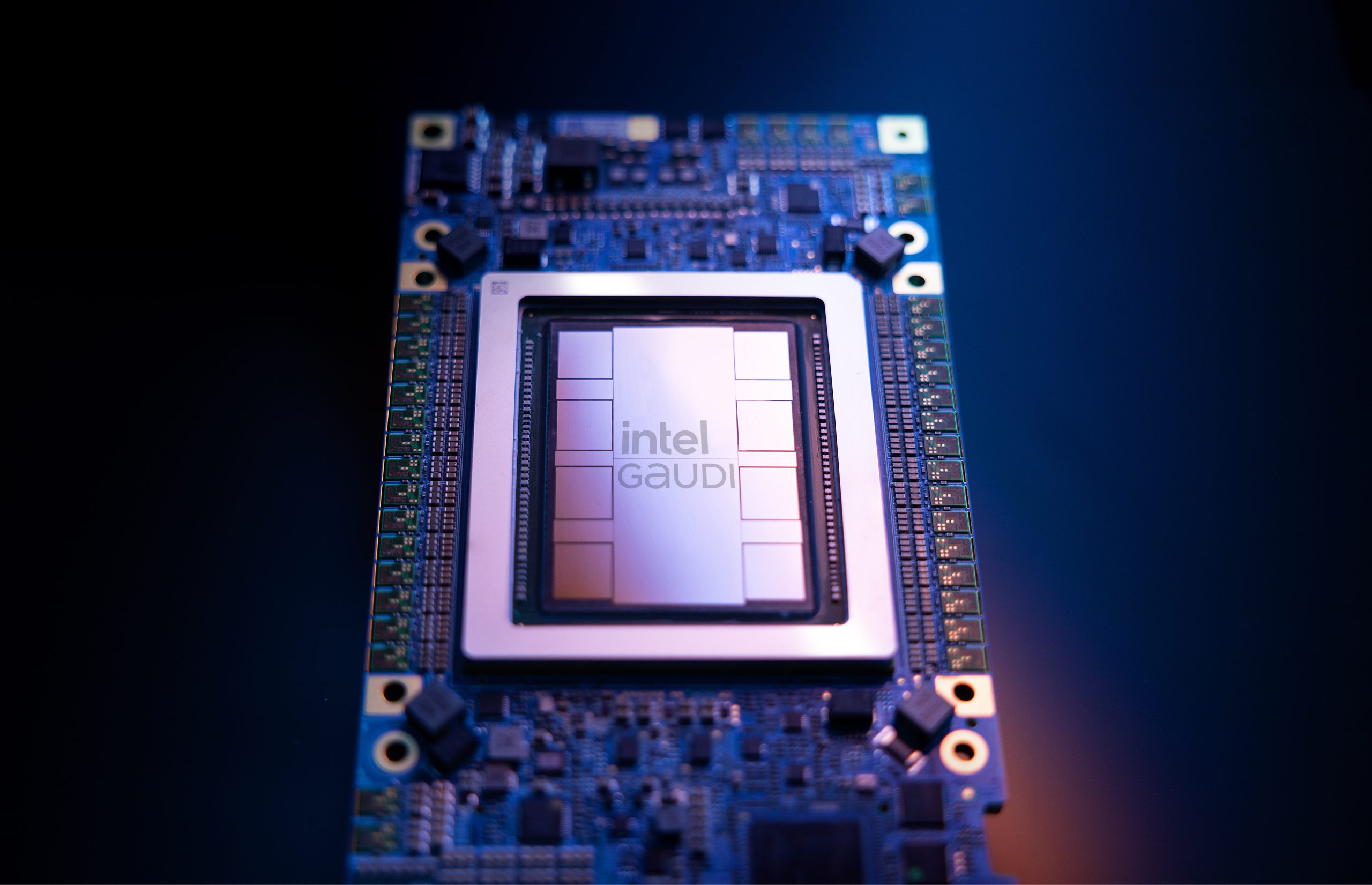

Intel has stepped up its push into Artificial Intelligence (AI) acceleration with the new Gaudi 3 AI Accelerator. Built on a 5nm process node, the chip targets NVIDIA’s H100 GPUs, and Intel claims it delivers 50% faster AI performance while being 40% more power-efficient on average.

Intel’s announcement positions the Gaudi 3 as a formidable competitor in an AI segment long dominated by NVIDIA. Intel’s Gaudi AI accelerators have consistently offered a streamlined alternative, and previous models such as the Gaudi 2 struck a strong balance between cost and performance. Until now, however, the H100 has held the reputation of overall leader in AI performance.

On April 9, 2024, at the Intel Vision event in Phoenix, Arizona, Intel unveiled the Gaudi 3 AI Accelerator. It builds on its predecessor, the Gaudi 2, with higher performance and scalability, and is optimized for generative AI applications.

The Gaudi 3 features a 5th Generation Tensor Core architecture with 64 tensor cores distributed over two compute dies. The dies share a 96 MB cache pool, complemented by eight HBM sites equipped with 8-Hi stacks of 16 Gb HBM2e DRAM, providing up to 128 GB of memory capacity and 3.7 TB/s of bandwidth. The chip is manufactured on TSMC’s 5nm process and includes 24 200GbE interconnect links for networking.
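The memory figures above are consistent with each other, which a short calculation can confirm. The sketch below uses only the numbers quoted in the paragraph; the function name is illustrative, and the per-stack bandwidth assumes the 3.7 TB/s total is split evenly across the eight stacks, which the announcement does not state.

```python
# Figures quoted in the paragraph above.
HBM_SITES = 8        # HBM stacks on the package
DIES_PER_STACK = 8   # "8-Hi" means eight DRAM dies per stack
GBIT_PER_DIE = 16    # each die holds 16 Gb (gigabits)
TOTAL_BW_TBPS = 3.7  # total memory bandwidth in TB/s


def hbm_capacity_gbytes(sites, dies_per_stack, gbit_per_die):
    """Total HBM capacity in gigabytes (8 bits per byte)."""
    total_gbit = sites * dies_per_stack * gbit_per_die
    return total_gbit / 8


capacity_gb = hbm_capacity_gbytes(HBM_SITES, DIES_PER_STACK, GBIT_PER_DIE)

# Assumption: bandwidth is divided evenly across the stacks.
per_stack_bw = TOTAL_BW_TBPS / HBM_SITES

print(capacity_gb)             # 128.0
print(round(per_stack_bw, 4))  # 0.4625
```

Eight stacks of eight 16 Gb dies give 1,024 Gb, or 128 GB, matching the stated capacity.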

Intel will offer the Gaudi 3 in several form factors: the Mezzanine OAM (HL-325L), rated at up to 900W in its standard configuration, with liquid-cooled variants exceeding 900W; and a PCIe add-in card (AIC), a full-height, double-width design with passive cooling and a TDP of up to 600W.

To support this hardware, Intel also announced its HLB-325 baseboard and the HLFB-325L integrated subsystem, which can house up to eight Gaudi 3 accelerators in a 19-inch chassis with a combined TDP of 7.6 kW.

Architecturally, the Gaudi 3 is built for efficient large-scale AI computing. Each accelerator has a heterogeneous compute engine comprising 64 AI-custom, programmable Tensor Processor Cores (TPCs) and eight Matrix Multiplication Engines (MMEs). Each MME can execute 64,000 parallel operations, giving it high computational efficiency on the matrix operations that underpin deep learning.

To meet the memory demands of processing generative AI datasets, the Gaudi 3 provides 128GB of HBM2e memory, 3.7TB/s of memory bandwidth, and 96MB of onboard SRAM. This lets it handle large language and multimodal models with improved performance and data center cost-efficiency.

For system scaling, the Gaudi 3 integrates 24 200Gb Ethernet ports for flexible, open-standard networking, allowing deployments to scale from single-node configurations to large compute clusters.
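To put the port count in perspective, the aggregate line rate follows directly from the two numbers above. This is a rough sketch: it gives the raw, per-direction figure and ignores Ethernet framing and protocol overhead, so achievable throughput will be lower. The variable names are illustrative.

```python
# Figures quoted in the paragraph above.
PORTS = 24
GBIT_PER_PORT = 200  # 200Gb Ethernet, per direction

# Raw aggregate line rate in one direction, before protocol overhead.
aggregate_gbit = PORTS * GBIT_PER_PORT
aggregate_tbit = aggregate_gbit / 1000
aggregate_gbyte = aggregate_gbit / 8  # 8 bits per byte

print(aggregate_gbit)   # 4800
print(aggregate_tbit)   # 4.8
print(aggregate_gbyte)  # 600.0
```

That works out to 4.8 Tb/s, or 600 GB/s, of raw Ethernet bandwidth in each direction per accelerator.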

For developers, Intel is providing software that integrates with the PyTorch framework and is compatible with popular AI models from the Hugging Face community. The Gaudi 3 supports a variety of data types, including FP8 and BF16, and the new PCIe add-in card variant offers an efficient option for tasks like fine-tuning and inferencing.
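The choice between FP8 and BF16 matters for how large a model fits in the 128GB of HBM on a single accelerator. The sketch below is a back-of-the-envelope estimate, not an Intel sizing tool: it counts model weights only (no activations, KV cache, or optimizer state), treats 1 GB as 10^9 bytes, and uses a 70-billion-parameter model purely as an illustrative example. The function names are hypothetical.

```python
# Storage per parameter: BF16 is 16 bits, FP8 is 8 bits.
BYTES_PER_PARAM = {"bf16": 2, "fp8": 1}

# Assumption: 128GB of HBM, with 1 GB taken as 10**9 bytes.
HBM_BYTES = 128 * 10**9


def weight_bytes(num_params, dtype):
    """Bytes needed to store the weights alone in the given data type."""
    return num_params * BYTES_PER_PARAM[dtype]


def fits_on_one_card(num_params, dtype, hbm_bytes=HBM_BYTES):
    """True if the weights alone fit in one accelerator's HBM."""
    return weight_bytes(num_params, dtype) <= hbm_bytes


params = 70 * 10**9  # illustrative 70B-parameter model
for dtype in ("bf16", "fp8"):
    size_gb = weight_bytes(params, dtype) / 10**9
    print(dtype, size_gb, fits_on_one_card(params, dtype))
# bf16 140.0 False
# fp8 70.0 True
```

Under these assumptions, a 70B-parameter model needs 140 GB in BF16, more than one card holds, but only 70 GB in FP8. Real deployments need extra headroom beyond the weights.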

The Intel Gaudi 3 is set to become available to Original Equipment Manufacturers (OEMs) in the second quarter of 2024 and is expected to hit general availability by the third quarter of the same year. The PCIe add-in card is scheduled for release in the last quarter of 2024.

Notably, several industry players such as Dell Technologies, HPE, Lenovo, and Supermicro are poised to incorporate the Gaudi 3 into their offerings. This new accelerator is also set to power cost-effective cloud LLM infrastructures, promising a competitive edge in price-performance metrics for various organizations, including NAVER.

The Gaudi 3 marks a significant step for Intel in AI acceleration, aiming to make enterprise generative AI more accessible through higher performance, efficiency, and flexibility. It strengthens Intel’s presence in the segment and poses a fresh challenge to NVIDIA’s dominance, bringing more choice and potential innovation to the industry.