A team of MIT engineers has found a way to turn one of electronics’ biggest headaches, waste heat, into something useful. Instead of treating rising temperatures as a problem to be pushed out with fans and heatsinks or avoided through throttling, the researchers show that heat itself can carry information and perform real computation.

In work published in Physical Review Applied, the team introduced microscopic silicon devices that can execute key machine-learning mathematics using heat rather than electrical current. These tiny structures are about the size of a dust particle, yet they’re designed to guide thermal energy through intricate pathways to produce meaningful computational results.

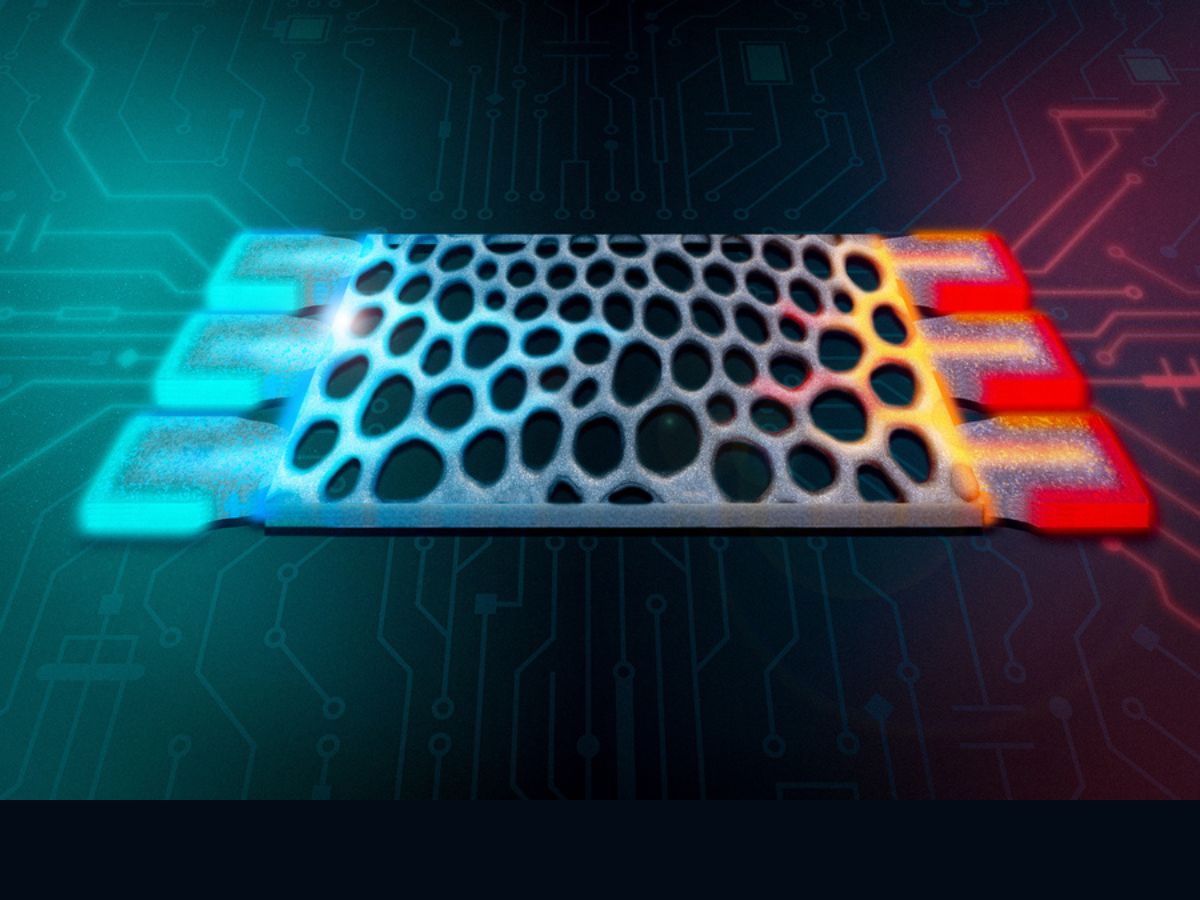

At the center of the breakthrough is a design approach known as inverse design. Rather than manually drawing a device layout and hoping it behaves as intended, the researchers specify the function they want—then software and optimization algorithms generate a complex geometry that can achieve it. The result is a porous, maze-like silicon structure that directs heat flow in highly controlled ways.

What makes this especially interesting for artificial intelligence is the type of calculation these devices can perform. The structures are built to carry out matrix-vector multiplication, the foundational operation behind machine-learning systems, including deep neural networks and large language models. In simulations, the thermal computing approach reached over 99% accuracy on the target computations, which suggests that analog computation with heat can be more precise than one might expect.

Using heat as a computational signal comes with a basic physics constraint: heat flows spontaneously only from hot to cold, so a single structure can encode only positive matrix coefficients. To work around this, the team split each target matrix into its positive and negative parts and ran them through separate structures, with the outputs subtracted afterward to recover the signed result. They also tuned the thickness of the silicon to better control thermal conduction, which gave them finer control over how heat moves through the device.
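
The positive/negative split can be illustrated numerically. The sketch below is not the researchers' code and does not model any heat transport. It only shows the arithmetic idea, under the assumption that each "structure" behaves like a matrix with nonnegative entries and that the two outputs are subtracted at the end. The function names and the example values are hypothetical.

```python
def split_signed(matrix):
    """Split a signed matrix W into nonnegative parts (P, N) with W = P - N."""
    pos = [[max(x, 0.0) for x in row] for row in matrix]
    neg = [[max(-x, 0.0) for x in row] for row in matrix]
    return pos, neg


def matvec(matrix, vec):
    """Plain matrix-vector product; stands in for one nonnegative structure."""
    return [sum(a * b for a, b in zip(row, vec)) for row in matrix]


def signed_matvec(matrix, vec):
    """Compute W @ x using only nonnegative matrices plus one subtraction."""
    pos, neg = split_signed(matrix)
    y_pos = matvec(pos, vec)  # output of the "positive" structure
    y_neg = matvec(neg, vec)  # output of the "negative" structure
    return [p - n for p, n in zip(y_pos, y_neg)]


# Hypothetical example values, chosen only for illustration.
W = [[2.0, -1.0], [-0.5, 3.0]]
x = [1.0, 2.0]
result = signed_matvec(W, x)
print(result)  # [0.0, 5.5], the same as multiplying W by x directly
```

Both intermediate matrices contain only nonnegative entries, which is what allows each one to be assigned to a structure where heat flows in a single direction. The sign information is restored only by the final subtraction.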

This heat-based computing concept isn’t positioned to replace conventional processors anytime soon: bandwidth and scalability remain major hurdles, especially for large, multi-layer deep-learning workloads. It may, however, offer strong near-term value in an area that matters for every electronic device: thermal management.

Because these microscopic structures can respond directly to temperature changes, they could potentially be integrated into electronics to detect overheating, identify hotspots, or sense temperature gradients without needing external power, traditional digital sensors, or extra computing overhead. In other words, future devices may be able to “feel” and respond to dangerous heat patterns using the heat itself.

Next, the researchers are aiming to push the idea further by creating programmable thermal structures capable of performing sequential operations, a step that could expand what thermal analog computing can do in practical systems.

If this research continues to scale, it could open the door to a new class of ultra-efficient computing elements that reuse energy already produced inside chips—turning wasted heat into a functional resource for smarter, more resilient electronics.