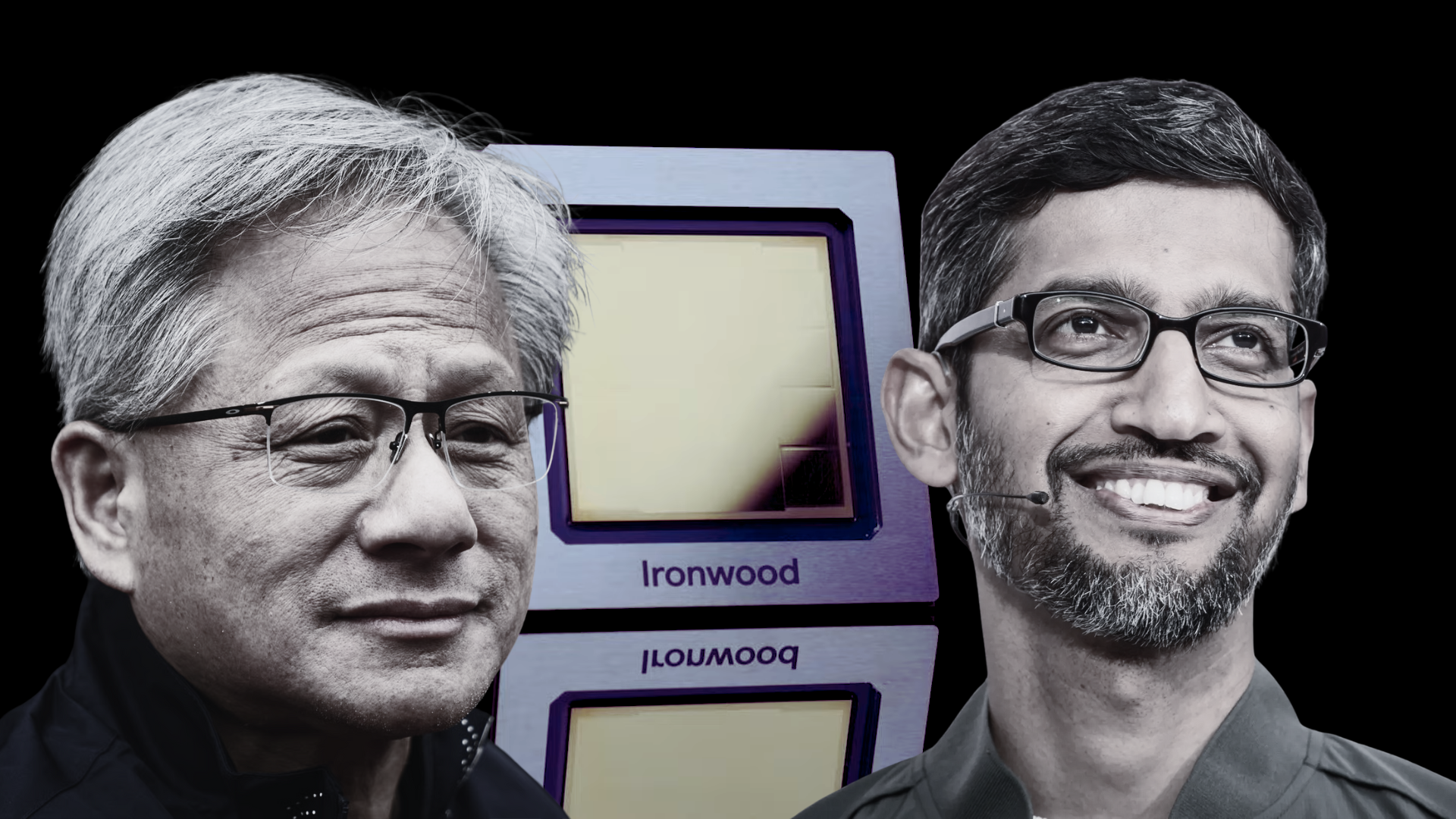

NVIDIA’s fiercest challenger in AI isn’t who you might expect. While AMD and Intel remain in the race, Google has quietly built a commanding position with its custom TPU lineup, and its newest generation, TPU v7 (codenamed Ironwood), signals a real shift in momentum. Even NVIDIA CEO Jensen Huang has acknowledged how competitive Google’s custom silicon has become.

Google has been iterating on AI accelerators since 2016, long before most of the industry took custom AI chips seriously. That early start is paying off. The Ironwood TPU arrives as an inference-first platform designed for the phase AI is entering now: serving massive numbers of real-time queries for already-trained models. This is where latency, throughput, energy efficiency, and cost per query matter more than raw training FLOPs.

What makes Ironwood stand out

– Up to 10x peak performance over TPU v5p

– Around 4x better per‑chip performance for both training and inference versus TPU v6e (Trillium)

– Approximately 16x uplift over TPU v4 in peak compute

– By Google’s account, the most energy‑efficient, highest‑performance custom silicon it has built to date

Key specifications that drive those gains

– About 4,614 TFLOPS of FP8 compute per chip

– 192 GB of HBM3e per chip with roughly 7.2–7.4 TB/s of bandwidth

– Up to 9,216 chips in a single SuperPod

– Around 42.5 exaFLOPS of FP8 compute per SuperPod

– Roughly 1.77 PB of total HBM across a maxed‑out pod

– A high‑density InterChip Interconnect (ICI) running about 1.2 Tb/s per link

– 3D torus topology for efficient, large‑scale, low‑latency communication
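The pod-level figures above follow directly from the per-chip specs multiplied by the chip count. The quick sanity check below assumes decimal units (1 exaFLOPS = 1,000,000 TFLOPS, 1 PB = 1,000,000 GB) and that every chip in a full 9,216-chip pod contributes its peak; the helper names are illustrative only and not part of any Google API.

```python
# Sanity-check Ironwood pod aggregates from the per-chip figures quoted above.
# Assumes decimal units and that all chips in a maxed-out pod count toward peak.

CHIPS_PER_POD = 9_216        # chips in a full SuperPod
FP8_TFLOPS_PER_CHIP = 4_614  # peak FP8 compute per chip
HBM_GB_PER_CHIP = 192        # HBM3e capacity per chip


def pod_fp8_exaflops(chips: int, tflops_per_chip: float) -> float:
    """Aggregate peak FP8 compute in exaFLOPS (1 EFLOPS = 1,000,000 TFLOPS)."""
    return chips * tflops_per_chip / 1_000_000


def pod_hbm_petabytes(chips: int, gb_per_chip: float) -> float:
    """Aggregate HBM capacity in petabytes (1 PB = 1,000,000 GB)."""
    return chips * gb_per_chip / 1_000_000


if __name__ == "__main__":
    compute = pod_fp8_exaflops(CHIPS_PER_POD, FP8_TFLOPS_PER_CHIP)
    memory = pod_hbm_petabytes(CHIPS_PER_POD, HBM_GB_PER_CHIP)
    print(f"{compute:.1f} exaFLOPS FP8")  # 42.5 exaFLOPS FP8
    print(f"{memory:.2f} PB HBM")         # 1.77 PB HBM
```

Both headline numbers fall out of simple multiplication. Had the memory been counted in binary units (GiB to PiB), the total would come out near 1.69 instead, so the quoted 1.77 PB appears to use decimal units.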

Why this matters in the age of inference

Enterprises now run far more inference queries than new model training jobs. That shifts the performance target from “more FLOPs” to “more answers, faster, cheaper.” Ironwood’s design choices acknowledge that reality:

– Huge on‑package memory: 192 GB of HBM3e per chip helps keep large model contexts local, cuts chatter between chips, and reduces latency—a decisive advantage for serving big models at scale.

– High interconnect density: Google’s ICI and 3D torus layout emphasize scalability and predictable performance across thousands of chips, an area where Google positions itself as more flexible than alternatives built around traditional GPU interconnects.

– Power efficiency: Hyperscale inference runs 24/7. Google claims roughly 2x better performance per watt than Trillium, its previous generation, which feeds directly into lower cost per query and more sustainable deployments.
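To make the 3D torus idea concrete: every chip links to two neighbors along each of the X, Y, and Z axes, and the links wrap around at the edges, so no chip sits on a boundary and worst-case hop counts stay low. The sketch below uses a hypothetical 4×4×4 block of 64 chips purely for illustration; it is not a description of how Google actually wires or partitions an Ironwood pod, and the function names are made up for this example.

```python
from typing import List, Tuple

Coord = Tuple[int, int, int]


def torus_neighbors(coord: Coord, dims: Coord) -> List[Coord]:
    """Return the six directly linked neighbors of `coord` in a 3D torus.

    Each axis wraps around, so a chip on an edge links to the chip on the
    opposite face. Assumes every dimension is at least 3; with a size of 2,
    the -1 and +1 neighbors on that axis would be the same chip.
    """
    neighbors: List[Coord] = []
    for axis in range(3):
        for step in (-1, 1):
            n = list(coord)
            n[axis] = (n[axis] + step) % dims[axis]  # wraparound link
            neighbors.append((n[0], n[1], n[2]))
    return neighbors


def torus_hops(a: Coord, b: Coord, dims: Coord) -> int:
    """Minimum number of link hops between two chips, using wraparound links."""
    hops = 0
    for ai, bi, size in zip(a, b, dims):
        delta = abs(ai - bi)
        hops += min(delta, size - delta)  # go the short way around each ring
    return hops


if __name__ == "__main__":
    dims = (4, 4, 4)  # hypothetical 64-chip block, illustrative only
    print(torus_neighbors((0, 0, 0), dims))
    print(torus_hops((0, 0, 0), (3, 3, 3), dims))  # 3: one wraparound hop per axis
```

With wraparound, opposite corners of this block are only 3 hops apart, versus 9 in a plain 3D mesh without wraparound links, and no pair of chips is more than 6 hops apart. That bounded, uniform distance is what makes communication latency predictable as the chip count grows.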

Strategic implications for the AI landscape

Ironwood isn’t just about speed; it’s about economics and control. By focusing on inference and offering the hardware through its cloud, Google can tightly integrate chips, software, and services—an ecosystem play that could pull customers deeper into its platform. That’s a direct challenge to the longstanding AI leadership of NVIDIA, which built its dominance in the training era.

To be clear, the competition isn’t one‑sided. NVIDIA is adapting too, with inference‑focused hardware such as Rubin CPX, a GPU designed for long‑context inference that ships in rack‑scale systems aimed at efficiency and simpler deployment. Still, Google’s trajectory with TPUs, now in their seventh generation, positions it as the most credible rival in AI compute right now, with others playing catch‑up.

The bottom line

TPU v7 Ironwood marks a turning point: it aligns squarely with the industry’s pivot from training to inference. With massive memory per chip, a scale‑friendly interconnect, strong FP8 performance, and a focus on power efficiency, Google is attacking the metrics that matter for serving models at hyperscale. Even NVIDIA’s leadership recognizes how formidable custom AI accelerators have become. As Ironwood rolls out across workloads in the coming weeks, the “Google versus NVIDIA” rivalry is set to define the next phase of AI infrastructure.