Foxconn, best known as one of NVIDIA’s biggest supply chain partners for AI hardware, is now reportedly expanding its role in the AI server world by taking on new orders tied to Google’s TPU-based AI clusters. If the report is accurate, it signals a meaningful broadening of that role: Foxconn won’t just be associated with GPU-powered AI infrastructure, but will also help scale Google’s custom silicon platform as demand for alternative AI accelerators grows.

Interest in ASIC-based AI hardware has been rising quickly, especially as Google’s latest TPU generation pushes into the spotlight. What’s new is that TPUs are increasingly viewed as more than an internal Google-only solution. They’re now being discussed as a viable option for external customers as well, with rumors suggesting that major companies are evaluating them for broader deployment. That growing momentum helps explain why manufacturing capacity and supply chain partnerships around TPU infrastructure are becoming more important.

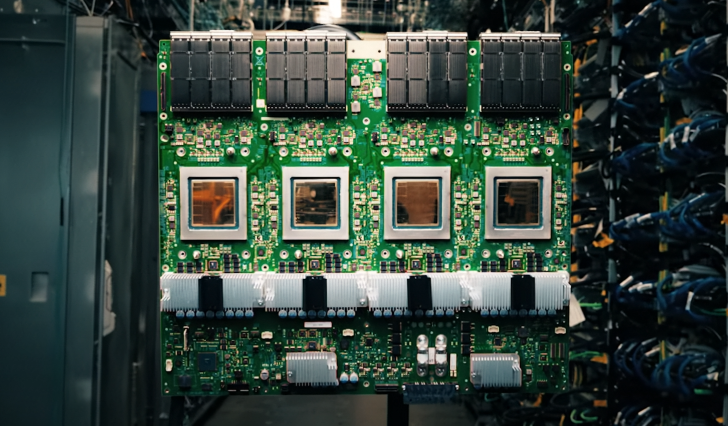

According to a report from Taiwan’s business press, Foxconn has received orders related to Google’s TPU compute trays and may also take part in work connected to Google’s “Intrinsic” robotics initiative. The same report describes how Google’s TPU-based AI servers are organized into two primary rack types: one rack focused on the TPU hardware itself, and another rack dedicated to computing trays. The proposed shipping structure is said to be straightforward: each TPU rack shipment is paired with a corresponding rack of computing trays, creating a 1:1 supply ratio. In this setup, the number of compute-tray racks Foxconn produces would track the number of TPU racks ordered one for one.

Google’s newest, seventh-generation TPU platform isn’t only about a faster chip. It’s built around a scalable, multi-rack system the company calls a “Superpod,” designed to deliver large amounts of compute by tightly integrating thousands of chips. The configuration is described as reaching 9,216 chips per pod, with aggregate FP8 performance of up to 42.5 exaFLOPS for relevant workloads. Google also relies on its Inter-Chip Interconnect (ICI) and a 3D torus network layout to enable dense, high-bandwidth connections across massive TPU deployments, an architectural choice aimed at keeping large-scale AI workloads moving efficiently across the cluster.
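
To put those figures in perspective, here is a rough Python sketch that derives the implied per-chip FP8 throughput from the two quoted numbers and shows how neighbors are found in a 3D torus with wraparound links. The torus dimensions used here (16 × 24 × 24) are purely an illustrative assumption chosen because they multiply to 9,216; the report does not state the actual layout, and the function names are hypothetical.

```python
from typing import List, Tuple

# Figures quoted in the article for the seventh-generation TPU pod.
CHIPS_PER_POD = 9_216
POD_FP8_EXAFLOPS = 42.5

# ASSUMPTION: illustrative torus shape only. It multiplies to 9,216,
# but the real pod topology is not given in the source.
ASSUMED_DIMS: Tuple[int, int, int] = (16, 24, 24)


def per_chip_petaflops(pod_exaflops: float, chips: int) -> float:
    """Implied peak throughput per chip, in petaFLOPS (1 exa = 1,000 peta)."""
    return pod_exaflops * 1_000 / chips


def torus_neighbors(
    coord: Tuple[int, int, int], dims: Tuple[int, int, int]
) -> List[Tuple[int, int, int]]:
    """Return the six direct neighbors of a node in a 3D torus.

    Each axis wraps around, so a node on the edge of the grid is still
    linked to the node on the opposite edge.
    """
    neighbors = []
    for axis in range(3):
        for step in (-1, 1):
            nxt = list(coord)
            nxt[axis] = (nxt[axis] + step) % dims[axis]
            neighbors.append((nxt[0], nxt[1], nxt[2]))
    return neighbors


print(f"Implied per-chip FP8 peak: "
      f"{per_chip_petaflops(POD_FP8_EXAFLOPS, CHIPS_PER_POD):.2f} petaFLOPS")
print("Neighbors of corner node (0, 0, 0):",
      torus_neighbors((0, 0, 0), ASSUMED_DIMS))
```

Dividing the quoted pod total by the chip count works out to roughly 4.6 petaFLOPS of peak FP8 per chip. The neighbor function illustrates why a torus suits large clusters: every chip has the same number of direct links regardless of where it sits, so there are no edge nodes with fewer connections.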

The timing also makes sense. As AI shifts from training-heavy cycles to more inference-dominant demand, companies are rethinking their infrastructure to maximize inference throughput while keeping total cost of ownership under control. That’s one reason Google’s TPUs are increasingly being positioned as a strong candidate for AI application and inference phases, especially for organizations looking beyond the typical GPU route.

For the broader market, this development adds fuel to an ongoing question: will custom silicon from big tech companies meaningfully reduce reliance on NVIDIA over time? Regardless of how that debate plays out, one thing is clear from the supply chain angle: interest in TPU-based solutions is rising, and manufacturers like Foxconn are preparing to serve both sides of the AI infrastructure boom.