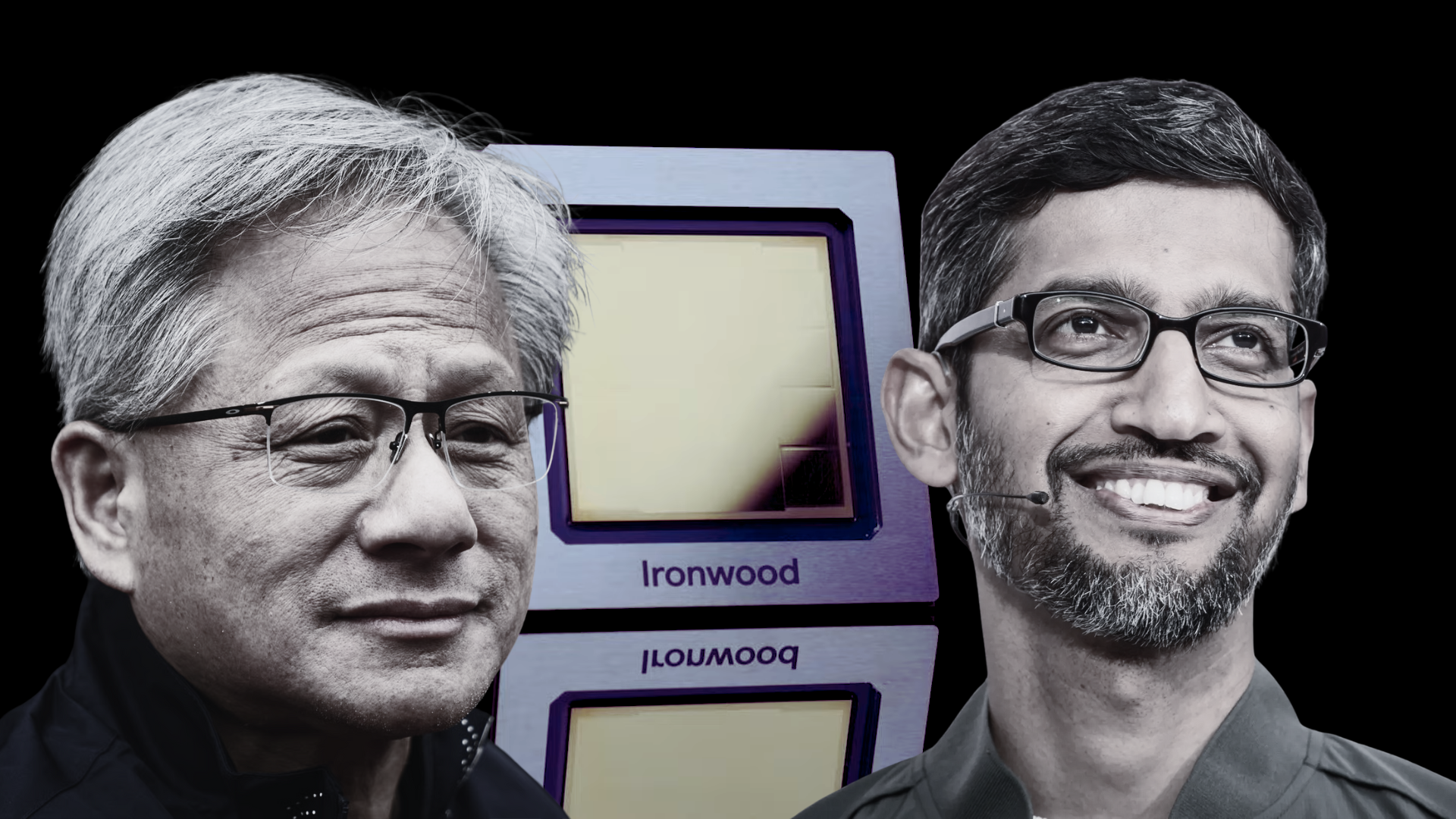

NVIDIA is pushing back against the growing buzz around Google’s TPU accelerators, especially as reports suggest the custom chips could be expanding beyond Google’s own infrastructure and into the broader AI market.

Interest in Google TPUs has surged lately following claims that major AI players such as Meta and Anthropic are exploring or adopting TPU hardware for certain workloads. That chatter has fueled a bigger narrative: that custom AI chips built as application-specific integrated circuits (ASICs) might be ready to challenge NVIDIA’s long-standing dominance in AI computing.

In response, NVIDIA said it welcomes Google’s progress in AI and emphasized that it continues to supply hardware to Google as well. At the same time, the company drew a clear line between what it believes its platform offers and what purpose-built ASICs can realistically deliver.

NVIDIA’s core argument is that ASICs are typically built to excel at a narrow set of tasks, often tied to specific AI frameworks or functions, while NVIDIA positions its GPUs and software stack as a more universal platform. According to the company, that broader approach matters because modern AI isn’t just one workload. It spans pre-training, post-training, and fine-tuning of large language models, plus deployment at scale across data centers and other environments.

The renewed attention on TPUs stems in part from a report claiming Meta could spend billions of dollars on Google’s TPU offerings for AI workloads. That same report projected that broader, external adoption of TPUs could amount to a meaningful slice of NVIDIA’s AI revenue over time. The underlying idea is that Google has shown how effective vertically integrated AI infrastructure can be, especially for inference, where efficiency and cost-per-query become crucial, and that this approach may deliver performance advantages in certain scenarios.

Google is also viewed as one of the strongest competitors in the ASIC race simply because it has invested in TPU development for nearly a decade. The company has had time to refine its chips, optimize them around its internal AI stack, and prove them in real-world production environments.

Still, NVIDIA is leaning on a familiar advantage: flexibility. Rather than targeting one framework or a specific inference path, NVIDIA is betting that companies want AI hardware that can handle a wide variety of models and workloads, scale across different computing environments, and remain “fungible” as needs change—whether that means training today, inference tomorrow, or adapting to whatever model architectures come next.

It’s also worth noting that Google remains a significant buyer of NVIDIA AI hardware, which suggests the battle isn’t a simple replacement story. For many large organizations, the future may look more like a mix of platforms—where ASICs handle certain optimized workloads while GPUs continue to power broader AI development and experimentation.

As AI shifts further toward real-world deployment, inference is becoming the major battleground. That’s where cost, throughput, latency, and efficiency will define winners—and where the competition between NVIDIA GPUs and custom ASIC accelerators like Google TPUs is likely to intensify.