NVIDIA’s Rubin CPX chip was expected to be a notable part of the company’s next-wave AI hardware story, but it ended up being a no-show at this year’s GTC event. That absence immediately sparked speculation that Rubin CPX had been shelved. A newer update now suggests it hasn’t been canceled outright—yet it’s not arriving anytime soon, either. Instead, Rubin CPX appears to be delayed and effectively pushed into a future generation aligned with NVIDIA’s Feynman platform.

So what happened to Rubin CPX, and why does it matter? NVIDIA has been working to strengthen its position in AI inference, especially as purpose-built ASIC solutions started gaining real momentum around the second half of last year. Rubin CPX was one of the company’s more specialized ideas: a rack-focused chip designed to speed up parts of inference work, particularly prefill workloads. It also stood out because it was positioned as one of the first rack-oriented solutions to use GDDR7 memory onboard—a notable choice for a data center inference product.

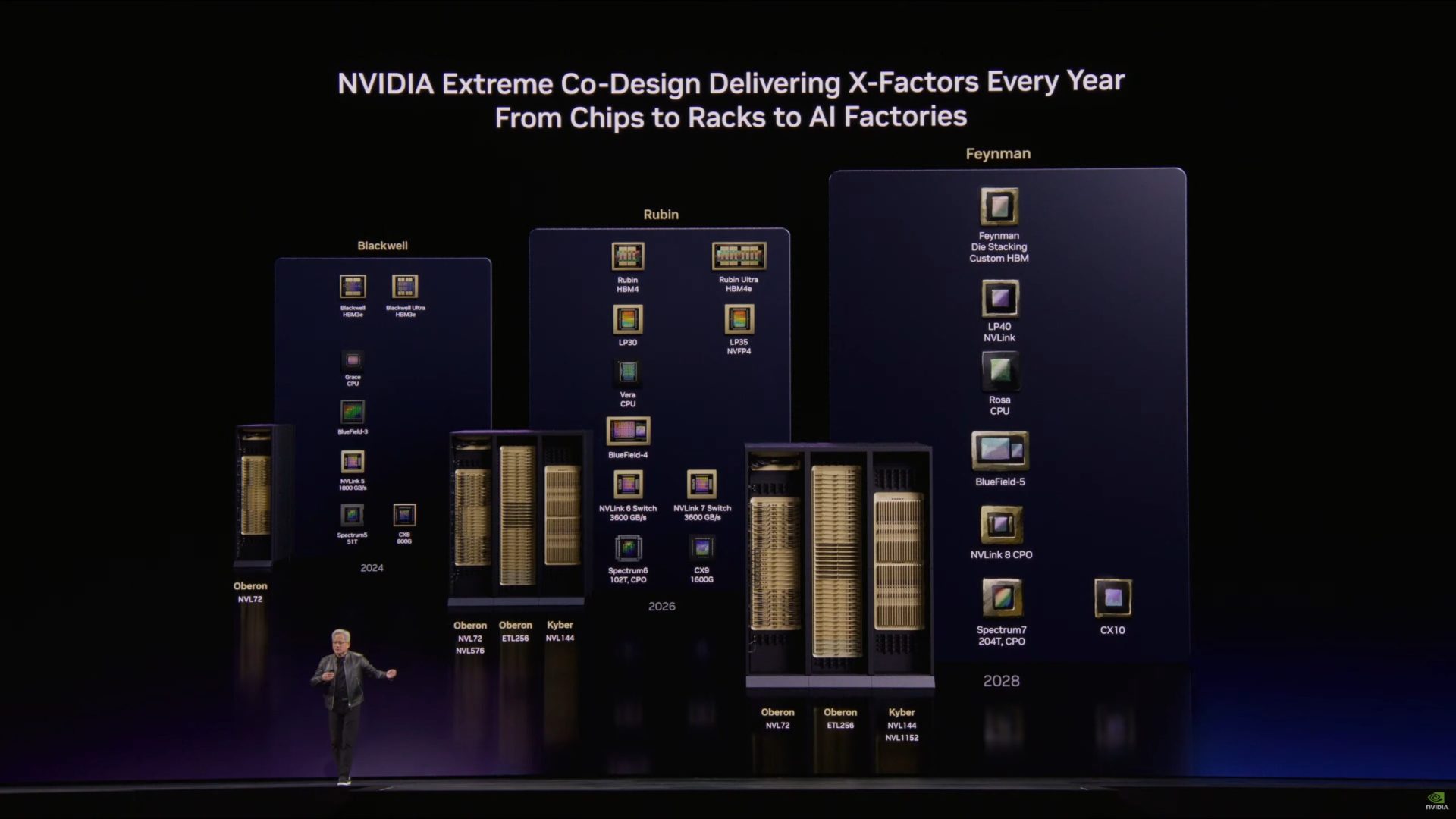

But when NVIDIA CEO Jensen Huang presented the Rubin family at GTC, the Rubin CPX chip wasn’t included. That omission lined up with the idea that the product was either delayed or being rethought. NVIDIA VP Ian Buck has now clarified that the concept is still alive, but the timing has shifted. According to Buck, a CPX-like solution is now expected to debut alongside Feynman, which is slated for several years from now.

The delay seems tied to how quickly inference priorities are changing. Instead of optimizing primarily for long-context handling, the industry has increasingly emphasized metrics like time to first token (TTFT), which measures how quickly a model can begin responding. As those demands evolve, NVIDIA appears to be rebalancing its approach toward the parts of the inference pipeline that matter most right now.

That’s where the Rubin LPX tray comes into the picture—along with Groq. NVIDIA has been leaning hard into its partnership story here, positioning Groq’s LPU-based hardware as a key element in covering the inference “decode” stage, where tokens are generated after the initial prompt processing. In other words, as the original CPX plan targeted prefill, the LPX approach is gaining relevance by focusing on decoding throughput and responsiveness.

NVIDIA’s messaging indicates it’s enthusiastic about the performance potential. LPUs use an SRAM-based approach, and the stated bandwidth numbers are massive: up to 150 TB/s at the individual level, scaling to around 640 TB/s of scale-up bandwidth across the rack. Those figures help explain why NVIDIA would prioritize the LPX tray strategy rather than push Rubin CPX to market in its earlier form.

There’s another important twist: reports also indicate NVIDIA had been revisiting the Rubin CPX design, potentially moving away from GDDR7 and toward HBM memory instead. If that shift happens, the eventual Feynman-era CPX may look significantly different from what people originally expected under the Rubin name.

For NVIDIA, all of this fits into a bigger goal: defending a leadership position in inference performance. Jensen has even referred to NVIDIA as the “inference king,” and the Groq-backed LPX direction is one way the company seems intent on keeping that claim credible as competition heats up.

And there’s a small side effect that could be welcomed outside the data center world. If Rubin CPX is delayed, that may free up GDDR7 supply that otherwise would have gone into an AI-focused rack chip—potentially easing pressure on availability for other products that use GDDR7, including gaming hardware.

In short, Rubin CPX hasn’t disappeared, but it’s no longer an imminent part of NVIDIA’s AI roadmap. The company is adapting to shifting inference workloads, leaning into decode-focused acceleration today, and possibly saving the reworked CPX concept for its Feynman generation.