As GTC 2026 approaches, the AI industry is bracing for what could be one of the biggest shifts in modern computing. Over the past few years, the race to build better AI infrastructure has accelerated quickly, pushing major chipmakers to rethink what “performance” really means. Since 2022, large-scale training has dominated, and NVIDIA’s Hopper and Blackwell platforms helped define that era. Now, heading into 2026, momentum is building around agentic AI workloads, and that change is expected to shape the most important announcements at GTC.

Expect one phrase to pop up repeatedly: agentic performance. The idea is simple but powerful—AI workloads are evolving beyond classic training and inference into systems that plan, act, and iterate. That evolution demands new approaches to compute, memory, and interconnects, and NVIDIA appears to be positioning its next wave of products around that reality.

A key development to watch is NVIDIA’s growing alignment with Groq and how it may move beyond a GPU-only strategy. Going into GTC 2026, expectations are rising that this collaboration will become more tangible, potentially showing up as real product configurations rather than just strategic talk. One potential direction is a hybrid design that pairs Groq’s LPU (Language Processing Unit) technology with NVIDIA’s Vera Rubin systems.

If that happens, it would signal a notable shift: instead of relying on GPUs alone, NVIDIA could begin offering disaggregated inference configurations in which different processors handle the stages of an AI request they are best suited to. Speculation suggests LPUs could appear in compute trays of several sizes, such as 64, 128, or 256 LPUs per tray, connected to Rubin GPUs using NVLink Fusion. The goal would be to optimize specific steps of inference, such as decode, by assigning them to the most suitable hardware.

This also fits with how NVIDIA leadership has previously compared Groq’s strategic value to that of earlier game-changing moves like the Mellanox acquisition, where networking and system-scale engineering became a major competitive advantage. With Rubin CPX already positioned as a solution for prefill-heavy workloads, a mixed GPU + LPU approach could give NVIDIA coverage across the two major stages of a typical inference request, prefill and decode, while opening the door to more workload-specific system configurations.
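To make the prefill and decode split concrete, here is a minimal Python sketch of how a disaggregated serving path might route the two stages to different hardware pools. It is purely illustrative: the pool names, the `Request` class, and the `prefill`, `decode`, and `serve` functions are hypothetical and are not taken from any NVIDIA or Groq software. The only thing it models is the general pattern, in which prefill processes the whole prompt in one pass and decode then produces tokens one step at a time.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Tuple

# Hypothetical pool labels (assumptions for illustration only).
PREFILL_POOL = "prefill-optimized"  # e.g. a context-focused part
DECODE_POOL = "decode-optimized"    # e.g. a low-latency token generator


@dataclass
class Request:
    prompt_tokens: List[str]
    max_new_tokens: int
    # Records which pool handled each step, in order.
    trace: List[Tuple[str, str]] = field(default_factory=list)


def prefill(req: Request) -> Dict[str, int]:
    """Process the full prompt in a single pass; return stand-in context state."""
    req.trace.append(("prefill", PREFILL_POOL))
    return {"context_len": len(req.prompt_tokens)}


def decode(req: Request, state: Dict[str, int]) -> List[str]:
    """Generate tokens sequentially; each step extends the context by one."""
    generated = []
    for i in range(req.max_new_tokens):
        req.trace.append(("decode", DECODE_POOL))
        generated.append(f"tok{i}")  # placeholder token, not a real model output
        state["context_len"] += 1
    return generated


def serve(req: Request) -> List[str]:
    """Route one request: prefill on one pool, then hand off state to decode."""
    state = prefill(req)
    return decode(req, state)
```

Calling `serve(Request(["a", "b", "c"], max_new_tokens=2))` runs one prefill step followed by two decode steps, and `trace` shows which pool handled each. In a real system the hand-off between the two stages would involve moving the context state between devices, which is where the interconnect matters.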

That platform mindset is at the heart of why GTC 2026 is being watched so closely. AI is no longer one-size-fits-all, and NVIDIA appears increasingly focused on offering a portfolio of targeted configurations rather than treating the GPU as the only answer to every problem.

While Vera Rubin is entering full production, attention is also turning to what’s next. NVIDIA is expected to share more about its next-generation architecture, Feynman, which was teased previously but may get a deeper technical spotlight at GTC 2026. One of the most intriguing angles is that Feynman is rumored to lean more heavily on process-node scaling, with claims pointing to TSMC’s A16 (often described as a 1.6nm-class technology). There’s also chatter that NVIDIA could be an exclusive or especially prominent early customer for that node, since other market segments may not adopt it widely at first.

Feynman is also expected to embrace advanced packaging and 3D integration. Reports point toward hybrid bonding approaches, possibly including technologies like SoIC, along with die stacking and dense chip-to-chip connections. In parallel, there’s speculation that Groq’s LPUs could play a deeper role in this future platform, potentially in forms that go beyond “side-by-side” integration. Depending on design feasibility, they could even be stacked on or tightly coupled with compute dies.

There have also been rumors about NVIDIA exploring Intel’s 14A process for parts of its future roadmap, although nothing is confirmed. Even without confirmation, the broader signal is clear: Feynman may represent a major change in both microarchitecture and manufacturing, and rack-scale systems are expected to evolve quickly alongside it.

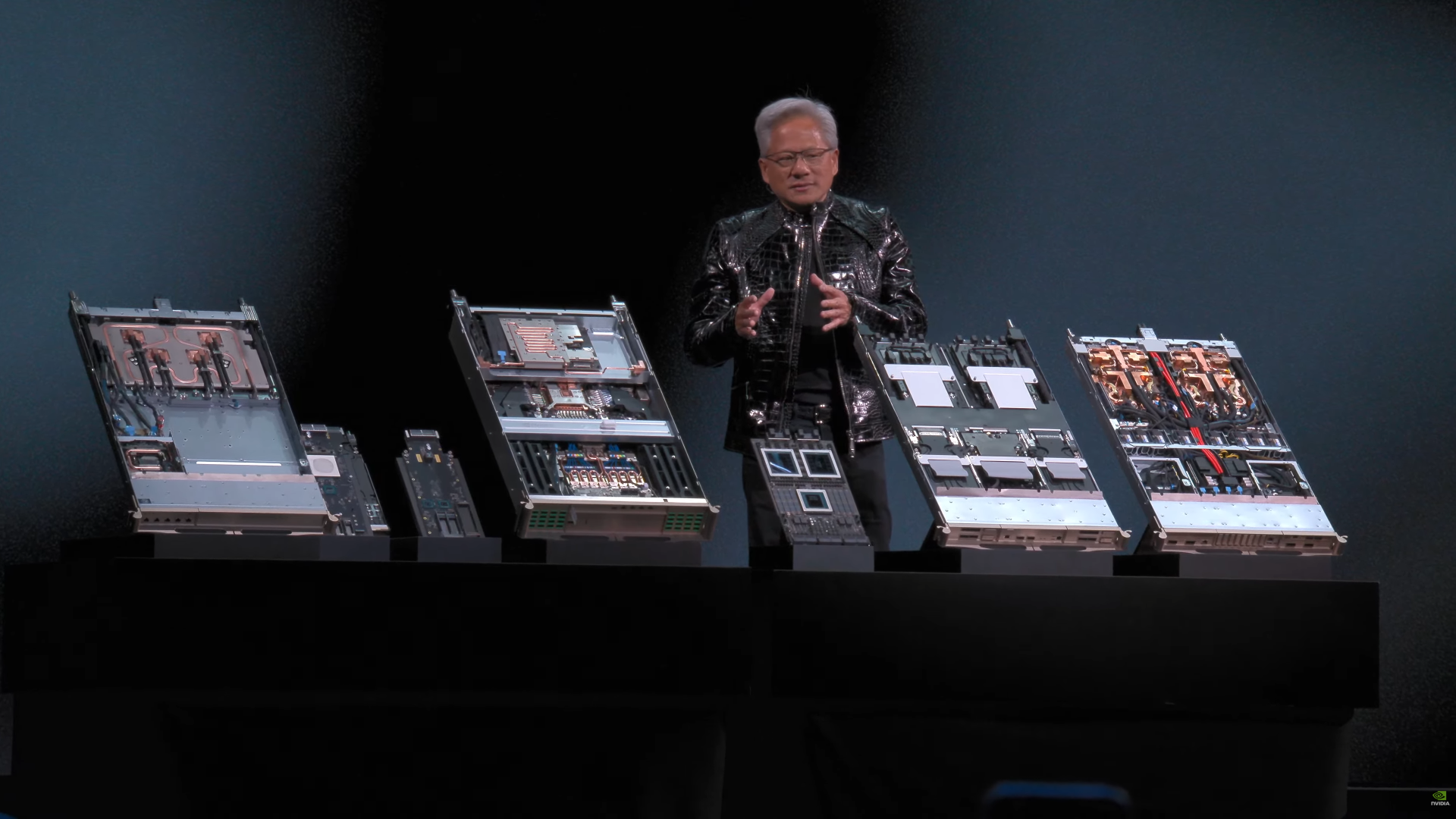

Even so, Vera Rubin is far from the end of the story. NVIDIA still has a wide Rubin lineup to expand, and rack-scale platforms remain central to its AI infrastructure strategy. The NVL72 rack, shown earlier as a 72-chip configuration, is widely viewed as a baseline. Scaling paths beyond that have been discussed, including NVL144 and the much larger NVL576, and customer demand is expected to pull toward these bigger, more ambitious racks.

Rubin CPX is another important piece of the lineup, designed as a context-focused, prefill-oriented rack-scale option, though real-world deployment details remain limited so far.

The most attention-grabbing system could be NVL576, especially as it is associated with a transition to a new “Kyber” generation of rack design. That shift reportedly includes vertically mounted compute trays, described as vertical blades, along with an 800 VDC facility-to-rack power delivery approach. NVL576 is expected to tie into Rubin Ultra GPUs, where changes to chiplet configuration and system topology may play a major role in how NVIDIA scales performance without simply multiplying the same building blocks.

Interconnect technology is also expected to become a defining factor at this scale. With 576 GPUs in a single rack-scale configuration, copper-based links become increasingly constrained by thermals and signal integrity. That’s why NVIDIA’s push around CPO (Co-Packaged Optics) switches is so important. Moving optics into the fabric is meant to relieve thermal bottlenecks while improving throughput, switching capacity, and latency, which are exactly the kinds of upgrades that matter when AI infrastructure is stretched to extreme densities.

Given the trajectory, it wouldn’t be shocking if NVIDIA teases even larger rack concepts beyond NVL576. But for now, the spotlight is likely to remain on Rubin and Rubin Ultra before Feynman takes center stage. There are also expectations of notable CPU-related announcements, including talk of collaboration involving Intel, which could further shape how NVIDIA builds complete AI platforms rather than only accelerators.

GTC 2026 begins March 16, with Jensen Huang’s keynote scheduled for 11:00 AM PT. If the current signals hold, this event won’t just be another product update. It could mark a turning point in how AI compute is packaged, scaled, and optimized for the next wave of agentic AI workloads.