“AI” might be the most overused word in tech today, but the real story behind artificial intelligence is far more compelling than the buzzword version floating around online. AI didn’t suddenly “arrive” when modern chatbots became popular, and it definitely wasn’t a single invention that popped into existence fully formed. It’s the result of decades of trial and error: bold breakthroughs, painful dead ends, long quiet periods, and surprising comebacks.

What makes AI’s evolution so interesting is that it hasn’t marched forward in a straight line. Instead, it has moved in waves, constantly swinging between two competing philosophies. One side believes intelligence can be built from explicit structure: rules, logic, and symbolic reasoning. The other side argues that intelligence emerges from learning statistical patterns in data. Even today, the industry is still balancing those two ideas, often blending them in practical systems. And along the way, one lesson has become impossible to ignore: real progress isn’t just about smarter algorithms—it’s also about how much data and computing power we can throw at the problem.

Before “Artificial Intelligence” Had a Name

Long before AI became an official academic field, researchers were already fascinated by the idea of mechanizing human thought. In 1950, Alan Turing helped push the conversation forward with his famous paper, “Computing Machinery and Intelligence.” Instead of debating the abstract question “Can machines think?”, Turing reframed it into something testable: if a machine can convincingly imitate a human in conversation, does it count as intelligent? That concept later became known as the Turing Test, and it gave early AI thinkers a concrete target.

By the mid-1950s, optimism was running high. Researchers began treating intelligence as an engineering problem, something that could be broken down into components like memory, decision-making, and search. This momentum led to the 1956 Dartmouth workshop, widely viewed as the moment AI was “born” as a formal discipline. The excitement at the time was intense; many genuinely believed human-level machine intelligence could be achieved within a generation. That didn’t happen, but the Dartmouth era set the direction: AI would be a serious, scientific attempt to simulate aspects of human intelligence with computers.

Classical (Symbolic) AI: Rules, Logic, and Search

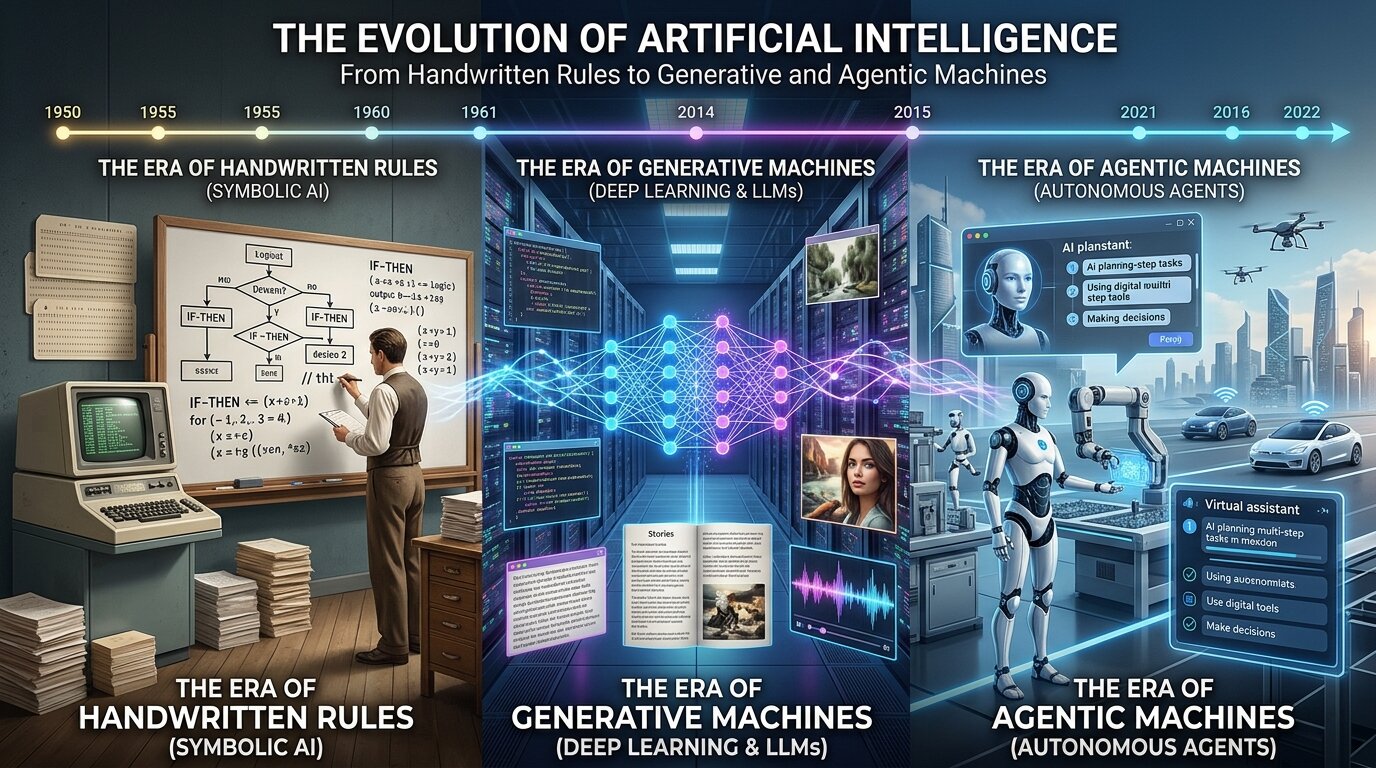

The first major era of AI is often called classical AI or symbolic AI. Its core belief was simple and appealing: if humans reason by manipulating facts and following logical steps, then machines should be able to do the same. In this world, “intelligence” looked like rule-following, structured problem-solving, planning, and searching through possibilities to reach a goal.

This approach produced ideas that still matter today. Search and pathfinding methods became foundational tools in computer science, showing up everywhere from routing problems to robotics and video game navigation. In controlled environments, symbolic systems could look impressive—solving logic puzzles, proving theorems, and performing well in structured games.
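To make the idea of "searching through possibilities to reach a goal" concrete, here is a minimal sketch of breadth-first search on a small grid. The grid layout, the `shortest_path` name, and the 0/1 cell encoding are illustrative assumptions, not something taken from a specific historical system.

```python
from collections import deque

def shortest_path(grid, start, goal):
    """Breadth-first search on a grid of 0 (free) and 1 (wall) cells.

    Returns the list of (row, col) cells from start to goal,
    or None if the goal cannot be reached.
    """
    rows, cols = len(grid), len(grid[0])
    queue = deque([start])
    came_from = {start: None}  # parent links; also acts as the visited set
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links backwards to rebuild the path.
            path = []
            while cell is not None:
                path.append(cell)
                cell = came_from[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in came_from:
                came_from[(nr, nc)] = cell
                queue.append((nr, nc))
    return None

# Hypothetical 3x3 map: the middle row is mostly wall.
grid = [
    [0, 0, 0],
    [1, 1, 0],
    [0, 0, 0],
]
path = shortest_path(grid, (0, 0), (2, 0))
```

Because breadth-first search explores cells in order of distance from the start, the first time it reaches the goal it has found a path with the fewest steps. That guarantee holds only when every move costs the same, which is one reason weighted variants exist for routing and game navigation.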

But symbolic AI carried a built-in weakness: it worked best when the world could be described perfectly with formal rules. Real life is the opposite—full of ambiguity, shifting contexts, edge cases, and messy exceptions. The moment symbolic systems stepped outside carefully defined environments, they often became brittle and unreliable. That gap between tidy logic and chaotic reality would become a major obstacle for AI for years.

Expert Systems and the First Commercial AI Boom

One of the most notable outcomes of symbolic AI was the expert system. Instead of trying to create general intelligence, expert systems focused on narrow professional domains. The idea was to capture specialist knowledge in large collections of “if-then” rules, allowing software to mimic decision-making in areas like medicine, diagnostics, and business operations.
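A rough sketch of how a collection of “if-then” rules can drive a decision is shown below. It uses simple forward chaining; the rule format, the `forward_chain` name, and the car-diagnostics facts are all hypothetical and far smaller than anything a real expert system contained.

```python
def forward_chain(facts, rules):
    """Apply if-then rules repeatedly until no new fact can be derived."""
    known = set(facts)
    changed = True
    while changed:
        changed = False
        for conditions, conclusion in rules:
            # A rule fires when all of its conditions are already known.
            if conclusion not in known and all(c in known for c in conditions):
                known.add(conclusion)
                changed = True
    return known

# Hypothetical toy rules: (conditions, conclusion).
RULES = [
    (("engine_wont_start", "lights_dim"), "battery_low"),
    (("battery_low", "battery_old"), "replace_battery"),
]

derived = forward_chain({"engine_wont_start", "lights_dim", "battery_old"}, RULES)
```

Even in this toy form, the maintenance burden is visible: every new situation needs another hand-written rule, and rules can depend on the conclusions of other rules.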

For a while, expert systems looked like AI’s first true commercial breakthrough. Companies invested heavily, and expectations soared.

Then reality hit. Building these systems required extracting huge amounts of knowledge from human experts and translating it into rules—an exhausting, expensive process that was difficult to scale. Worse, once the rules were written, keeping them updated as real-world knowledge changed became a constant battle. This problem became known as the knowledge acquisition bottleneck. When expert systems failed to match the hype, excitement cooled, funding dried up, and the industry entered one of the infamous “AI winters”—periods when the field’s promises seemed bigger than its results.

The Statistical Turn: Machine Learning Changes the Core Question

Over time, AI researchers began to shift their thinking. Instead of asking, “How do we encode intelligence into rules?”, they started asking, “What if the machine can learn patterns directly from data?”

That shift created the modern foundation of machine learning. Rather than hand-writing logic for every situation, machine learning treats intelligence as generalization: train a model on examples, optimize it to perform well, and expect it to handle new cases it hasn’t seen before.

This era produced practical techniques that became essential across the tech world—decision trees, support vector machines (SVMs), and ensemble methods among them. These systems weren’t marketed as synthetic minds, but they were extremely effective at real business problems such as detecting fraud, filtering spam, and improving search rankings. Machine learning succeeded partly because it was more realistic. It didn’t need to “think like a human.” It just needed to be predictably useful, especially when fed enough high-quality data.

Neural Networks: A Comeback Story Decades in the Making

Neural networks often feel like a modern invention, but the underlying idea is surprisingly old. Researchers explored simplified “computational neurons” as early as the 1940s, and the perceptron drew attention in the 1950s. The promise was exciting: instead of explicitly programming every rule, a system could adjust internal weights and learn its own representations.
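The idea of adjusting internal weights can be sketched with the classic perceptron learning rule. This is a minimal illustration, assuming a two-input unit with a step activation and the logical AND function as training data; the function names and hyperparameters are chosen here for the example.

```python
def predict(weights, bias, x1, x2):
    """Step activation: output 1 if the weighted sum is positive, else 0."""
    return 1 if weights[0] * x1 + weights[1] * x2 + bias > 0 else 0

def train_perceptron(samples, epochs=10, lr=1):
    """Perceptron learning rule: nudge weights in proportion to the error."""
    weights = [0, 0]
    bias = 0
    for _ in range(epochs):
        for (x1, x2), target in samples:
            error = target - predict(weights, bias, x1, x2)
            weights[0] += lr * error * x1
            weights[1] += lr * error * x2
            bias += lr * error
    return weights, bias

# Truth table for logical AND, used as a tiny training set.
AND_SAMPLES = [((0, 0), 0), ((0, 1), 0), ((1, 0), 0), ((1, 1), 1)]

weights, bias = train_perceptron(AND_SAMPLES)
outputs = [predict(weights, bias, x1, x2) for (x1, x2), _ in AND_SAMPLES]
```

Nothing in the code states the rule for AND explicitly. The weights start at zero and are corrected only when the unit gets an example wrong, which is the "learn instead of program" promise in miniature.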

The problem was timing. Early neural networks didn’t have what they needed to thrive: massive datasets, affordable processing power, and reliable methods for training deeper architectures. Training multi-layer networks was especially difficult for a long time.

Progress accelerated in the 1980s, once backpropagation was popularized as a practical way to compute the gradients that gradient descent needs, making it viable to train more complex networks. Even then, neural networks still had to wait for the rest of the world (hardware, data infrastructure, and real-world demand) to catch up. This is one of the most consistent themes in AI history: the right idea can arrive decades too early, and only becomes transformative when computation and data finally reach the necessary scale.

Deep Learning: When Everything Finally Lined Up

Deep learning isn’t a completely separate category of AI—it’s what happens when neural networks grow large enough, deep enough, and data-hungry enough to learn layered representations on their own. With shallow models, humans often have to hand-design features—give the model an explicit notion of what to look for. With deep models, the network can learn feature hierarchies automatically, building complexity step by step.

A key turning point arrived in 2012, when AlexNet—a convolutional neural network (CNN)—delivered a landmark performance on the ImageNet benchmark. It demonstrated that deep neural networks, trained with large datasets and accelerated computing, could outperform older approaches by a wide margin in computer vision tasks. That moment helped ignite the modern deep learning boom and reshaped how researchers, companies, and investors thought about what was possible.

AI didn’t become today’s powerhouse just because of smarter algorithms. It took a perfect storm of data, new training ideas, and, most importantly, major breakthroughs in computing hardware. When researchers started pairing massive datasets with the parallel horsepower of GPUs, long-stalled problems like computer vision suddenly began to fall. That shift revealed a core truth that still defines modern machine learning: the AI revolution is as much a hardware story as it is a software story.

GPUs were originally designed to push pixels for games, but they turned out to be ideal for the kind of heavy-duty math deep neural networks depend on—especially matrix multiplication (“matmul”) and other linear algebra operations. As deep learning grew, the industry went further by building specialized features for AI workloads, including Tensor Cores and dedicated AI accelerators such as TPUs. Without these changes, deep learning likely would have stayed in academic circles rather than powering real products.

At the same time deep learning was accelerating, another approach to machine intelligence was proving itself in dramatic fashion: reinforcement learning. Instead of learning from labeled examples, reinforcement learning teaches an “agent” through trial and error. The agent takes actions, receives rewards or penalties, and gradually learns strategies that maximize long-term success—similar to how training works for animals and humans. This loop produced some of the most memorable moments in AI history, including AlphaGo, which combined neural networks with search techniques to master a game many considered out of reach for computers. It also sent a clear message: classic symbolic methods never really disappeared—they were recombined with modern learning. Today, reinforcement learning continues to shape robotics, control systems, optimization, and even techniques used to improve and align language models.
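The act, reward, update loop described above can be sketched with tabular Q-learning, one common reinforcement learning method. The corridor environment, reward scheme, and hyperparameters below are assumptions made for illustration, and this toy has nothing to do with how AlphaGo itself was built.

```python
import random

N_STATES = 5        # corridor cells 0..4; reaching cell 4 ends the episode
ACTIONS = (-1, +1)  # index 0 steps left, index 1 steps right

def step(state, action):
    """Toy environment: move along the corridor, reward 1.0 only at the goal."""
    next_state = min(max(state + action, 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    return next_state, (1.0 if done else 0.0), done

def train(episodes=500, alpha=0.5, gamma=0.9, epsilon=0.2, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]  # q[state][action index]
    for _ in range(episodes):
        state = 0
        for _step in range(100):  # cap on episode length
            best = max(q[state])
            greedy = [a for a in range(2) if q[state][a] == best]
            # Explore with probability epsilon, else act greedily (random tie-break).
            a = rng.randrange(2) if rng.random() < epsilon else rng.choice(greedy)
            next_state, reward, done = step(state, ACTIONS[a])
            # Q-learning target: immediate reward plus discounted best future value.
            target = reward + (0.0 if done else gamma * max(q[next_state]))
            q[state][a] += alpha * (target - q[state][a])
            state = next_state
            if done:
                break
    return q

q_table = train()
# Greedy action for each non-terminal cell after training.
policy = ["right" if right > left else "left" for left, right in q_table[:-1]]
```

The agent is never told that moving right is correct. It only sees a reward at the far end, and the value estimates propagate backwards along the corridor over many episodes.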

Then came a breakthrough that transformed language AI almost overnight: the Transformer. Before Transformers, most natural language processing relied on recurrent neural networks that processed text sequentially, word by word. That step-by-step approach created major speed and scaling limits. Transformers replaced the bottleneck with attention mechanisms that let models consider many tokens at once, making training far more efficient and enabling much larger systems to be built.
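A minimal sketch of the attention idea, written in plain Python for readability, follows. It implements single-head scaled dot-product attention without the learned projection matrices, masking, or multiple heads that a real Transformer layer adds; the toy token vectors are made up for the example.

```python
import math

def softmax(scores):
    """Turn raw scores into positive weights that sum to 1."""
    peak = max(scores)  # subtract the max for numerical stability
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def attention(queries, keys, values):
    """Scaled dot-product attention over lists of vectors."""
    dim = len(keys[0])
    outputs = []
    for q in queries:
        # Score this query against every key at once, not step by step.
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(dim) for k in keys]
        weights = softmax(scores)
        # Each output is a weighted average of the value vectors.
        outputs.append([
            sum(w * v[j] for w, v in zip(weights, values))
            for j in range(len(values[0]))
        ])
    return outputs

# Three hypothetical 2-dimensional token vectors attending to each other.
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
mixed = attention(tokens, tokens, tokens)
```

Each query is scored against all keys independently of the others, so the loop over queries has no sequential dependency. In practice it becomes a pair of matrix multiplications, which is why the design parallelizes so much better than a recurrent network.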

The 2017 paper “Attention Is All You Need” effectively launched the large language model era. Transformers scaled exceptionally well across modern data centers, and their influence now stretches across today’s biggest AI capabilities—large language models, multimodal systems, and many modern image generation tools. If there’s one architecture that defines the current era of AI, it’s the Transformer.

From there, AI shifted from being mostly a prediction engine into something that can create. Generative AI isn’t just one technique—it blends multiple areas such as probabilistic modeling, neural sequence modeling, latent variable approaches, adversarial methods, diffusion processes, and large-scale pre-training. The goal is simple to state but hard to achieve: model real data so well that the system can generate convincing new examples—text, images, and more.

Large language models became the most visible face of this shift. By learning to predict the next token across enormous amounts of text, they developed surprisingly broad skills: summarization, translation, coding assistance, and more. A major milestone was GPT-3, which demonstrated that scaling up models doesn’t merely improve accuracy—it can unlock new capabilities that weren’t explicitly programmed or directly trained as standalone tasks. On the visual side, diffusion models changed the rules by learning how to reverse a noise process, producing images with remarkable detail. Just as important as the models themselves was the interface: natural language became a practical way for people to interact with computing systems, lowering the barrier to using powerful tools.
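Next-token prediction can be illustrated at a very small scale with a bigram counter, which guesses the next word from the current one. This is only a sketch of the training objective, assuming whitespace tokenization and a made-up one-line corpus; large language models use neural networks over much longer contexts rather than a lookup table.

```python
from collections import Counter, defaultdict

def train_bigram(text):
    """Count, for every token, which tokens follow it and how often."""
    tokens = text.split()
    counts = defaultdict(Counter)
    for current, following in zip(tokens, tokens[1:]):
        counts[current][following] += 1
    return counts

def predict_next(counts, token):
    """Return the most frequent follower of token, or None if unseen."""
    followers = counts.get(token)
    if not followers:
        return None
    return followers.most_common(1)[0][0]

# Hypothetical toy corpus.
corpus = "the cat sat on the mat and the cat slept"
model = train_bigram(corpus)
guess = predict_next(model, "the")
```

The table can only repeat pairs it has seen, which hints at why scale and richer models matter: broader skills appear only when the predictor can generalize beyond exact matches.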

Now the conversation is moving beyond generation into action. That’s where agentic AI comes in. If generative AI is focused on producing content, agentic AI is designed to achieve goals. These systems don’t stop after responding to a prompt. They use memory, tools, and iterative planning to break tasks into steps, gather information, and adjust as they go. Approaches like ReAct helped formalize this “reason-then-act” cycle, turning language models into something closer to a coordinator that can operate within larger workflows.

Interestingly, agentic AI also feels like a return to early ambitions of AI: planning, tool use, and goal-seeking behavior—the classic focus of symbolic systems. The difference is that today’s “brain” is often a large language model with vast internal knowledge and flexible reasoning patterns, rather than a rigid hand-written rulebook. The direction is increasingly hybrid: large models acting as orchestrators that call specialized tools and systems when needed.

Even with all this progress, AI is still wrestling with long-running weaknesses. Traditional symbolic AI could be fragile and break when conditions changed. Modern deep learning systems can be powerful but opaque, and are often described as black boxes. Generative AI can hallucinate and produce convincing but incorrect information, and agentic systems can magnify small mistakes into serious failures as they run through multiple steps. These risks are pushing safety and governance into the mainstream technical conversation, including structured frameworks like NIST’s AI Risk Management Framework and major regulations like the European Union’s Artificial Intelligence Act, which entered into force on August 1, 2024.

So where does AI go next? The most likely answer isn’t a single magical discovery. It’s convergence. AI systems are trending toward being more multimodal, more aware of tools, more persistent over time, and more embedded into real software loops that run continuously rather than finishing after one response. Future agents won’t just “chat.” They’ll coordinate complex workflows across longer time horizons, sometimes working alongside multiple specialized agents at once.

There’s also a growing realization that scale alone may not deliver the next leap. Bigger models helped ignite the current era, but the next stage may be defined by efficiency, grounding, and reliability. In other words, the future may belong less to sheer parameter counts and more to better systems engineering—building robust hybrids that combine neural networks’ pattern recognition with the precision, memory, and verifiability of symbolic tools and external systems. In a twist that feels almost poetic, the future of AI may look like a reunion with its past.

The larger story here is that AI keeps redefining what “intelligence” means. It began with logic, shifted toward statistics, evolved into representation learning, and now is becoming something broader: systems that can generate, retrieve, reason, and act. Each wave solved key problems while introducing new ones. Understanding that arc matters, because it reminds us that today’s AI boom isn’t magic—it’s the latest chapter in a long technical evolution. If history offers any lesson, the next major breakthrough probably won’t come from discarding earlier ideas, but from recombining them in smarter, more reliable ways.