AMD has shared new architectural details of its Instinct MI300X “CDNA 3” GPUs for AI workloads, ahead of the MI325X launch scheduled for next quarter. The MI300X is built on CDNA 3, the third generation of the compute-focused architecture behind AMD’s Instinct accelerators.

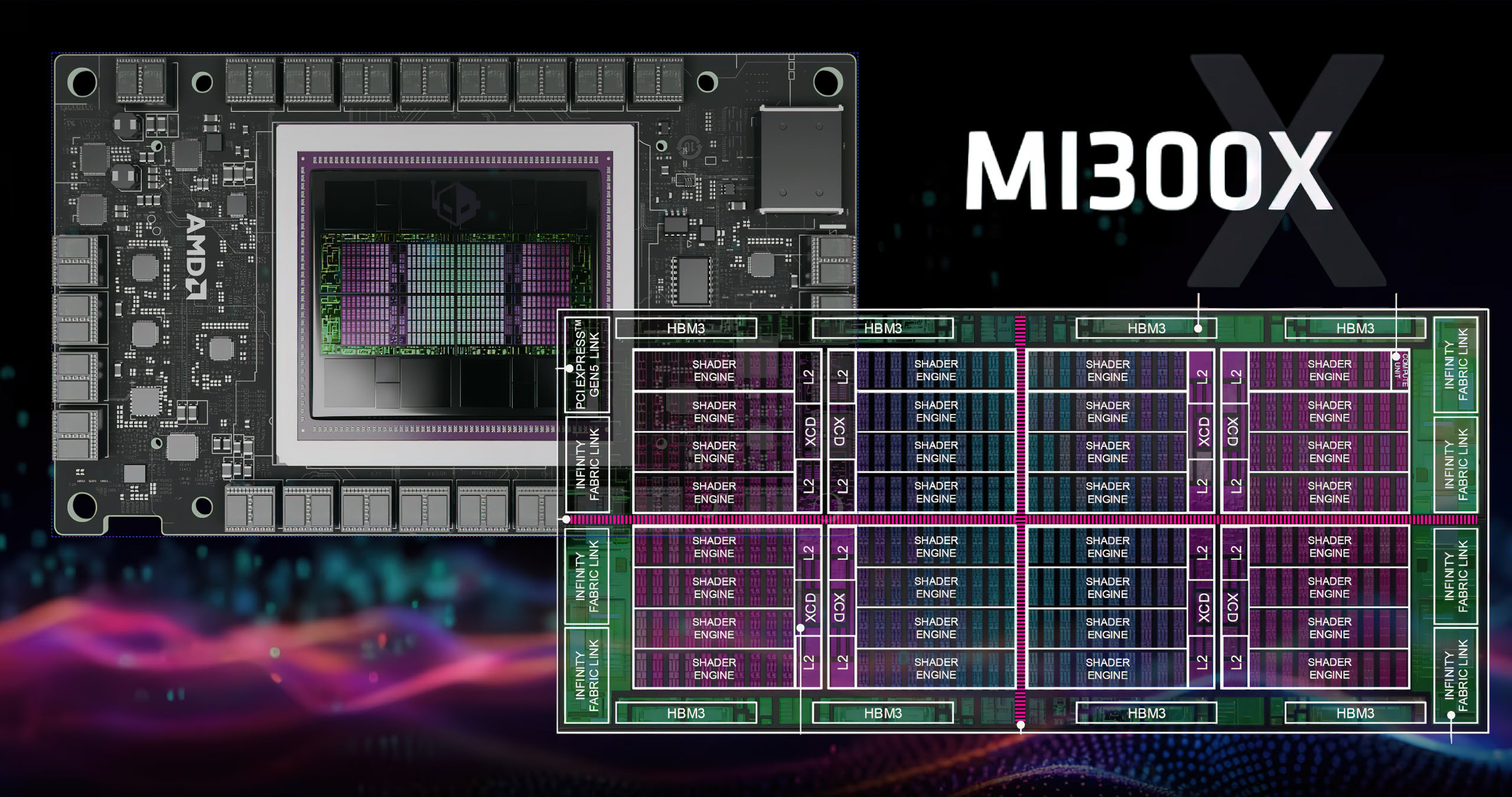

The MI300X packs 153 billion transistors and combines TSMC’s 5nm and 6nm FinFET process nodes. The GPU includes eight compute chiplets, each containing four shader engines, with each engine hosting 10 compute units. This layout yields 320 physical compute units across the package, of which 304 are enabled in the shipping product.
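The compute unit total follows directly from the chiplet layout. As a quick sanity check, here is a minimal Python sketch using only the figures quoted above; the constant names are illustrative and not AMD terminology.

```python
# Layout figures as quoted in the article.
CHIPLETS = 8             # compute chiplets per package
ENGINES_PER_CHIPLET = 4  # engines per chiplet
CUS_PER_ENGINE = 10      # compute units per engine

# Multiply out the hierarchy: engine -> chiplet -> package.
cus_per_chiplet = ENGINES_PER_CHIPLET * CUS_PER_ENGINE
total_cus = CHIPLETS * cus_per_chiplet

print(f"{cus_per_chiplet} CUs per chiplet, {total_cus} CUs per package")
```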

On performance, AMD cites the following peak-throughput gains over the previous-generation MI250X:

– 1.7x speedup in Vector FP64 and Matrix FP64 operations.

– 3.4x speedup in Vector FP32 and Matrix FP16/BF16 operations.

– 6.8x speedup in Matrix INT8 operations.

The CDNA 3 architecture doubles throughput for low-precision matrix operations, adds support for structured sparsity, and handles numerical formats including INT8, FP8, FP16, and BF16. Each compute unit contains a scheduler, a local data share, vector registers, vector units, a matrix core, and an L1 cache.

The MI300X also uses an 8-stack HBM3 memory design, providing 1.5x the capacity and 1.6x the bandwidth of the previous generation. The extra memory lets it handle larger AI models: AMD quotes support for large language models of up to 70 billion parameters in training and 680 billion parameters in inference.
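To see how memory capacity bounds model size, the following is a rough back-of-the-envelope sketch, not AMD’s methodology. It rests on several assumptions that do not come from the article: 192 GB of HBM3 per MI300X (the published capacity), an eight-GPU server, 2 bytes per parameter for FP16 inference weights, and roughly 16 bytes per parameter for mixed-precision training with an Adam-style optimizer. Activations, KV cache, and framework overhead are ignored.

```python
def footprint_gb(params_billions: float, bytes_per_param: float) -> float:
    """Approximate memory footprint in GB (1 GB = 1e9 bytes)."""
    # 1e9 parameters * bytes per parameter / 1e9 bytes per GB
    return params_billions * bytes_per_param

HBM_PER_GPU_GB = 192  # assumption: MI300X capacity, not stated in the article
GPUS_PER_SERVER = 8   # assumption: eight-GPU server
server_hbm_gb = HBM_PER_GPU_GB * GPUS_PER_SERVER

# Assumed bytes per parameter; real workloads vary widely.
inference_gb = footprint_gb(680, 2)  # FP16 weights only
training_gb = footprint_gb(70, 16)   # weights, gradients, optimizer state

for name, need in [("inference, 680B", inference_gb), ("training, 70B", training_gb)]:
    verdict = "fits" if need <= server_hbm_gb else "does not fit"
    print(f"{name}: ~{need} GB needed vs {server_hbm_gb} GB available ({verdict})")
```

Under these assumptions both quoted model sizes fit within a single server’s pooled HBM, which is consistent with the figures being per-server rather than per-GPU limits, though the article does not say so explicitly.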

Additionally, AMD’s spatial partitioning feature allows the XCDs (accelerator complex dies, the compute chiplets) to be grouped flexibly, so they can operate either together as a single processor or as multiple independent GPUs, depending on workload demands.
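The grouping idea can be illustrated with a short Python sketch. This is a conceptual model only: `partition_xcds` is a hypothetical helper, not part of any AMD driver or ROCm API, and the restriction to equal-sized groups is an assumption made for simplicity.

```python
from typing import List

def partition_xcds(num_xcds: int, num_partitions: int) -> List[List[int]]:
    """Split XCD indices into equal-sized groups, one group per logical GPU."""
    if num_partitions <= 0 or num_xcds % num_partitions != 0:
        raise ValueError("partition count must evenly divide the number of XCDs")
    size = num_xcds // num_partitions
    return [list(range(i * size, (i + 1) * size)) for i in range(num_partitions)]

# All eight XCDs acting as a single processor.
print(partition_xcds(8, 1))
# The same package exposed as four logical GPUs of two XCDs each.
print(partition_xcds(8, 4))
```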

In October, AMD plans to extend the Instinct lineup with the MI325X, which moves to HBM3e memory and raises capacity to as much as 288 GB. AMD expects the MI325X to deliver:

– 2x Memory

– 1.3x Memory Bandwidth

– 1.3x Peak Theoretical FP16 and FP8

– 2x Model Size per Server

AMD will not have the field to itself: NVIDIA’s Blackwell Ultra, expected next year, is anticipated to bring similar memory upgrades.