AMD is positioning itself as a major contender in the artificial intelligence (AI) accelerator market with the announcement of an expanded lineup of Instinct AI accelerators. Aimed at data centers and the cloud, the upcoming Instinct MI325X, MI350X, and MI400 bring improvements in memory, compute performance, and architecture, and are designed to compete directly with NVIDIA’s offerings.

AMD’s Forward-Thinking AI Accelerator Roadmap: MI325X, MI350X, and MI400

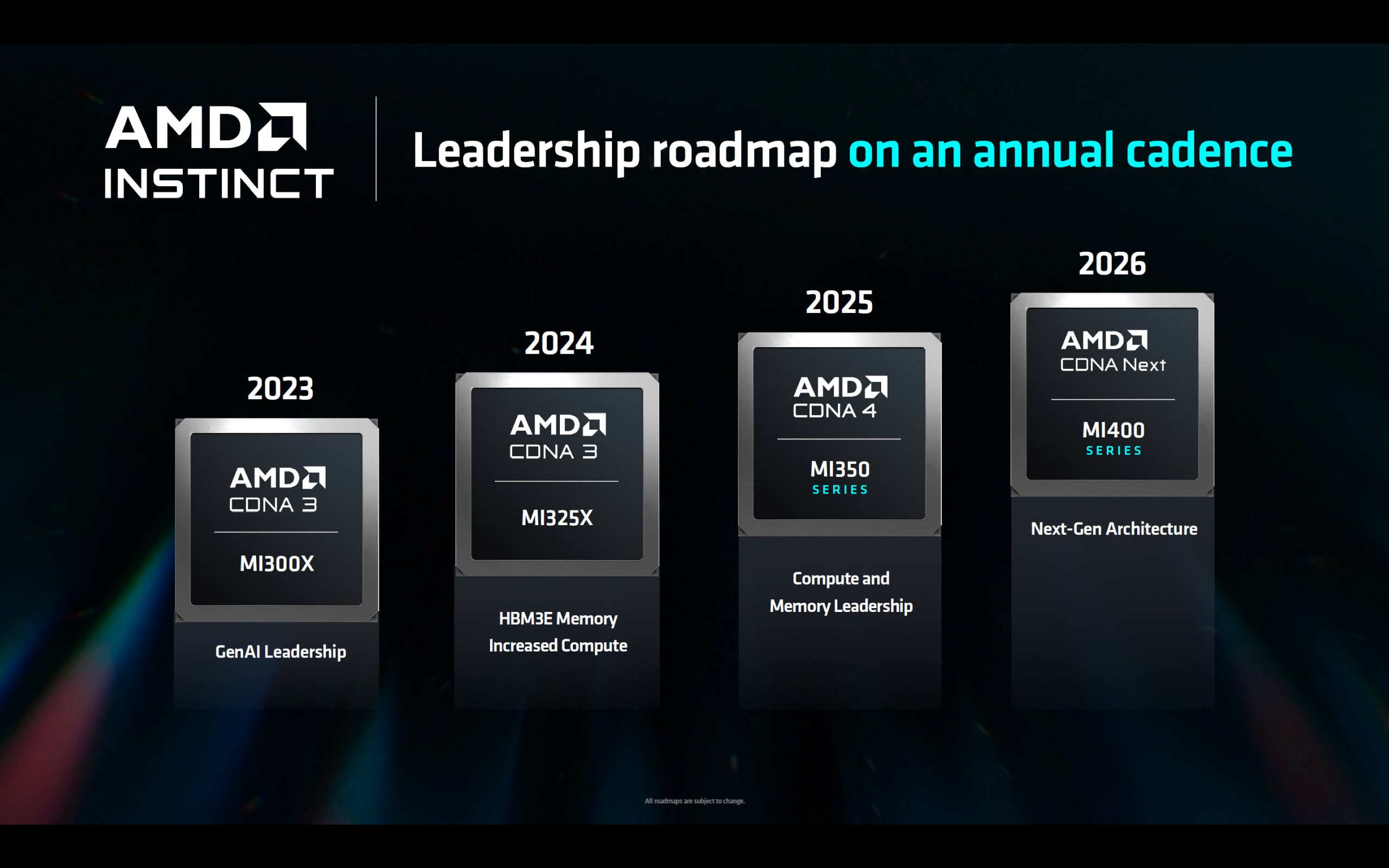

AMD plans to introduce a new or refreshed AI accelerator every year. This annual cadence follows similar pacing by NVIDIA and highlights the intensifying rivalry in AI acceleration.

The Instinct MI325X AI Accelerator: A 2024 Powerhouse

First in line is the AMD Instinct MI325X AI Accelerator, which builds upon the CDNA 3 architecture of the existing MI300 series. The MI325X was previewed at Computex 2024 and is expected to launch in the fourth quarter of 2024. This enhanced model features:

– 288 GB of HBM3E memory

– 6 TB/s memory bandwidth

– 1.3 PFLOPS of FP16 compute performance

– 2.6 PFLOPS of FP8 compute performance

– Capability to manage up to 1 trillion parameters per server
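
The per-server parameter figure can be sanity-checked with simple arithmetic. The sketch below is a back-of-envelope estimate, not AMD's own methodology: it assumes eight accelerators per server (the article does not state the count), counts only model weights stored in FP16 at 2 bytes per parameter, and uses decimal gigabytes. The function names are illustrative.

```python
# Back-of-envelope check: do the FP16 weights of a 1-trillion-parameter
# model fit in one server's combined HBM? Weights only; activations,
# KV cache, and optimizer state are deliberately ignored.

HBM_PER_ACCELERATOR_GB = 288   # from the spec list above
ACCELERATORS_PER_SERVER = 8    # assumption: not stated in the article
BYTES_PER_PARAM_FP16 = 2       # FP16 stores each parameter in 16 bits


def weights_size_gb(num_params: int, bytes_per_param: int) -> float:
    """Weight footprint in decimal gigabytes (1 GB = 1e9 bytes)."""
    return num_params * bytes_per_param / 1e9


def server_hbm_gb(per_accelerator_gb: int, accelerators: int) -> int:
    """Combined HBM capacity of all accelerators in one server."""
    return per_accelerator_gb * accelerators


model_gb = weights_size_gb(1_000_000_000_000, BYTES_PER_PARAM_FP16)
capacity_gb = server_hbm_gb(HBM_PER_ACCELERATOR_GB, ACCELERATORS_PER_SERVER)

print(f"FP16 weights: {model_gb:.0f} GB")
print(f"Server HBM:   {capacity_gb} GB")
print(f"Fits: {model_gb <= capacity_gb}")
```

Under these assumptions the weights take about 2,000 GB against 2,304 GB of combined HBM, which is consistent with the 1-trillion-parameter claim but leaves limited headroom for anything beyond the weights.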

NVIDIA’s H200 carries 141 GB of HBM3E at 4.8 TB/s, so the MI325X offers roughly twice the memory capacity and about 1.25 times the memory bandwidth. AMD also claims higher peak compute performance, positioning the MI325X as a competitive option for data centers seeking to enhance their AI capabilities.

Looking to the Next Generation: The Instinct MI350X and MI400

Moving beyond 2024, AMD sets its sights on the Instinct MI350 series, planned for 2025. Built on a 3nm process node and retaining up to 288 GB of HBM3E memory, these accelerators add support for the lower-precision FP4 and FP6 data types. The MI350 series will use the next-generation CDNA 4 architecture and is expected to offer Open Accelerator Module (OAM) compatibility.
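
To illustrate why narrower data types matter at a fixed memory capacity, the sketch below estimates how many parameters' worth of weights fit in 288 GB at each precision. It is a rough estimate under stated assumptions: it counts raw weight bits only and ignores any scaling factors or metadata that real low-precision formats may carry, as well as activations and caches. The helper name is illustrative.

```python
# Rough estimate: how many parameters fit in 288 GB of HBM at each
# precision, counting raw weight bits only.

HBM_GB = 288  # per-accelerator capacity quoted for the MI350 series

BITS_PER_PARAM = {"FP16": 16, "FP8": 8, "FP6": 6, "FP4": 4}


def max_params_billions(hbm_gb: int, bits: int) -> float:
    """Largest parameter count, in billions, whose weights fit in hbm_gb.

    hbm_gb decimal gigabytes hold hbm_gb * 8 billion bits, so dividing
    by the bits per parameter gives billions of parameters.
    """
    return hbm_gb * 8 / bits


for name, bits in BITS_PER_PARAM.items():
    print(f"{name}: ~{max_params_billions(HBM_GB, bits):.0f}B parameters")
```

Halving the bits per parameter doubles the number of weights that fit, so moving from FP8 to FP4 takes the raw capacity from about 288 billion to about 576 billion parameters per accelerator.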

By 2026, AMD plans to unveil the Instinct MI400 series under the banner of “CDNA Next.” This future-focused series aims to bring further advancements to AMD’s AI accelerator lineup, potentially setting a new standard in AI technology deployment for data centers.

Strengthening AMD’s Position in the AI Race

AMD emphasizes that the MI300 has been its fastest-ramping product, with numerous partners integrating the technology into their server offerings. This adoption lays a solid foundation for AMD’s AI ambitions. The roadmap underlines the company’s commitment to delivering increasingly powerful and efficient AI accelerators to meet the growing demands of data centers and cloud infrastructure, challenging NVIDIA’s dominant position in the market.

As AMD continues to expand its AI acceleration capabilities, data centers and cloud providers can look forward to the performance and capacity needed to power demanding AI applications. With each new release, AMD reinforces its role in the AI accelerator space and keeps pressure on the pace of progress in AI infrastructure.

With advancements in memory, compute performance, and support for new data types, AMD’s Instinct AI accelerator lineup is poised to drive innovation in machine learning and AI, embodying the company’s strategic outlook for the future of artificial intelligence in the cloud and data centers.