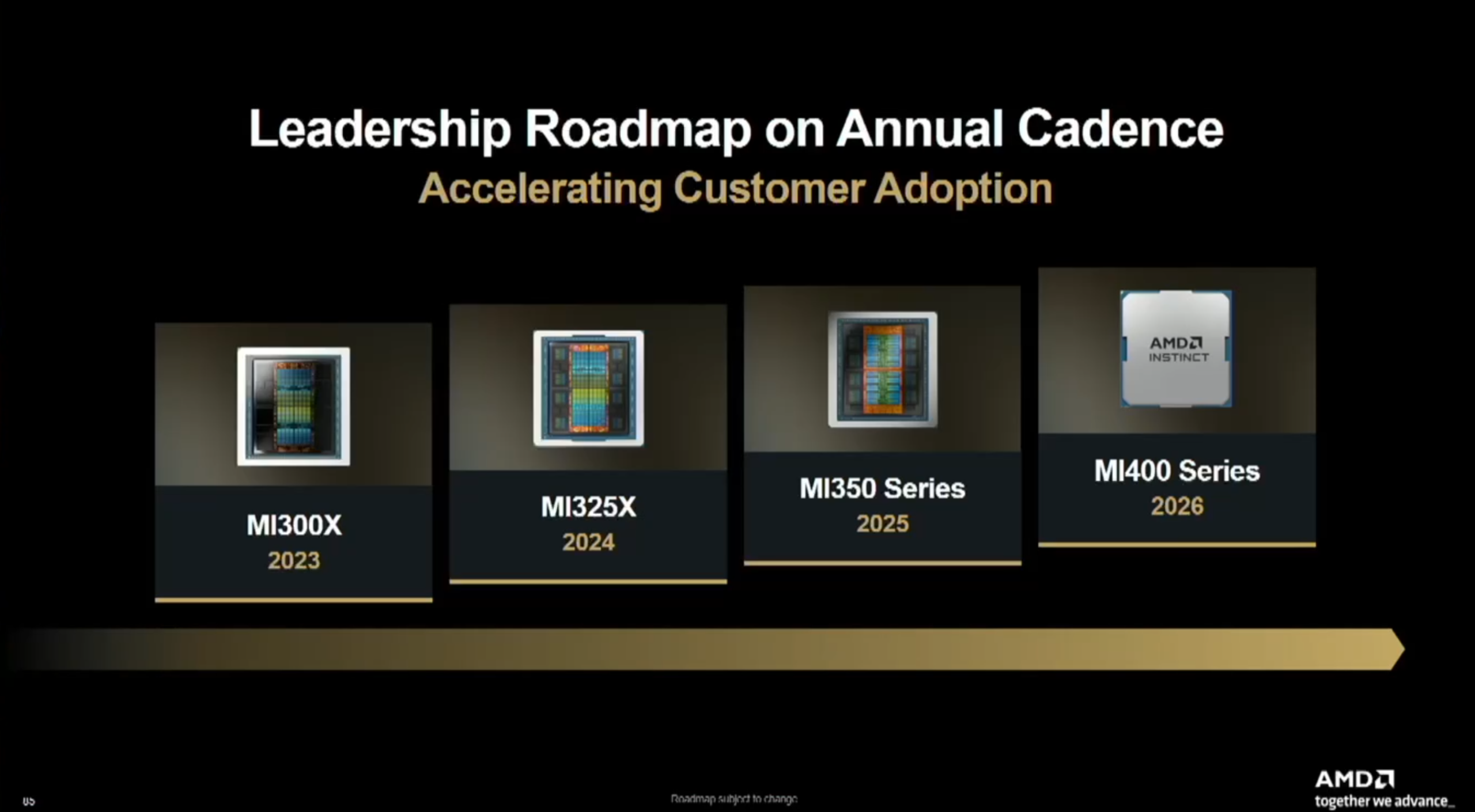

AMD is gearing up for a major push in data center AI with its Instinct MI400 and MI500 accelerators, setting up a direct showdown with NVIDIA's latest platforms. Detailed at AMD's Financial Analyst Day 2025, these next-gen GPUs underscore AMD's annual launch cadence and its focus on faster compute, larger memory, and robust networking for AI training, inference, and HPC at rack scale.

What’s coming with Instinct MI400

The Instinct MI400 family, due in 2026, is built on the CDNA 5 architecture and is designed to raise both performance and memory bandwidth for large-scale AI workloads.

Key highlights:

– Up to 40 PFLOPs FP4 and 20 PFLOPs FP8, doubling the compute of the MI350 generation

– HBM4 memory with a 50% capacity jump to 432 GB (from 288 GB HBM3e on MI350)

– Massive 19.6 TB/s memory bandwidth, up from 8 TB/s on MI350

– 300 GB/s scale-out bandwidth per GPU for high-efficiency cluster scaling

– Expanded AI formats for higher throughput

– Standards-based rack-scale networking: UALink over Ethernet (UALoE), UALink (UAL), and Ultra Ethernet Consortium (UEC) Ethernet

– 8-chiplet MCM design and OAM form factor with air or liquid cooling options

AMD has positioned MI400 directly against NVIDIA’s Vera Rubin platform. At a high level, MI400 targets:

– 1.5x memory capacity versus competing solutions

– Comparable memory bandwidth, FP4/FP8 FLOPs, and scale-up bandwidth

– 1.5x scale-out bandwidth versus competition
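The "1.5x" claims can be read backwards to see what baseline AMD is implicitly comparing against. The sketch below assumes the ratios apply to the per-GPU MI400 figures listed earlier (432 GB HBM4, 300 GB/s scale-out). The function name `implied_baseline` is hypothetical, and its outputs are values implied by AMD's positioning, not published competitor specifications.

```python
# Back out the competitor baseline implied by AMD's "1.5x" positioning.
# Assumption: the ratio applies to the per-GPU MI400 figures quoted in this
# article. Results are implied values, not official competitor specs.

MI400_MEMORY_GB = 432        # HBM4 capacity per GPU
MI400_SCALE_OUT_GB_S = 300   # scale-out bandwidth per GPU
CLAIMED_RATIO = 1.5          # "1.5x versus competition"


def implied_baseline(mi400_value: float, ratio: float = CLAIMED_RATIO) -> float:
    """Return the baseline value that makes mi400_value / baseline == ratio."""
    return mi400_value / ratio


if __name__ == "__main__":
    mem = implied_baseline(MI400_MEMORY_GB)
    scale_out = implied_baseline(MI400_SCALE_OUT_GB_S)
    print(f"Implied competitor memory capacity: {mem:.0f} GB")
    print(f"Implied competitor scale-out bandwidth: {scale_out:.0f} GB/s")
```

Under that assumption, the memory claim corresponds to a 288 GB baseline and the scale-out claim to a 200 GB/s baseline.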

Two MI400 variants target distinct workloads:

– Instinct MI455X for large-scale AI training and inference

– Instinct MI430X for HPC and sovereign AI, adding hardware FP64 and hybrid CPU+GPU compute, with the same HBM4 memory foundation

Why this leap matters

Compared to the MI350 series, MI400 targets memory bottlenecks and cluster-level throughput, two of the biggest constraints in training and serving frontier models. The move to HBM4 and the jump in FP4/FP8 performance address the needs of next-gen LLMs and multimodal systems, while the MI430X's hardware FP64 targets high-precision HPC tasks.
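One way to see why bandwidth matters is a back-of-envelope estimate for memory-bound work such as small-batch LLM decoding, where each generated token requires reading the model weights from HBM. The sketch below computes how long one full pass over HBM takes at peak bandwidth, using only the capacity and bandwidth figures quoted in this article. It assumes decimal units and that peak bandwidth is sustained, which real workloads will not fully achieve, so the results are rough lower bounds. The names `SPECS` and `full_sweep_ms` are hypothetical.

```python
# Rough lower bound on the time to stream all of HBM once at peak bandwidth.
# Assumptions: decimal units, peak bandwidth fully sustained (optimistic).

SPECS = {
    # name: (HBM capacity in GB, peak memory bandwidth in TB/s)
    "MI350": (288, 8.0),
    "MI400": (432, 19.6),
}


def full_sweep_ms(capacity_gb: float, bandwidth_tb_s: float) -> float:
    """Milliseconds to read capacity_gb once; 1 GB at 1 TB/s takes 1 ms."""
    return capacity_gb / bandwidth_tb_s


if __name__ == "__main__":
    for name, (cap, bw) in SPECS.items():
        print(f"{name}: {full_sweep_ms(cap, bw):.1f} ms per full HBM read")
    old_cap, old_bw = SPECS["MI350"]
    new_cap, new_bw = SPECS["MI400"]
    print(f"Bandwidth ratio: {new_bw / old_bw:.2f}x, "
          f"capacity ratio: {new_cap / old_cap:.2f}x")
```

With these figures, MI400 holds 1.5x more data yet reads all of it in about 22 ms versus 36 ms on MI350, because bandwidth grows faster (about 2.45x) than capacity.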

Looking ahead to Instinct MI500 in 2027

AMD’s roadmap continues with the Instinct MI500 family slated for 2027. While detailed specs remain under wraps, AMD says MI500 will deliver next-generation gains across compute, memory, and interconnect, powering the next wave of AI racks. The company’s annual cadence means faster, more predictable upgrades for data centers aligning their infrastructure around rapid AI iteration cycles.

Quick generational context

– MI400 (CDNA 5): Up to 40 PFLOPs FP4, 20 PFLOPs FP8; 432 GB HBM4 at 19.6 TB/s; OAM; standards-based networking; 8-chiplet MCM

– MI350X (CDNA 4): Up to 5 PFLOPs FP8; 288 GB HBM3e; up to 8 TB/s memory bandwidth

– MI325X and MI300X (CDNA 3): Up to 2.6 PFLOPs FP8; 256 GB HBM3e (MI325X) and 192 GB HBM3 (MI300X)

– MI250X (CDNA 2): 128 GB HBM2e
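The per-GPU memory figures above can be lined up chronologically to show how capacity has grown step by step. This sketch uses only the capacities listed in this section. The ordering (MI300X before MI325X) and the helper name `generational_steps` are assumptions made for illustration.

```python
# Per-GPU HBM capacity by generation, ordered oldest to newest
# (figures from the generational list in this article).
CAPACITY_GB = [
    ("MI250X", "CDNA 2", 128),
    ("MI300X", "CDNA 3", 192),
    ("MI325X", "CDNA 3", 256),
    ("MI350X", "CDNA 4", 288),
    ("MI400", "CDNA 5", 432),
]


def generational_steps(rows):
    """Yield (previous name, current name, capacity ratio) for consecutive rows."""
    for (prev_name, _, prev_gb), (name, _, gb) in zip(rows, rows[1:]):
        yield prev_name, name, gb / prev_gb


if __name__ == "__main__":
    for prev_name, name, ratio in generational_steps(CAPACITY_GB):
        print(f"{prev_name} -> {name}: {ratio:.3f}x")
    first, last = CAPACITY_GB[0][2], CAPACITY_GB[-1][2]
    print(f"MI250X -> MI400 overall: {last / first:.3f}x")
```

The MI350X to MI400 step (1.5x) is the largest single jump since MI300X, and overall capacity rises about 3.4x from MI250X.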

What it means for AI buyers and builders

If you’re scaling training clusters, deploying high-throughput inference, or building sovereign AI and HPC systems, MI400’s HBM4 capacity, expanded AI formats, and rack-scale networking are the headline advantages. With MI500 on the horizon, AMD’s roadmap signals sustained pressure on the high-end AI accelerator market and more options for data centers that want open, scalable platforms.