AMD is making a strong push in AI hardware with its latest release, the Instinct MI325X AI GPU accelerator, introduced at the company’s “Advancing AI 2024” event. Built on AMD’s CDNA 3 architecture, the MI325X carries 256 GB of HBM3e memory, a significant step up from the MI300X. If that sounds impressive, hold onto your hat, because AMD plans to up the ante next year with the MI355X, which will feature 288 GB of HBM3e memory.

The Instinct line isn’t just about adding more memory. AMD’s work with top-tier AI firms such as Meta, OpenAI, and Microsoft underscores its focus on performance, straightforward integration, and a broad, open ecosystem. That focus has earned AMD substantial support from leading OEMs and cloud partners, enabling a swift rollout of systems that meet the growing demand for AI across industries.

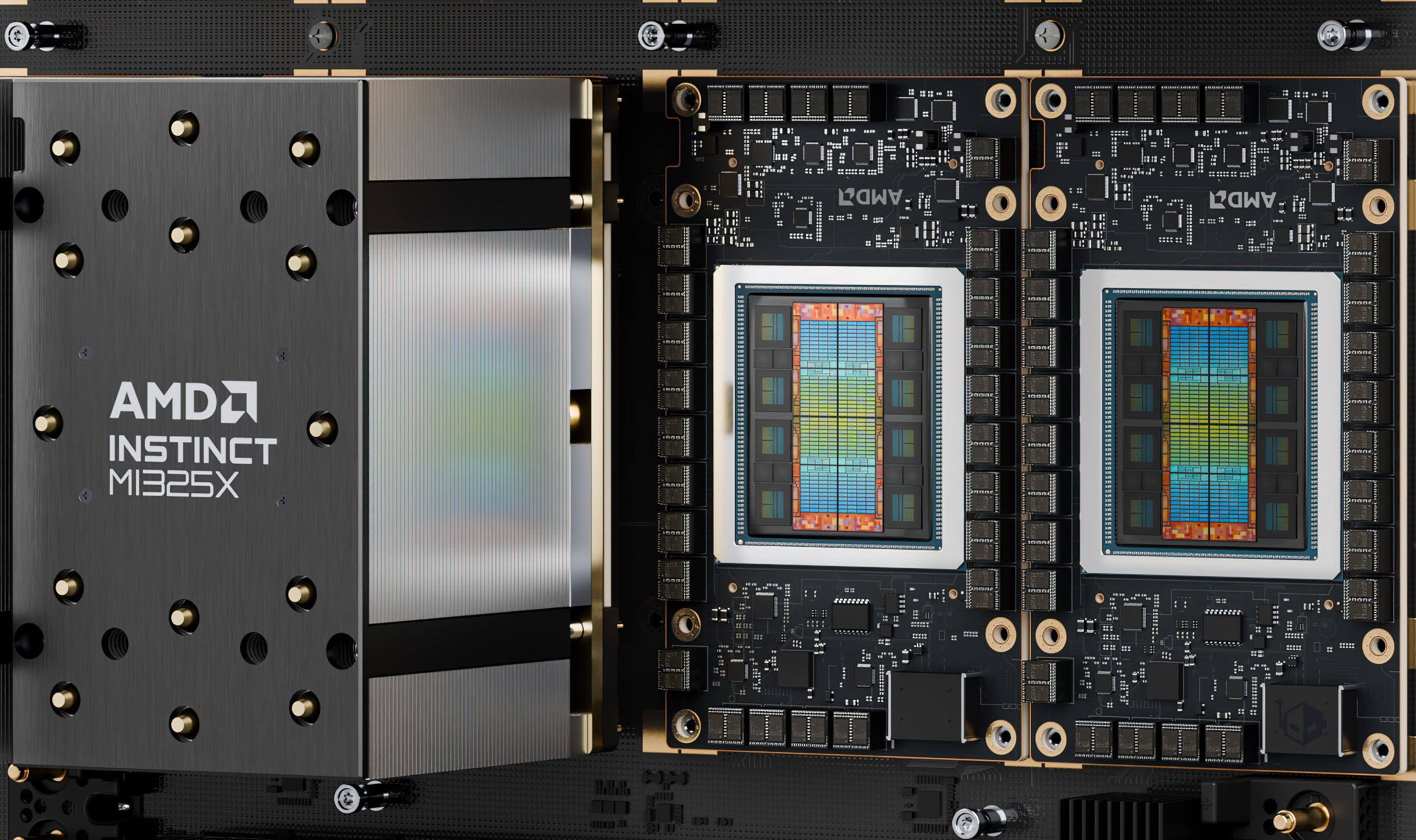

The MI325X itself is a mid-cycle upgrade with 256 GB of HBM3e memory, delivered through 16-Hi stacks, and 6 TB/s of memory bandwidth. The accelerator is built on AMD’s CDNA 3 architecture and promises 2.6 PFLOPs of FP8 and 1.3 PFLOPs of FP16 performance, all from a chip containing 153 billion transistors. Production is slated for the fourth quarter of 2024, with server solutions featuring these GPUs expected in early 2025.
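To put the 256 GB figure in perspective, a rough sizing sketch helps. The Python below estimates how much memory a model’s weights alone occupy at FP16 and FP8, then checks that against a single accelerator’s capacity. The parameter counts are illustrative assumptions rather than models named by AMD, and the estimate ignores KV cache, activations, and framework overhead, so real headroom will be smaller.

```python
# Rough weight-only sizing against a single accelerator's memory capacity.
# Assumption: 1 GB = 1e9 bytes; KV cache, activations, and runtime overhead are ignored.

BYTES_PER_PARAM = {"fp16": 2.0, "fp8": 1.0}
MI325X_CAPACITY_GB = 256.0  # capacity quoted in the article


def weight_footprint_gb(params_billion: float, dtype: str) -> float:
    """Approximate size of the model weights in GB for the given data type."""
    # (params_billion * 1e9 params) * bytes_per_param / 1e9 bytes per GB
    return params_billion * BYTES_PER_PARAM[dtype]


def fits_on_one_gpu(params_billion: float, dtype: str,
                    capacity_gb: float = MI325X_CAPACITY_GB) -> bool:
    """True if the weights alone fit within the given memory capacity."""
    return weight_footprint_gb(params_billion, dtype) <= capacity_gb


if __name__ == "__main__":
    # Hypothetical model sizes, in billions of parameters.
    for size in (70, 180, 405):
        for dtype in ("fp16", "fp8"):
            gb = weight_footprint_gb(size, dtype)
            verdict = "fits" if fits_on_one_gpu(size, dtype) else "does not fit"
            print(f"{size}B @ {dtype}: {gb:.0f} GB of weights, {verdict} in 256 GB")
```

Under these assumptions, a 70-billion-parameter model fits on one card at FP16, while a 180-billion-parameter model only fits once its weights are stored at FP8.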

The performance claims are equally exciting. According to AMD, the MI325X outpaces NVIDIA’s H200 by up to 40% on certain AI inference workloads, such as Mixtral 8x7B, with smaller gains in other applications. For AI training, AMD says the MI325X delivers performance equal to or marginally better than H200 platforms.

AMD is already looking further ahead. Next year brings the MI355X, based on the CDNA 4 architecture and built on a 3nm process. This GPU extends memory capacity to 288 GB, adds support for the newer FP4 and FP6 data types, and targets a 35x boost in AI inference performance over the preceding CDNA 3 architecture, all while improving memory and networking efficiency.

The MI355X won’t just push boundaries; it will leap over them. AMD projects 80% more FP16 and FP8 performance than the MI325X, and compute performance of up to 9.2 PFLOPs with the FP6 and FP4 data formats. Platforms featuring the MI355X are expected to debut in late 2025, bringing significant memory and bandwidth upgrades with them.
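The arithmetic behind those projections is easy to check. The sketch below applies the quoted 80% uplift to the MI325X’s FP16 and FP8 figures and compares the 9.2 PFLOPs FP4/FP6 number with the implied FP8 rate. The resulting values are derived from the numbers in this article, not taken from an AMD specification sheet, so treat them as estimates.

```python
# Back-of-the-envelope check of the MI355X projections quoted in the article.
# All inputs come from the article; the outputs are derived estimates, not AMD specs.

MI325X_PFLOPS = {"fp16": 1.3, "fp8": 2.6}  # quoted MI325X peak figures
UPLIFT = 1.8                                # "80% more" than the MI325X
MI355X_FP4_FP6_PFLOPS = 9.2                 # quoted MI355X FP4/FP6 figure


def implied_mi355x_pflops() -> dict:
    """Scale each MI325X figure by the quoted uplift, rounded to two decimals."""
    return {dtype: round(value * UPLIFT, 2) for dtype, value in MI325X_PFLOPS.items()}


def fp4_over_fp8_ratio() -> float:
    """How many times faster the quoted FP4/FP6 rate is than the implied FP8 rate."""
    return MI355X_FP4_FP6_PFLOPS / (MI325X_PFLOPS["fp8"] * UPLIFT)


if __name__ == "__main__":
    for dtype, pflops in implied_mi355x_pflops().items():
        print(f"Implied MI355X {dtype}: {pflops} PFLOPs")
    print(f"FP4/FP6 vs implied FP8: {fp4_over_fp8_ratio():.2f}x")
```

The implied figures land at roughly 2.3 PFLOPs for FP16 and 4.7 PFLOPs for FP8, and the FP4/FP6 number works out to about twice the FP8 rate, which is what one would expect from halving the width of each operand.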

On the software side, AMD continues to refine its ROCm stack. With ROCm 6.2, AMD cites performance improvements of 2.4x on inference and 1.8x on training for a variety of LLMs. This reflects AMD’s holistic approach: hardware advances are matched by equally substantial software work.

In a tech landscape where AI proliferation is the name of the game, AMD’s steady pace of releases keeps it at the forefront of the industry. With its Instinct GPU accelerators, AMD is poised not only to challenge the competition but also to set a new standard for AI computing. As the company continues to refine its hardware and software ecosystem, expect further developments in machine learning, deep learning, and beyond.