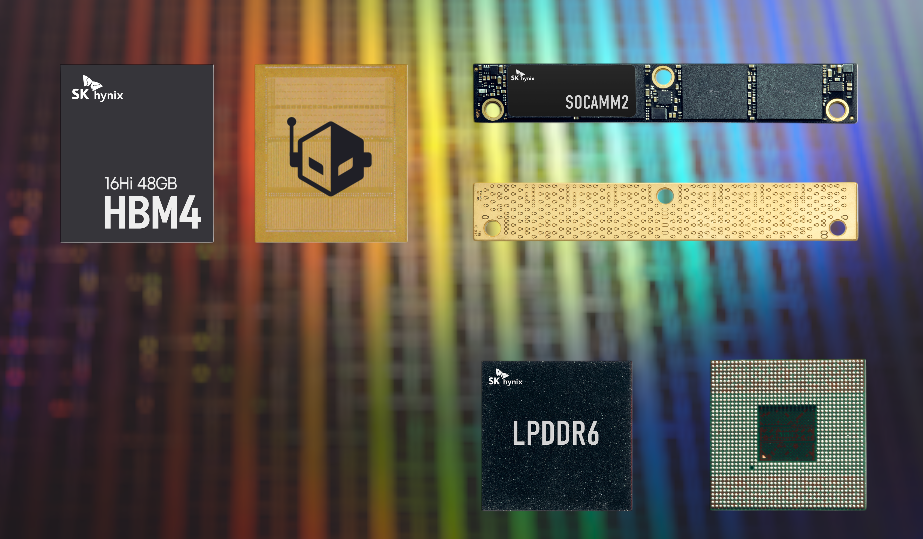

SK hynix is heading into CES 2026 with a clear message: the next wave of AI computing will need faster, denser, and more power-efficient memory across the entire stack, from massive AI servers to on-device AI. The company says it will host a customer exhibition booth at the Venetian Expo in Las Vegas from January 6 to 9, where it plans to spotlight its newest AI-focused memory technologies, including a 48GB HBM4 solution, SOCAMM2 modules, LPDDR6, and high-capacity NAND aimed at data centers.

One of the biggest highlights is SK hynix’s 16-layer HBM4, which delivers 48GB per stack. The company describes it as its next-generation HBM product and notes that CES marks the first time it is showcasing the 48GB 16-layer HBM4. It is the follow-up to the previously demonstrated 12-layer HBM4 36GB design, which reached an 11.7Gbps speed, and it is under development in line with customers’ schedules. For AI hardware buyers and platform designers, that combination of higher capacity per stack and aggressive speed targets is a key indicator of where next-generation accelerators and servers are headed.
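The capacity and speed figures above can be sanity-checked with a little arithmetic. The sketch below is a back-of-the-envelope calculation, not an SK hynix specification: it assumes the 2048-bit per-stack interface of the JEDEC HBM4 standard and reuses the 11.7Gbps figure quoted for the 12-layer part. The function names are hypothetical.

```python
# Back-of-the-envelope HBM4 figures from the numbers quoted in the article.
# Assumptions: a 2048-bit interface per stack (JEDEC HBM4), and the 11.7 Gbps
# per-pin rate quoted for the 12-layer 36GB part. Not vendor specifications.

HBM4_INTERFACE_BITS = 2048  # assumed bus width per stack, in bits


def per_die_capacity_gb(stack_gb: float, layers: int) -> float:
    """Capacity contributed by each stacked DRAM die, in GB."""
    return stack_gb / layers


def stack_bandwidth_gbps(pin_rate_gbps: float, bus_bits: int = HBM4_INTERFACE_BITS) -> float:
    """Theoretical peak per-stack bandwidth in GB/s: pin rate x bus width / 8 bits per byte."""
    return pin_rate_gbps * bus_bits / 8


if __name__ == "__main__":
    for name, stack_gb, layers in [("HBM4 12-layer", 36, 12), ("HBM4 16-layer", 48, 16)]:
        print(f"{name}: {per_die_capacity_gb(stack_gb, layers):.1f} GB per die")
    print(f"Peak bandwidth at 11.7 Gbps: {stack_bandwidth_gbps(11.7):.1f} GB/s per stack")
```

Both stacks work out to 3GB (24Gb) per DRAM die, which suggests the step from 36GB to 48GB comes from stacking four more dies of the same density. Under the stated interface assumption, 11.7Gbps per pin corresponds to roughly 3TB/s of peak bandwidth per stack.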

SK hynix also plans to present its 12-layer HBM3E 36GB product, which it expects to help drive the market this year. To make the real-world impact easier to understand, the company says it will exhibit, together with customers, GPU modules for AI servers built with HBM3E, demonstrating how the memory fits into complete AI system designs.

HBM isn’t the only piece of the puzzle. With AI servers expanding rapidly and power budgets becoming a major constraint, SK hynix will also show SOCAMM2, a low-power memory module designed specifically for AI servers. The point is that AI infrastructure needs more than top-end bandwidth: it also needs flexible module options that help data centers scale while keeping energy use under control.

On the consumer and edge side, SK hynix says it will showcase LPDDR6, positioning it as a memory technology optimized for on-device AI. The company emphasizes improvements in data processing speed and power efficiency versus prior generations, a critical combination for devices that need to run AI features locally without draining battery life or relying on constant cloud connectivity.

Storage is also part of SK hynix’s CES 2026 AI story. The company will present a 321-layer 2Tb QLC NAND product designed for ultra-high-capacity enterprise SSDs, targeting the continued surge in AI data center demand. SK hynix says this NAND offers industry-leading integration and delivers better power efficiency and performance than previous-generation QLC products. That is an important advantage in AI environments, where storage density matters and lower power consumption can significantly reduce operating costs.
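To put the 2Tb die density in storage terms, here is a minimal sketch of how many dies a drive needs for a given raw capacity. It assumes the binary convention common for NAND (2Tb = 2,048Gb = 256GB) and ignores spare area, overprovisioning, and controller overhead. The 64TB target and the 1Tb comparison die are hypothetical values chosen for illustration, not SK hynix product figures.

```python
import math

# Assumption: "2Tb" uses the binary convention, i.e. 2 x 1024 Gb per die.
DIE_GBIT = 2 * 1024
BITS_PER_BYTE = 8


def die_capacity_gb(die_gbit: int = DIE_GBIT) -> int:
    """Raw capacity of one NAND die in (binary) GB."""
    return die_gbit // BITS_PER_BYTE


def dies_needed(raw_capacity_gb: int, die_gbit: int = DIE_GBIT) -> int:
    """Dies required to reach a raw capacity, ignoring spare area and overprovisioning."""
    return math.ceil(raw_capacity_gb / die_capacity_gb(die_gbit))


if __name__ == "__main__":
    target_gb = 64 * 1024  # hypothetical 64TB (binary) raw capacity
    print(die_capacity_gb())                       # 256
    print(dies_needed(target_gb))                  # 256
    print(dies_needed(target_gb, die_gbit=1024))   # 512 with a hypothetical 1Tb die
```

Doubling per-die density halves the die count for the same raw capacity, which is one reason denser QLC dies make ultra-high-capacity enterprise SSDs easier to build within a fixed package and power envelope.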

Rounding out the showcase is custom HBM, or cHBM. Reflecting growing customer interest, SK hynix says it has prepared a large-scale mock-up so visitors can see the structure more clearly. The idea behind cHBM points to a broader shift in AI hardware priorities: competition is no longer only about raw performance but also about inference efficiency and cost optimization. SK hynix describes cHBM as a new design approach that integrates into HBM some of the computation and control functions traditionally handled by GPUs or ASICs, suggesting that future AI platforms may increasingly blend memory and logic to reduce bottlenecks and boost efficiency.

Taken together, SK hynix’s CES 2026 lineup paints a picture of where AI platforms are going next: higher-capacity HBM for accelerator-class performance, server-focused low-power modules for scalable deployments, LPDDR6 for efficient on-device AI, and denser NAND for the storage-intensive reality of modern AI data centers.