Rambus has unveiled its new LPDDR5X SOCAMM2 memory chipset, a move that signals where next-generation AI data centers are headed: smaller, more power-efficient memory that still delivers the performance and serviceability modern server platforms demand.

SOCAMM2, short for Small Outline Compression Attached Memory Module, is built to enable low-power, high-performance LPDDR5X-based memory modules for AI server platforms. In simple terms, it brings LPDDR-class efficiency into a modular, server-friendly format, helping data center operators balance growing compute needs with tighter power and thermal budgets.

This SOCAMM2 chipset launch is also positioned as the first step in a broader roadmap from Rambus focused on LPDDR-based server module solutions. It expands the company’s lineup of memory interface chipsets designed for industry-standard memory modules, aligning with JEDEC specifications for both DDR5 and LPDDR5 ecosystems. The bigger story here is that AI is rapidly diversifying data center workloads, and that shift is forcing server designers to rethink memory around power efficiency, density, scalability, and board space.

SOCAMM2 modules are emerging as a compelling answer to those pressures. Instead of relying on soldered LPDDR memory, SOCAMM2 is designed around detachable, upgradable modules. That change matters in data centers, where serviceability and replacement cycles are a core part of fleet operations. Operators get the efficiency benefits of LPDDR technology while keeping the practical advantages of modular server memory.

Rambus says its LPDDR5X SOCAMM2 chipset is designed to provide the critical control, telemetry, and power delivery functions required by JEDEC-standard SOCAMM2 modules in demanding AI server environments. The goal is to make this new module type viable at scale, not just technically possible.

Industry voices are already pointing to SOCAMM2 as a meaningful step for future AI system designs. Micron’s Praveen Vaidyanathan, vice president and general manager of Cloud Memory Products, described SOCAMM2 as an important advance toward efficient, scalable, CPU-connected memory for the next wave of AI servers, emphasizing the need for a strong ecosystem around LPDDR-class server memory as AI pushes compute and power limits. IDC’s Soo Kyoum Kim, associate VP for memory semiconductors, also framed SOCAMM2-style memory architectures as a key evolution in balancing performance with efficiency as data centers run into bandwidth, density, and energy constraints.

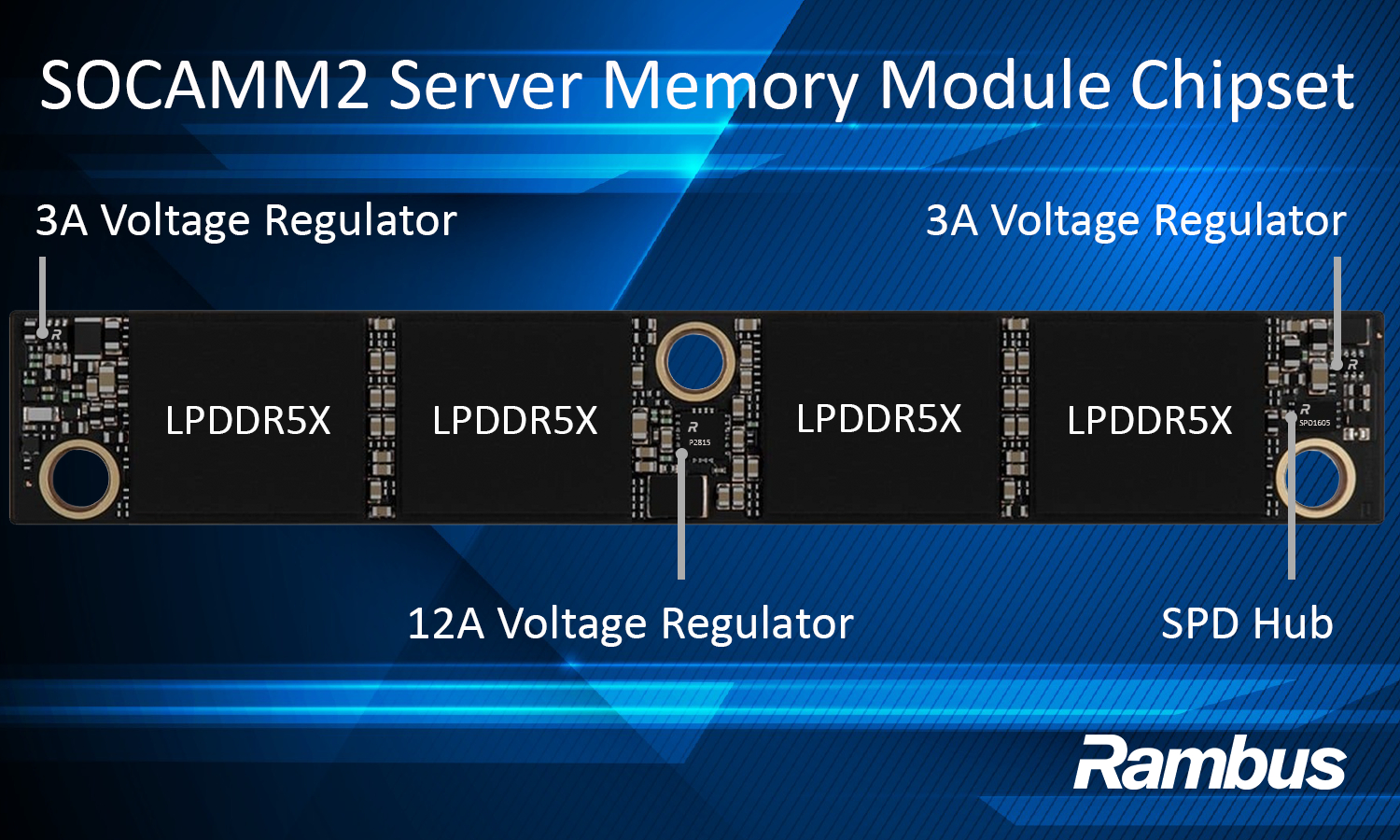

On the technical side, Rambus states the SOCAMM2 chipset supports reliable, power-efficient operation of LPDDR-based server memory modules at data rates up to 9.6 Gb/s. Key features called out include integrated voltage regulation for localized power conversion, with 12-amp and 3-amp voltage regulators, along with an SPD Hub used for module identification, configuration, and telemetry.
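
To put the 9.6 Gb/s figure in context, here is a rough back-of-the-envelope sketch of theoretical peak module bandwidth. It assumes the quoted rate applies per data pin and uses a hypothetical 128-bit module data bus. Neither detail is stated in the announcement, so treat the result as illustrative, not as a Rambus or JEDEC specification.

```python
def peak_bandwidth_gbytes_per_s(data_rate_gbps_per_pin: float, bus_width_bits: int) -> float:
    """Return theoretical peak bandwidth in GB/s.

    Multiplies the per-pin data rate (Gb/s) by the number of data pins,
    then divides by 8 to convert gigabits to gigabytes. Real-world
    throughput is lower because of refresh, command overhead, and so on.
    """
    return data_rate_gbps_per_pin * bus_width_bits / 8


# Data rate quoted in the article.
DATA_RATE_GBPS = 9.6

# ASSUMPTION: a 128-bit data bus per module; not stated in the article.
ASSUMED_BUS_WIDTH_BITS = 128

if __name__ == "__main__":
    bandwidth = peak_bandwidth_gbytes_per_s(DATA_RATE_GBPS, ASSUMED_BUS_WIDTH_BITS)
    print(f"Theoretical peak: {bandwidth:.1f} GB/s per module")
```

Under those assumptions the theoretical peak works out to 153.6 GB/s per module. A different bus width scales the result linearly.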

For AI data centers hunting for better performance per watt without giving up practical serviceability, SOCAMM2 is shaping up to be one of the more important memory form-factor shifts to watch. Rambus’ chipset is intended to be foundational plumbing that helps make LPDDR5X SOCAMM2 modules a real, deployable option in future AI server platforms.