Longsys fires the starting gun in the next phase of AI memory. The China-based memory specialist has rolled out enterprise SOCAMM2 modules built on LPDDR5/LPDDR5X, positioning itself to capture the first wave of demand from next‑generation AI servers. Industry reports also indicate that Nvidia has decided to bypass SOCAMM1 entirely and align with the SOCAMM2 standard, a move that accelerates momentum for this form factor. Memory heavyweights Samsung, SK Hynix, and Micron are said to be in the midst of sample qualification, signaling that the broader ecosystem is quickly coalescing around the new spec.

Why SOCAMM2 matters right now

The AI data center is starved for memory bandwidth, capacity, and efficiency. SOCAMM2 addresses these pain points by pairing a compact, high-density module design with the proven power efficiency of LPDDR5 and LPDDR5X. That combination is especially attractive for AI accelerators and servers where every watt, millimeter, and gigabyte per second counts. Inference and training workloads are increasingly limited by how fast data can move to and from compute cores; SOCAMM2’s promise is to unlock higher throughput without blowing out power budgets or board space.

A fast-forming ecosystem

Any new memory standard needs momentum at both the platform and component levels. Nvidia's reported decision to skip the first-generation spec and go straight to SOCAMM2 sends a strong signal to server OEMs and cloud providers about where the market is headed. Meanwhile, with Samsung, SK Hynix, and Micron said to be in sample qualification, supply looks set to ramp, reducing risk for early adopters and paving the way for broader commercialization.

What Longsys brings to the table

By introducing enterprise-grade SOCAMM2 modules now, Longsys is staking out an early-mover advantage. The company’s focus on LPDDR5/5X aligns with data center priorities:

– High bandwidth to feed AI accelerators efficiently

– Lower power consumption per bit transferred versus traditional server memory

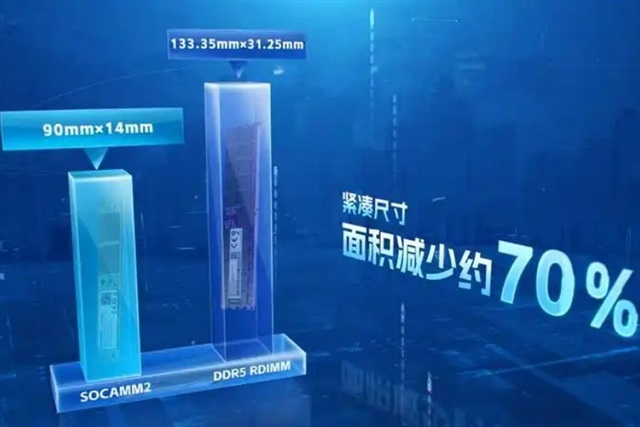

– Slimmer module footprints that enable denser system designs

If these modules deliver the expected gains in performance-per-watt and capacity-per-socket, they could become a go-to choice for AI inference nodes, edge AI servers, and large-scale training clusters alike.

What to watch next

– Performance and capacity tiers: Concrete specs on bandwidth, capacities, and speed grades will determine where SOCAMM2 fits across training vs. inference deployments.

– Thermals and reliability: How vendors handle cooling, error correction, and sustained throughput will be key for mission-critical AI workloads.

– Platform adoption: Broader support from server OEMs and hyperscalers will indicate how quickly SOCAMM2 becomes a mainstream option in data centers.

– Supply chain maturity: As leading DRAM makers complete qualification, availability and pricing should stabilize, speeding up deployments.
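To make the "performance and capacity tiers" point concrete, here is a rough sketch of how a speed grade translates into theoretical peak bandwidth: data rate multiplied by bus width. The 6400 and 8533 MT/s figures are widely cited LPDDR5 and LPDDR5X speed grades. The 128-bit module width is an assumption for illustration only, not a confirmed Longsys specification, and sustained real-world throughput will be lower than these peaks.

```python
def peak_bandwidth_gbps(data_rate_mtps: float, bus_width_bits: int) -> float:
    """Theoretical peak bandwidth in GB/s (decimal gigabytes).

    data_rate_mtps: transfers per second, in millions (MT/s)
    bus_width_bits: data bus width of the module, in bits
    """
    bytes_per_transfer = bus_width_bits / 8
    return data_rate_mtps * 1e6 * bytes_per_transfer / 1e9


# Assumption: a 128-bit module interface, used here purely for illustration.
ASSUMED_BUS_WIDTH_BITS = 128

# Illustrative speed grades; actual SOCAMM2 product grades may differ.
speed_grades = {
    "LPDDR5 @ 6400 MT/s": 6400,
    "LPDDR5X @ 8533 MT/s": 8533,
}

for label, rate in speed_grades.items():
    bw = peak_bandwidth_gbps(rate, ASSUMED_BUS_WIDTH_BITS)
    print(f"{label}: {bw:.1f} GB/s peak per module")
```

Under these assumptions the two grades work out to roughly 102 GB/s and 137 GB/s per module, which shows why published speed grades and bus widths matter so much when placing SOCAMM2 in training versus inference systems.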

The bottom line

The AI memory market is taking shape quickly. With Longsys rolling out enterprise SOCAMM2 modules based on LPDDR5/5X, Nvidia reportedly aligning directly with the SOCAMM2 standard, and top DRAM vendors said to be advancing through sample qualification, the pieces are falling into place for a rapid shift in how AI servers are configured. Expect SOCAMM2 to gain traction as data centers chase higher bandwidth, tighter power envelopes, and denser form factors to keep up with the surging demands of modern AI workloads.