Elon Musk is betting that the future of artificial intelligence computing may be off-planet. The Tesla CEO has argued that today’s biggest barrier to scaling AI isn’t talent, software, or even demand—it’s electricity. In his view, Earth’s power generation and grid distribution limits could slow the AI boom, pushing companies to look for radically different ways to power massive data center expansions.

Musk’s core point is simple: AI data centers are becoming so power-hungry that the grid may not keep up. As hyperscalers race to build out AI infrastructure, many analysts have warned that the industry could hit a “dot-com moment” for compute—where the ambition to deploy more servers collides with the reality of limited energy supply. If that happens, training and operating advanced AI models could become dramatically more expensive, putting pressure on the economics of the entire AI buildout.

He frames the challenge with a striking comparison. The United States, he says, averages about half a terawatt of electricity consumption. Now imagine AI pushing total requirements toward the terawatt scale, which would effectively ask the country to double its typical power use just to feed new compute demand. Musk’s argument is that building enough new data centers and enough new power plants on the ground fast enough is not a practical long-term plan.
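
To put that comparison in annual terms, here is a rough back-of-the-envelope sketch. The half-terawatt average is the figure Musk cites; reading "terawatt-scale" as a total of exactly 1 TW is an assumption made only for illustration, as are the function and variable names.

```python
# Back-of-the-envelope conversion from average power (TW) to annual energy (TWh).
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_twh(average_power_tw: float) -> float:
    """Convert a constant average power draw in TW to energy per year in TWh."""
    return average_power_tw * HOURS_PER_YEAR


us_average_tw = 0.5  # average U.S. consumption as cited by Musk
target_tw = 1.0      # ASSUMPTION: "terawatt-scale" read as 1 TW total

baseline_twh = annual_energy_twh(us_average_tw)
target_twh = annual_energy_twh(target_tw)
growth_factor = target_tw / us_average_tw

print(f"Baseline: {baseline_twh:,.0f} TWh per year")
print(f"Target:   {target_twh:,.0f} TWh per year")
print(f"Growth:   {growth_factor:.1f}x")
```

Under these assumptions, half a terawatt sustained for a year works out to about 4,380 TWh, and a full terawatt to about 8,760 TWh, which is the doubling the comparison describes.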

The numbers being discussed by energy researchers reinforce why power is suddenly central to the AI conversation. Forecasts suggest data center electricity demand is set to rise sharply in the coming years, with estimates pointing to a meaningful increase in overall usage within the next four years. Looking toward 2030, projections indicate data centers could take a sizable share of total U.S. electricity generation. Even if the exact percentages vary by study, the trend is clear: AI infrastructure growth is increasingly constrained by access to reliable, affordable power.

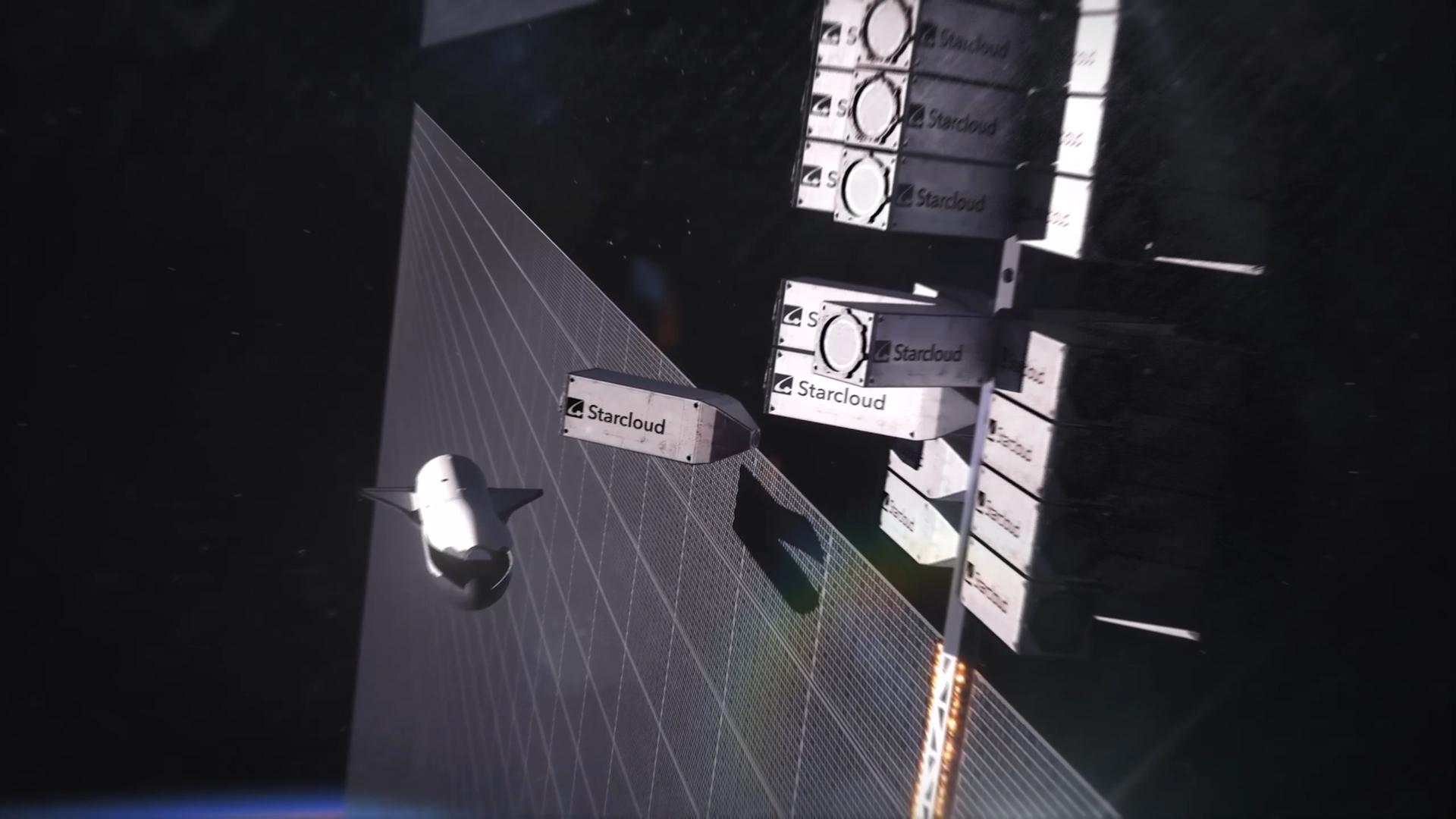

Musk’s proposed solution sounds like science fiction but comes from a very “systems engineering” mindset: put data centers in orbit. In space, vast solar energy could be harvested without many of the land, permitting, and transmission bottlenecks that limit energy expansion on Earth. He believes that once the logistics are solved—getting hardware to orbit, deploying it at scale, and keeping it connected—the economic case could tilt toward space-based AI computing within roughly 30 to 36 months.

He also claims SpaceX already has key pieces that could make orbital data centers possible. Starship would serve as the heavy-lift transportation system to move equipment into orbit in large quantities, while Starlink would provide the networking backbone to connect orbital compute to customers and services on the ground. If that infrastructure matures the way Musk expects, he suggests the limiting factor in space won’t be electricity—it will be chips.

That leads to his second concern: semiconductor supply. Musk says that even now, chip availability and delivery timelines are tight, and that scaling AI fast enough will put even more strain on manufacturing capacity. He notes efforts to work with major fabrication partners across multiple regions, including facilities in Taiwan, South Korea, and the United States, Texas among them. But in his view, current capacity expansion isn’t happening quickly enough. This is where he has floated the idea of building a “TeraFab,” a massive new chip manufacturing initiative aimed at meeting the coming surge in demand.

The concept of data centers in space isn’t completely new. There have already been early experiments with high-end AI hardware operating beyond Earth, showing that it’s technically possible to run advanced compute in orbit. The bigger leap is scale. Moving from a one-off demonstration to multi-gigawatt orbital data centers would be a massive engineering and economic challenge, raising questions about maintenance, cooling, radiation, hardware replacement cycles, launch cadence, debris risk, and overall cost per computation.

Still, Musk is positioning orbital computing as both a practical answer to Earth’s energy bottlenecks and a strategic stepping stone toward broader space ambitions. He’s even described it as a “side quest” that could accelerate the industrial capabilities needed for Mars—while also opening up a new business frontier for SpaceX.

Whether space-based AI data centers become a near-term reality or remain a long-range vision, the underlying issue Musk highlights is already reshaping the AI industry: power is becoming just as important as performance. As AI models grow and the appetite for compute explodes, the race won’t only be about faster chips—it will be about who can secure the energy to run them.