NVIDIA is gearing up for its next generation of AI hardware: the upcoming Rubin and Rubin Ultra GPUs and the next-gen Vera CPUs. The new platforms are slated for 2026 and 2027 and promise a large step up in AI compute performance and efficiency.

Following this year's Blackwell Ultra platform, which offers up to 288 GB of HBM3e memory, NVIDIA is poised to take another leap with its Vera Rubin system. Expected to roll out in the second half of 2026, the Vera Rubin platform will scale existing NVL72 solutions to NVL144 and feature new cooling systems.

The Vera Rubin NVL144 platform will debut with the Rubin GPU, which is built from two reticle-sized dies. Each Rubin GPU delivers up to 50 PFLOPS of FP4 performance and carries 288 GB of HBM4 memory. Complementing it is an 88-core Vera CPU with a custom Arm architecture, capable of handling 176 threads and connected over a 1.8 TB/s NVLink-C2C interconnect.

The impact shows up at the rack level: the NVIDIA Vera Rubin NVL144 platform is set to deliver 3.6 exaflops of FP4 inference and 1.2 exaflops of FP8 training. This marks a 3.3-fold improvement over the GB300 NVL72, alongside 13 TB/s of HBM4 memory bandwidth and a 60% uplift in fast memory capacity. Additionally, NVLink and CX9 bandwidth will both double, reaching up to 260 TB/s and 28.8 TB/s, respectively.
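The rack-level FP4 figure can be cross-checked against the per-GPU number quoted above. The following is a minimal sketch, assuming that the "144" in NVL144 counts GPU dies rather than packages (so the rack holds 72 two-die Rubin GPUs) and that the 50 PFLOPS figure applies to one two-die GPU. Neither assumption is stated in this article, and the variable names are purely illustrative.

```python
# Cross-check of the Vera Rubin NVL144 FP4 figure.
# ASSUMPTION (not stated in the article): "144" counts GPU dies,
# with two dies per Rubin GPU, and 50 PFLOPS is per two-die GPU.
DIES_IN_RACK = 144
DIES_PER_GPU = 2
FP4_PFLOPS_PER_GPU = 50

gpus_in_rack = DIES_IN_RACK // DIES_PER_GPU
# 1 exaflops = 1000 PFLOPS
rack_fp4_exaflops = gpus_in_rack * FP4_PFLOPS_PER_GPU / 1000

# Rack-level figures quoted in the article.
quoted_fp4_exaflops = 3.6
quoted_fp8_exaflops = 1.2
fp4_to_fp8_ratio = quoted_fp4_exaflops / quoted_fp8_exaflops

print(f"{gpus_in_rack} GPUs -> {rack_fp4_exaflops:.1f} exaflops FP4")
print(f"FP4 inference / FP8 training = {fp4_to_fp8_ratio:.1f}x")
```

Under these assumptions the per-GPU and rack-level numbers agree: 72 GPUs at 50 PFLOPS each give the quoted 3.6 exaflops, and the quoted FP4 inference figure is three times the FP8 training figure.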

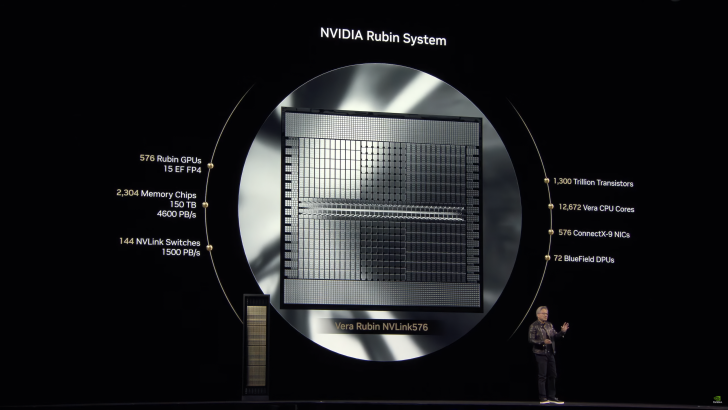

But the roadmap doesn't stop there. In the second half of 2027, NVIDIA plans to introduce the Rubin Ultra platform, which will scale the rack from NVL144 to NVL576 while keeping the same Vera CPU architecture. The Rubin Ultra GPU itself will move to four reticle-sized dies, yielding up to 100 PFLOPS of FP4 performance and 1 TB of HBM4e memory distributed across 16 sites.

The Rubin Ultra NVL576 platform is set to deliver 15 exaflops of FP4 inference and 5 exaflops of FP8 training. These figures represent a 14-fold increase compared to the GB300 NVL72, alongside 4.6 PB/s of HBM4e memory bandwidth and 365 TB of fast memory, an 8-fold uplift over previous iterations. Furthermore, NVLink and CX9 bandwidth will rise to 1.5 PB/s and 115.2 TB/s, respectively.
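The Rubin Ultra numbers can be sanity-checked with simple arithmetic. This is a rough sketch, assuming that "576" counts GPU dies with four dies per Rubin Ultra GPU, and that the 100 PFLOPS and 1 TB figures apply to one four-die GPU. These are assumptions, not statements from the article. The sketch also derives the GB300 NVL72 FP4 baseline implied by each of the two multipliers the article quotes (3.3x for 3.6 exaflops, 14x for 15 exaflops).

```python
# Sanity check of the Rubin Ultra NVL576 figures.
# ASSUMPTION (not stated in the article): "576" counts GPU dies,
# four per Rubin Ultra GPU; per-GPU figures are per four-die GPU.
DIES_IN_RACK = 576
DIES_PER_GPU = 4
FP4_PFLOPS_PER_GPU = 100
HBM4E_TB_PER_GPU = 1

gpus_in_rack = DIES_IN_RACK // DIES_PER_GPU
rack_fp4_exaflops = gpus_in_rack * FP4_PFLOPS_PER_GPU / 1000  # 1000 PFLOPS per exaflops
rack_hbm4e_tb = gpus_in_rack * HBM4E_TB_PER_GPU

# GB300 NVL72 FP4 baseline implied by each quoted multiplier.
baseline_from_rubin = 3.6 / 3.3  # Vera Rubin NVL144: 3.6 exaflops, 3.3x
baseline_from_ultra = 15 / 14    # Rubin Ultra NVL576: 15 exaflops, 14x

print(f"{gpus_in_rack} GPUs -> {rack_fp4_exaflops:.1f} exaflops FP4, "
      f"{rack_hbm4e_tb} TB HBM4e")
print(f"Implied GB300 NVL72 baseline: {baseline_from_rubin:.2f} vs "
      f"{baseline_from_ultra:.2f} exaflops")
```

Under these assumptions the per-GPU figure gives 14.4 exaflops, slightly below the quoted 15 exaflops, which suggests one of the two numbers is rounded. The two implied baselines land within a few percent of each other, so the quoted multipliers are mutually consistent. The 144 TB of HBM4e accounts for less than half of the 365 TB of fast memory; the article does not say where the remainder comes from.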

NVIDIA's roadmap sets out a steep climb in AI compute, memory, and interconnect bandwidth over the next two years, and it is likely to shape how large-scale AI systems are built.