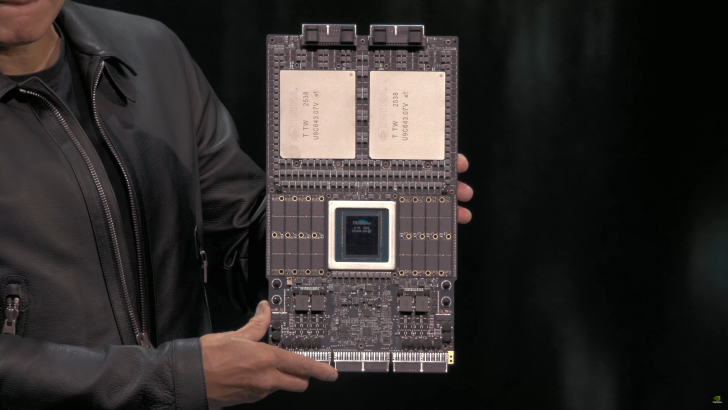

NVIDIA just pulled back the curtain on its next major AI platform, debuting the Vera Rubin Superchip at GTC in Washington. It’s the first public look at a working motherboard that pairs a Vera CPU with two massive Rubin GPUs and a sea of on-board memory—an early preview of the hardware expected to drive the next wave of large-scale AI.

Early silicon is already in the lab. Rubin GPU samples have returned from TSMC, marking a key milestone on the road to volume production. NVIDIA’s rollout plan targets mass production around Q3–Q4 2026, overlapping with the ongoing ramp of Blackwell Ultra GB300 systems.

What NVIDIA showed

– A full Vera Rubin Superchip motherboard hosting a Vera CPU and two Rubin GPUs

– 32 LPDDR system memory sites on the board, complemented by HBM4 residing on the GPUs

– Each Rubin GPU built from two reticle-sized dies with eight HBM4 sites

– Vera CPU featuring 88 custom Arm cores and 176 threads

– NVLINK-C2C interconnect up to 1.8 TB/s between components

Vera Rubin NVL144: targeting 2H 2026

This first-generation system centers on the Rubin GPU and Vera CPU.

– Rubin GPU: two reticle-sized GPU dies, up to 50 PFLOPs of FP4 performance, and 288 GB of next-gen HBM4

– Vera CPU: 88 custom Arm cores, 176 threads

– Interconnect: up to 1.8 TB/s via NVLINK-C2C

Platform-level performance and scaling for NVL144:

– Up to 3.6 exaflops of FP4 inference

– Up to 1.2 exaflops of FP8 training

– 3.3x the performance of GB300 NVL72

– 13 TB/s of HBM4 bandwidth and 75 TB of fast memory

– 2x the NVLINK and CX9 capabilities of GB300, rated up to 260 TB/s (NVLINK) and 28.8 TB/s (CX9)

– Estimated launch window: second half of 2026

Rubin Ultra NVL576: targeting 2H 2027

The second platform scales everything up significantly.

– Rubin Ultra GPU: four reticle-sized chips, up to 100 PFLOPs of FP4 performance, and 1 TB of HBM4e across 16 HBM sites

– Same CPU architecture foundation as Vera

– Dramatically expanded interconnect and memory

Platform-level performance and scaling for NVL576:

– Up to 15 exaflops of FP4 inference

– Up to 5 exaflops of FP8 training

– 14x the performance of GB300 NVL72

– 4.6 PB/s of HBM4 bandwidth and 365 TB of fast memory

– 12x the NVLINK and 8x the CX9 capabilities of GB300, rated up to 1.5 PB/s (NVLINK) and 115.2 TB/s (CX9)

– Estimated launch window: second half of 2027

How it fits the data center roadmap

NVIDIA’s data center GPU roadmap continues its rapid cadence: Blackwell and Blackwell Ultra are rolling out through 2024–2025, followed by Rubin-based systems in 2026 and Rubin Ultra in 2027. A subsequent generation, codenamed Feynman, is slated for 2028, with memory technologies progressing from HBM3e to HBM4 and HBM4e, and potentially beyond.

Why it matters

With Vera Rubin, NVIDIA is aiming to push exaflop-class inference and multi-exaflop training into mainstream deployment windows, while massively increasing memory bandwidth and fabric throughput. For organizations building and serving frontier-scale AI models, these systems promise higher density, faster time to train, and greater inference efficiency—exactly the ingredients needed to sustain the next leap in generative AI and high-performance computing.