NVIDIA Rubin GPUs enter production as HBM4 samples land from every major supplier

NVIDIA’s next-generation Rubin platform is moving faster than expected. The company has begun producing Rubin GPUs and has received HBM4 memory samples from all major suppliers. These two milestones set the stage for what is shaping up to be the most advanced AI accelerator lineup of 2026.

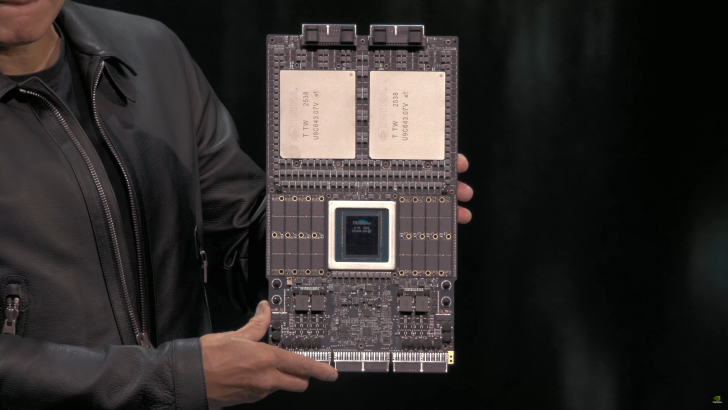

The momentum follows NVIDIA’s first public reveal of the Vera Rubin Superchip at GTC 2025 in Washington, D.C. The design pairs two massive Rubin GPUs with the next-generation Vera CPU, surrounded by banks of LPDDR memory, laying the groundwork for a new era of AI compute in hyperscale data centers.

During a recent visit to Taiwan to meet with TSMC, Jensen Huang noted that Rubin has already entered production, just days after confirming that the first Rubin silicon had reached NVIDIA’s labs. That rapid shift from initial silicon to production shows how aggressively NVIDIA and its partners are moving to meet surging AI demand.

Demand for NVIDIA’s current Blackwell and Blackwell Ultra GPUs remains overwhelming, and it extends beyond accelerators: the company also builds CPUs, networking chips, and switches for the same ecosystem. To keep up, TSMC is boosting its 3nm output by roughly 50%, with NVIDIA requesting significantly more wafers and finished parts. Exact figures remain under wraps, but the order volume is clearly very large.

On the memory front, NVIDIA has already obtained HBM4 samples from all of the major suppliers. Diversifying DRAM sources has long been part of the company’s strategy, and after recent industry-wide shortages, qualifying every major manufacturer is a prudent move ahead of Rubin’s ramp.

NVIDIA has previously indicated that Rubin should reach mass production around Q3 2026, or earlier if everything goes to plan. Entering production is not the same as reaching mass production, the high-volume stage that feeds large-scale customer rollouts, but the timeline is tightening. Rubin is also at the center of a reported $100 billion partnership with OpenAI, which plans to use these next-generation accelerators in future data center deployments.

What this means for the AI compute landscape:

– Rubin is progressing on schedule, with silicon already on the production line and early HBM4 validation underway.

– TSMC’s expanded 3nm capacity is a strong signal of industry-wide confidence in NVIDIA’s roadmap beyond Blackwell.

– A diversified HBM4 supply chain should help mitigate memory bottlenecks as deployments scale in 2026.

– The Vera Rubin Superchip architecture—dual GPUs plus the Vera CPU—targets massive throughput and efficiency for training and inference at cloud scale.

Key takeaways

– Rubin GPUs have entered production and are on track for mass production around Q3 2026.

– HBM4 samples are in NVIDIA’s hands from all major suppliers, reducing memory risk.

– TSMC is lifting 3nm output by about 50% to support surging demand and the Rubin ramp.

– Blackwell and Blackwell Ultra demand remains extremely strong as NVIDIA expands its full-stack portfolio of GPUs, CPUs, networking, and switches.

– Rubin is poised to power next-generation AI data centers, including large-scale deployments planned by major partners like OpenAI.