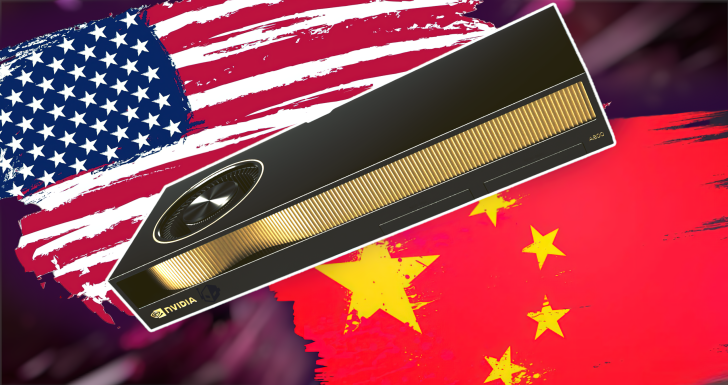

NVIDIA’s next export-compliant AI accelerator for China, reportedly called the B30A, is expected to pair the Blackwell architecture with HBM3E memory and a dual-die chiplet design, a combination that should deliver meaningful performance gains over the current H20.

Analysts indicate the B30A will retain several familiar elements to stay within regulatory limits, including 8-Hi HBM3E, TSMC’s N4P manufacturing process, and NVLink interconnect support rated at 900 GB/s. The real change is in the packaging: the B30A is expected to move from the H20’s monolithic die to a dual-die chiplet layout, bringing it in line with the design of NVIDIA’s higher-end Blackwell parts such as the B200 and B300.

Early guidance suggests the B30A targets roughly half the core-level scale of the B300, with a memory configuration of around 141 GB of HBM3E (8-Hi). Even with similar process technology and interconnect bandwidth, the move to a Blackwell-based dual-die design should bring a substantial architectural uplift for training and inference workloads, improving utilization, efficiency, and overall throughput compared with Hopper-derived export models.

This approach signals NVIDIA’s strategy for China: preserve compatibility and ease of deployment through known components like HBM3E and NVLink, while using Blackwell’s architecture and chiplet packaging to raise real-world performance without breaching compliance thresholds. For Chinese hyperscalers and AI startups racing to build and scale large language models and generative AI services, that combination could be compelling, especially as domestic vendors work to replace foreign tech stacks and compete on price and performance.

Timing will be key. The B30A is reportedly being discussed for a debut as early as Q4 2025, pending regulatory clearance. With export rules still evolving, quick approval will be critical if NVIDIA is to defend its share in a market where alternatives are gaining traction.

What to watch next:

– Finalized B30A specs and how closely they mirror half-scale B300 configurations

– Real-world bandwidth and efficiency figures for HBM3E and 900 GB/s NVLink

– Software stack compatibility and migration paths from H20-based deployments

– Regulatory milestones that could pull the timeline forward or push it back

Bottom line: If the B30A lands as projected, it could become the go-to Blackwell option for China, maintaining compliance while delivering meaningful architectural gains over the H20 through a dual-die chiplet design, HBM3E capacity, and proven N4P manufacturing. For buyers balancing policy constraints with the need for faster training and inference, it looks like the product NVIDIA needs to stay competitive.