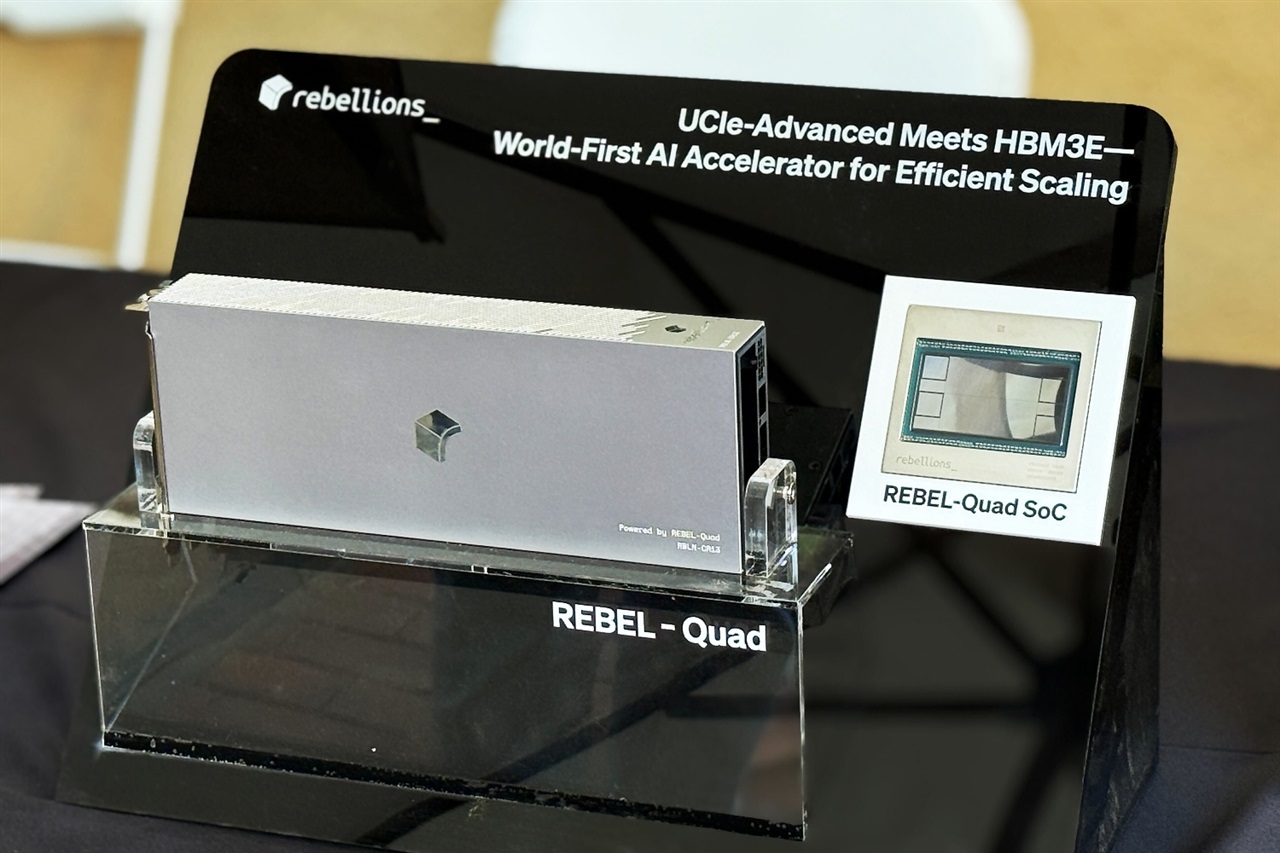

Rebellions has unveiled its REBEL-Quad AI accelerator at Hot Chips 2025, taking aim at Nvidia’s Blackwell-class dominance. The South Korea-based company introduced its next-generation processor in California, positioning it as a direct alternative to incumbent GPUs for hyperscale data centers hungry for more efficient, scalable generative AI compute.

Billed as a first-of-its-kind neural processing unit, REBEL-Quad is designed to push beyond conventional GPU-centric architectures. The company is emphasizing a chiplet-based approach that promises modular scalability, improved yields, and better performance-per-watt, all key factors for training and serving large language models at cloud scale. By partitioning compute into smaller, interoperable silicon blocks, chiplet designs can allow data center operators to right-size deployments, ramp capacity faster, and potentially lower total cost of ownership.
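To see why smaller dies tend to yield better, here is a minimal sketch using the textbook Poisson yield model, in which the fraction of defect-free dies falls off exponentially with die area. Everything in it is an illustrative assumption: the function names, the 8 cm² of total silicon, the defect density of 0.1 per cm², and the four-way split are hypothetical and are not figures disclosed by Rebellions. It also assumes chiplets are tested before assembly and ignores packaging and interconnect losses.

```python
import math


def poisson_yield(die_area_cm2: float, defect_density_per_cm2: float) -> float:
    """Fraction of defect-free dies under the simple Poisson yield model."""
    return math.exp(-die_area_cm2 * defect_density_per_cm2)


def silicon_per_good_product(
    total_area_cm2: float, n_chiplets: int, defect_density_per_cm2: float
) -> float:
    """Wafer area (cm^2) consumed per working product.

    Assumes the design is split into n equal chiplets, that each chiplet is
    tested before assembly (so only good dies are packaged), and that
    packaging itself never fails. n_chiplets=1 is the monolithic case.
    """
    chiplet_area = total_area_cm2 / n_chiplets
    chiplet_yield = poisson_yield(chiplet_area, defect_density_per_cm2)
    # Each good chiplet costs chiplet_area / chiplet_yield of wafer area.
    return n_chiplets * chiplet_area / chiplet_yield


if __name__ == "__main__":
    TOTAL_AREA = 8.0       # cm^2 of compute silicon (hypothetical)
    DEFECT_DENSITY = 0.1   # defects per cm^2 (hypothetical)

    for n in (1, 4):
        area = silicon_per_good_product(TOTAL_AREA, n, DEFECT_DENSITY)
        print(f"{n} die(s): {area:.2f} cm^2 of wafer per good product")
```

Under these assumptions the four-chiplet split consumes noticeably less wafer area per working product than the monolithic die, because a single defect scraps only a quarter of the silicon. Real-world gains depend on packaging yield, die-to-die interconnect overhead, and the actual process defect density, none of which are modeled here.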

Why this matters: the generative AI boom is straining power budgets, supply chains, and datacenter footprints. Every point of efficiency and every watt saved translates to meaningful cost and sustainability gains. A viable alternative to incumbent GPU platforms could give hyperscalers more flexibility in how they build and scale clusters for both training and inference.

While detailed specifications were not disclosed here, Rebellions’ message is clear: REBEL-Quad is engineered for the heaviest AI workloads, from giant transformer models to high-throughput inference, with an eye toward consistent scaling across racks and regions. The “first-of-its-kind” positioning underscores a focus on architectural innovation rather than incremental speed gains.

Key takeaways

– REBEL-Quad debuted at Hot Chips 2025 in California as Rebellions’ next-gen AI accelerator.

– Positioned as a direct challenger to Nvidia’s Blackwell platform.

– Described as a first-of-its-kind neural processing unit leveraging a chiplet-centric design for hyperscale environments.

– Targets performance-per-watt, scalability, and rapid deployment for large AI models and high-volume inference.

– Aims to give cloud providers and enterprises another high-performance option as AI infrastructure demand accelerates.

As the AI infrastructure landscape evolves, all eyes will be on how REBEL-Quad integrates into real-world clusters, how its software stack supports popular AI frameworks, and how it measures up on cost and efficiency at scale. Rebellions has set an ambitious target; the next phase will be proving it in production.