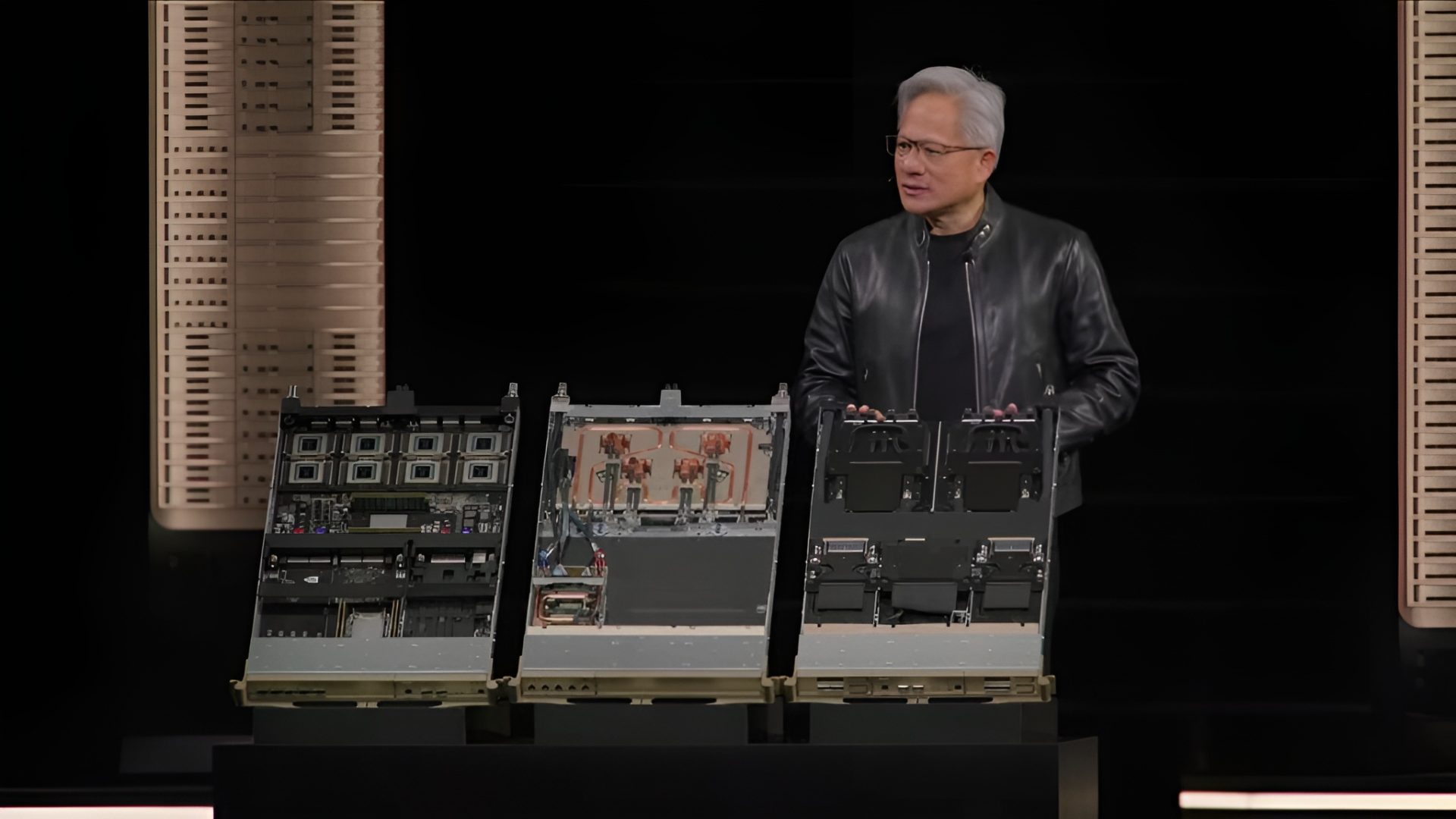

NVIDIA and Groq are no longer just a “rumored pairing” in the AI hardware world. The collaboration is now taking concrete shape: at GTC 2026, NVIDIA CEO Jensen Huang revealed a new hybrid compute tray that places Groq’s third-generation LPU technology directly inside an NVIDIA Vera Rubin rack design.

The big goal is clear: dominate high-speed AI inference, especially workloads where latency and throughput matter as much as raw compute. NVIDIA is positioning this Rubin + Groq hybrid approach as a way to close the gap in the inference race and challenge specialized rivals that have been building systems primarily for serving AI models efficiently at scale.

At the center of the announcement is the Groq 3 LPX hybrid compute tray. NVIDIA says pairing LPX with Rubin delivers a dramatic leap in inference efficiency, claiming up to a 35x increase in inference throughput per megawatt. That kind of performance-per-watt improvement goes straight to the heart of what cloud providers, enterprises, and AI platforms care about when deploying large models in production: lower cost, higher serving capacity, and faster responses under heavy demand.
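To make the metric concrete, here is a minimal sketch of what "throughput per megawatt" measures and what an up-to-35x gain would imply. The function names are illustrative, not from NVIDIA or Groq, and 35x is treated as the best-case figure quoted above, not a measured result.

```python
CLAIMED_GAIN = 35.0  # the "up to 35x" figure quoted in the article (best case)


def throughput_per_megawatt(tokens_per_second: float, power_mw: float) -> float:
    """Inference throughput normalized by power draw (tokens/s per MW)."""
    if power_mw <= 0:
        raise ValueError("power_mw must be positive")
    return tokens_per_second / power_mw


def power_for_same_throughput(baseline_power_mw: float, gain: float = CLAIMED_GAIN) -> float:
    """Power needed to serve the same token rate after a `gain`-fold efficiency improvement."""
    return baseline_power_mw / gain


def throughput_at_same_power(baseline_tokens_per_second: float, gain: float = CLAIMED_GAIN) -> float:
    """Token rate achievable within the same power budget after a `gain`-fold improvement."""
    return baseline_tokens_per_second * gain
```

Read either way, the claim is the same: at a fixed power budget the token rate would scale by the gain factor, or at a fixed token rate the power draw would shrink by it.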

The LPX tray is designed as a dense, inference-focused building block. In the rack configuration described, it scales to 256 LPUs, bringing 128GB of on-chip SRAM and 640 TB/s of scale-up bandwidth. NVIDIA’s strategy is to combine the strengths of its next-generation Rubin GPUs with Groq’s LPUs so it can accelerate both key parts of inference: prefill (processing the prompt and building context) and decode (generating tokens quickly and consistently). This approach is aimed at making NVIDIA more competitive in inference deployments where specialized architectures have had a head start.
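The two phases have different shapes, which is why they are often treated as separate optimization targets. The toy sketch below illustrates only the control flow: `KVCache`, `prefill`, and `decode` are hypothetical names, the "model" is a placeholder arithmetic rule, and nothing here reflects how Rubin or the LPX tray actually schedules work.

```python
from dataclasses import dataclass, field


@dataclass
class KVCache:
    """Toy stand-in for the per-request context built during prefill."""
    entries: list[int] = field(default_factory=list)


def prefill(prompt_tokens: list[int]) -> KVCache:
    # Prefill: the whole prompt is known up front, so all of its tokens
    # can be processed together in one batched pass.
    return KVCache(entries=list(prompt_tokens))


def decode(cache: KVCache, max_new_tokens: int) -> list[int]:
    # Decode: tokens are produced one at a time. Each step reads the
    # context built so far and appends to it, so per-step latency matters.
    generated = []
    for _ in range(max_new_tokens):
        next_token = sum(cache.entries) % 50_000  # placeholder, not a real model
        cache.entries.append(next_token)
        generated.append(next_token)
    return generated


cache = prefill([101, 2023, 2003])
output = decode(cache, max_new_tokens=4)
print(len(cache.entries), output)
```

Prefill touches every prompt token once, while decode is a sequential loop whose cost grows with the number of generated tokens; the hybrid design described above targets both.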

The Groq 3 chip specifications shared at the event help explain NVIDIA’s interest. Each Groq 3 chip is described as offering 500MB of SRAM, 150 TB/s of SRAM bandwidth, and up to 1.2 PFLOPs of FP8 compute. When combined within the Rubin + Groq LPX tray, Huang says total AI inference compute can reach as high as 315 PFLOPs, highlighting just how aggressive this hybrid platform is meant to be for real-world serving at scale.
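As a quick sanity check, the per-chip figures can be multiplied out against the 256-LPU configuration mentioned earlier. This is back-of-the-envelope arithmetic only, assuming decimal units (1 GB = 1000 MB) and that all 256 LPUs contribute equally; the variable names are illustrative.

```python
# Per-chip figures quoted in the article for Groq 3.
SRAM_PER_CHIP_MB = 500
FP8_PER_CHIP_PFLOPS = 1.2
LPUS_PER_RACK = 256  # rack-scale LPU count quoted in the article

# Assumption: decimal units, 1 GB = 1000 MB.
total_sram_gb = SRAM_PER_CHIP_MB * LPUS_PER_RACK / 1000
total_fp8_pflops = FP8_PER_CHIP_PFLOPS * LPUS_PER_RACK

print(f"Aggregate SRAM: {total_sram_gb:.0f} GB")
print(f"Aggregate FP8 compute: {total_fp8_pflops:.1f} PFLOPs")
```

The SRAM total works out to the quoted 128GB. The FP8 product comes to about 307 PFLOPs, slightly under the 315 PFLOPs headline figure; the announcement as reported does not break down how that number is derived.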

NVIDIA is also framing the platform around the next wave of model requirements: trillion-parameter AI models and extremely long context windows reaching into million-token territory. The company says the co-designed LPX architecture, paired with Vera Rubin, is engineered to maximize efficiency across power, memory, and compute. The promise is better throughput per watt and stronger token performance, enabling a tier of premium inference that’s tuned for enormous models and massive context lengths, which is exactly the direction cutting-edge AI systems are moving.

Strategically, NVIDIA appears to see Groq doing for inference what past acquisitions and partnerships did for other parts of its platform, such as networking. The underlying message is that as AI shifts toward more agentic systems (models that plan, act, call tools, and respond in near real time), latency-sensitive inference becomes mission-critical. By integrating Groq’s LPU approach into its Rubin-era infrastructure, NVIDIA is aiming to secure an advantage in the segment of AI compute where speed, responsiveness, and efficiency can matter as much as peak benchmark performance.

If NVIDIA delivers these gains in production deployments, this Rubin + Groq hybrid compute tray could become a major new option for AI providers looking to serve advanced models faster, at lower energy cost, and with better scalability for next-generation inference workloads.