NVIDIA is moving surprisingly fast with its next-generation Rubin AI platform. Although earlier expectations pointed to a launch window in the second half of 2026, the company has now confirmed that Rubin is already in full production as of Q1 2026. The accelerated schedule signals a clear strategy: stay ahead in the AI infrastructure race by shortening the gap between major architecture generations.

Rubin isn’t a sudden pivot, either. NVIDIA says development began roughly three years ago, and production work has been happening in parallel with Blackwell. That long runway helps explain how the company can compress timelines while still delivering major changes across compute, interconnect, and networking.

What’s especially notable is that NVIDIA’s real-world cadence has become tighter than the “annual” rhythm many people assume. Blackwell production ramped in the second half of 2025, Blackwell Ultra entered mass production in Q3 2025, and Rubin is now entering full production well ahead of earlier expectations. The takeaway is simple: NVIDIA is turning over architecture generations at a pace few competitors can match, which reinforces its position at the center of large-scale AI training and deployment.

On the demand side, hyperscalers and fast-growing cloud AI providers are expected to pursue Rubin aggressively. OpenAI has been officially disclosed as a committed customer, and market appetite for next-generation NVIDIA platforms remains intense as organizations race to expand training capacity and inference throughput.

NVIDIA also indicates that Rubin-based products will reach partners in the second half of 2026, with major cloud platforms among the first expected to deploy Vera Rubin-based instances. The early deployment list includes AWS, Google Cloud, Microsoft, and OCI, along with NVIDIA cloud partners such as CoreWeave, Lambda, Nebius, and Nscale. If those timelines hold, Rubin could quickly become a key revenue driver alongside continued Blackwell Ultra shipments.

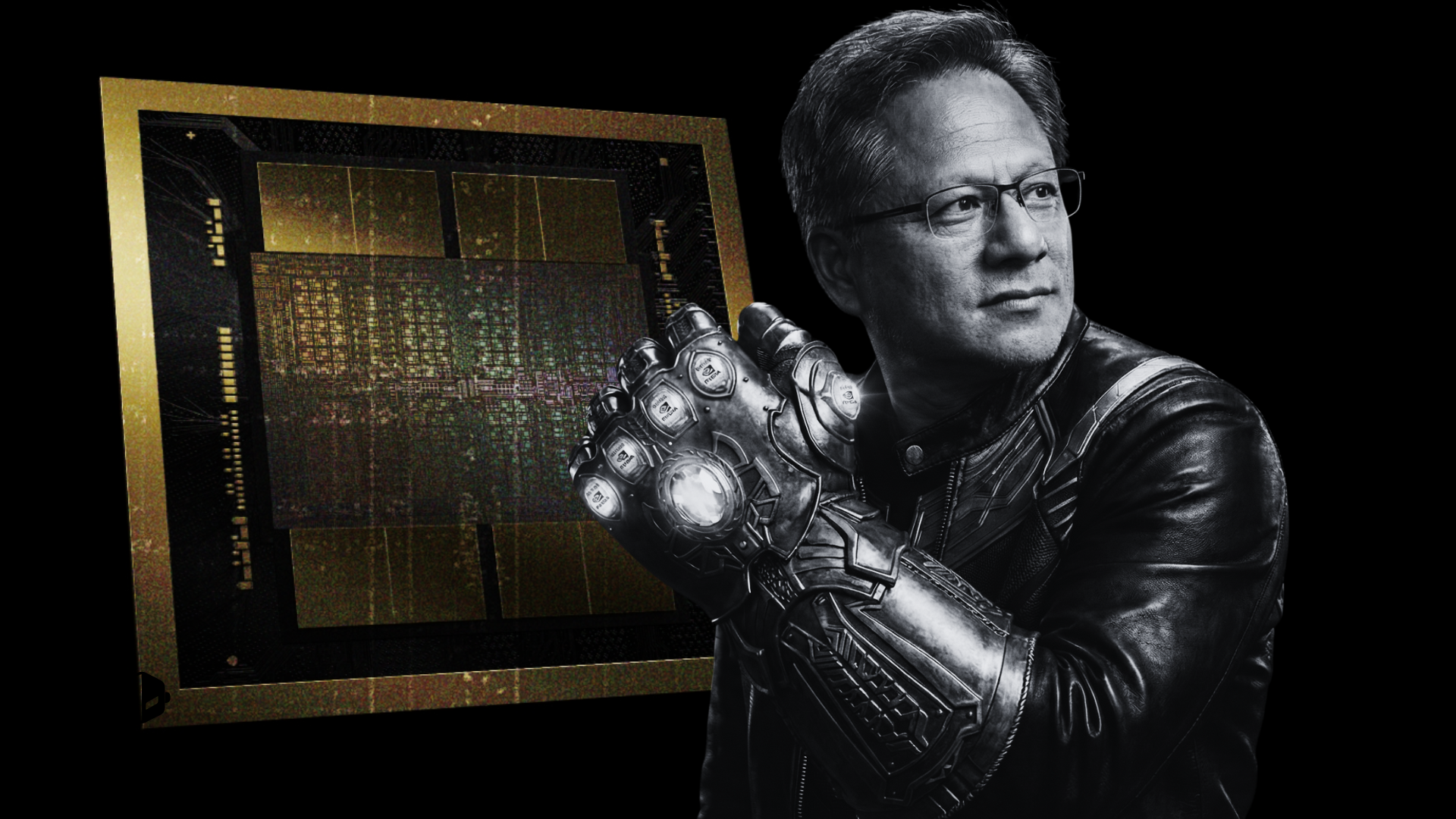

Rubin is more than just a new GPU. NVIDIA describes the Rubin platform as a six-chip lineup designed as a full-stack AI infrastructure solution spanning GPU compute, CPU, switching, networking, and photonics. The components include:

- The Rubin GPU, featuring 336 billion transistors
- The Vera CPU, featuring 227 billion transistors
- An NVLink 6 switch for high-speed GPU-to-GPU interconnect
- ConnectX-9 (CX9) and BlueField-4 (BF4) networking components
- Spectrum-X 102.4T CPO (co-packaged optics), targeting silicon photonics connectivity

With Rubin entering full production earlier than expected, NVIDIA is making its intent clear: accelerate the rollout of next-generation AI systems and keep its lead in AI training infrastructure, where performance, scale, and time-to-deploy are becoming just as important as raw compute.