NVIDIA’s next rack-scale platform, Kyber, aims to deliver a huge leap in GPU density and power efficiency for the era of AI factories

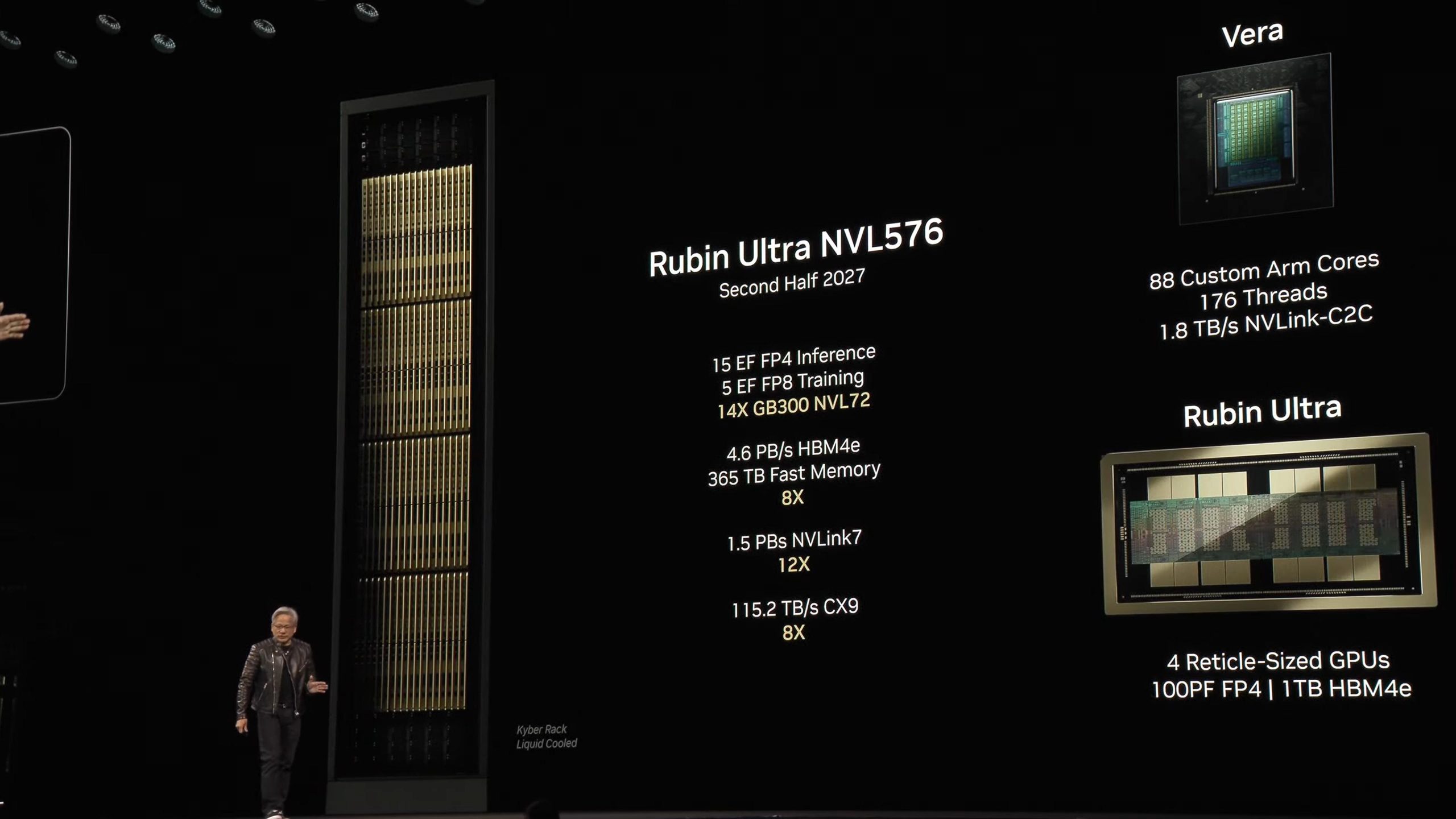

At the OCP Global Summit, NVIDIA outlined the next phase of its AI compute roadmap, spotlighting Kyber, a new rack-scale generation designed to replace today’s Oberon-based designs. The goal is ambitious: by 2027, Kyber racks are planned to host high-density platforms with up to 576 Rubin Ultra GPUs in a single configuration, referred to as NVL576. For data centers racing to train and serve ever-larger AI models, that kind of per-rack scale is a major unlock.

If you’re new to the terminology, Oberon and Kyber refer to rack-level architectures that define how accelerators, networking, power, and cooling are organized. Oberon has underpinned Blackwell systems (GB200/GB300). Kyber is the successor and is being engineered specifically for Rubin Ultra-era deployments, bringing major changes to how compute is packaged and powered.

One of the biggest shifts is a move to vertical, book-like compute blades. By stacking trays vertically, Kyber can cram more GPUs into the same footprint while improving cable management and airflow pathways. The design also integrates NVLink switch blades directly into the rack enclosure, simplifying scale-out and making maintenance more straightforward. With NVLink switching built in, clusters can grow with lower latency and less external complexity.

Kyber also overhauls power delivery. Instead of relying on the legacy 415/480 VAC three-phase approach, facilities move to an 800 VDC facility-to-rack model. That higher-voltage DC feed significantly improves efficiency and allows roughly 150% more power to be delivered over the same copper gauge. The implication is big: higher rack power budgets without an explosion in copper usage, which NVIDIA projects could translate into substantial cost savings at hyperscale. Liquid cooling and refined mechanical design within the OCP ecosystem round out Kyber’s push for higher performance per rack with better thermals and serviceability.

Why Kyber matters for AI infrastructure

– Higher GPU density per rack to accelerate training and inference at scale

– Integrated NVLink switch blades for easier scaling and lower-latency interconnects

– 800 VDC facility-to-rack power delivery for better efficiency and higher power throughput

– Liquid cooling and optimized mechanics to support sustained high-performance operation

– A path to NVL576 systems with up to 576 Rubin Ultra GPUs targeted around 2027

Taken together, this is a clear signal of where data center design is headed as AI workloads explode. By packing more compute into each rack and delivering it more efficiently, Kyber sets the stage for the next wave of large-scale AI clusters—maximizing performance, minimizing overhead, and keeping the upgrade path open for the Rubin Ultra generation and beyond.