Nvidia is responding to growing questions about DLSS 5, and the company’s latest clarification is likely to intensify the debate rather than settle it. After backlash to the initial DLSS 5 announcement and a wave of uncertainty about what the feature actually does, YouTuber Daniel Owen reached out directly to Nvidia’s Jacob Freeman for a clearer, plain-language explanation. Nvidia answered by email rather than in a live discussion, but the key takeaway is now much easier to understand.

According to Nvidia’s clarification, DLSS 5 takes what is already on your screen as its starting point. It uses a 2D frame (essentially a screenshot of the current display output) plus motion vectors, then runs that input through a generative AI model to “enhance” the image. The resulting image is then layered on top of the game’s normal render, rather than being a traditional improvement derived from the game engine’s underlying scene data.
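To make that data flow concrete, here is a minimal Python sketch of a post-process step that sees only a color frame and motion vectors. Everything in it is hypothetical: `PostProcessInputs`, `placeholder_model`, and `present` are illustrative names, not Nvidia's API, and the "model" is a trivial brightening function standing in for a generative network.

```python
from dataclasses import dataclass

Pixel = tuple[float, float, float]  # RGB values in [0, 1]
Vector = tuple[float, float]        # per-pixel screen-space motion


@dataclass(frozen=True)
class PostProcessInputs:
    """Hypothetical input bundle: no depth, geometry, or material data."""
    color: list[list[Pixel]]
    motion: list[list[Vector]]


def placeholder_model(inputs: PostProcessInputs) -> list[list[Pixel]]:
    """Stand-in for a generative model; it only brightens each pixel by 10%."""
    return [
        [tuple(min(1.0, channel * 1.1) for channel in pixel) for pixel in row]
        for row in inputs.color
    ]


def present(engine_frame: list[list[Pixel]],
            motion: list[list[Vector]]) -> list[list[Pixel]]:
    """Return the image that would be displayed; the engine's frame is not modified."""
    return placeholder_model(PostProcessInputs(engine_frame, motion))


engine_frame = [[(0.5, 0.5, 0.5), (0.2, 0.2, 0.2)]]
motion = [[(0.0, 0.0), (1.0, 0.0)]]
displayed = present(engine_frame, motion)
```

The point of the sketch is the function signature: the only things passed in are the finished frame and its motion vectors, so anything the step "knows" about the scene has to be read off those pixels.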

That immediately raises a major question: is DLSS 5 actually aware of real 3D geometry, depth, and the full scene information that artists and developers build inside the engine? Nvidia’s wording suggests it is not. The company emphasized that DLSS 5 is trained “end to end” to understand scene semantics such as characters, hair, fabric, translucent skin, and different lighting conditions—all from analyzing a single frame. Notably, that explanation focuses on interpreting the final image rather than accessing true 3D geometry.

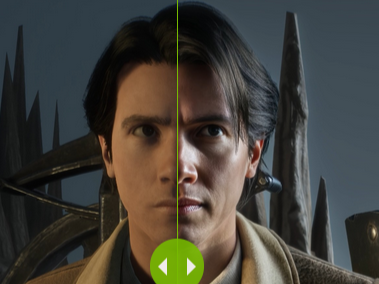

This distinction matters because some early examples appear to show subtle but real changes—textures and details that look different from the original render. Owen highlighted one example where a character appears to gain slightly more hair in a specific area after DLSS 5 is applied. When asked about these discrepancies, Nvidia held to its position that the underlying geometry remains unchanged and argued that what’s been shown so far is only an early preview.

Still, the concern remains: even if the underlying geometry and textures in the engine haven’t been altered, what the player ultimately sees on the screen could be changed by the AI’s interpretation. That can be a big deal for visual consistency and for preserving artistic intent, especially if the AI “decides” a slightly different look is more “realistic.”

Another point of friction involves materials and lighting—specifically PBR (physically based rendering), the standard approach developers use to define how surfaces should look under different lighting. Nvidia has stated that DLSS 5 can enhance PBR properties on materials. Owen pressed for clarification on whether DLSS 5 reads the developer-authored PBR inputs from the engine (like roughness values, normal maps, and other material parameters), or if it’s guessing those properties from the final image.

Nvidia’s reply: DLSS 5 does not take developer PBR specifications as inputs. It only uses the rendered frame and motion vectors, and it infers material properties from that image. Critics argue this makes earlier messaging about “enhancing PBR properties” sound more like AI interpretation than a faithful, engine-guided improvement—because the system isn’t consulting the original material data authored by the developer.
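A small worked example shows why inferring material properties from a finished image is inherently a guess. The sketch below assumes a deliberately simplified diffuse model in which a pixel's brightness is surface albedo multiplied by incoming light. That model and the `shaded_value` helper are illustrative assumptions, not a description of how DLSS 5 or any particular engine shades pixels.

```python
def shaded_value(albedo: float, light: float) -> float:
    """Simplified diffuse shading (assumption): brightness = albedo * light."""
    return albedo * light


# Two different material/lighting combinations...
dark_surface_bright_light = shaded_value(albedo=0.25, light=1.0)
bright_surface_dim_light = shaded_value(albedo=0.5, light=0.5)

# ...produce the same final pixel value, so the pixel alone cannot
# reveal which albedo the developer actually authored.
same_pixel = dark_surface_bright_light == bright_surface_dim_light
```

With only the final value available, a model has to pick one of many plausible explanations, whereas the engine's authored material data would state the albedo directly.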

Then there’s the issue that’s arguably most important for many players and creators: control. If DLSS 5 is effectively repainting the final image, can developers tell it what to do—or what not to do—beyond basic tuning? Owen raised the example of a scene where a character appears without makeup in the original presentation, but looks like she’s wearing makeup with DLSS 5 enabled. The practical question is simple: if that change conflicts with the intended art direction, can developers instruct DLSS 5 to preserve the original look for that character or that scene?

Nvidia provided a lengthy response that largely repeated prior official language, without clearly confirming whether developers can direct the model to avoid specific alterations at the object or character level. As of now, based on the clarification given, it’s hard to conclude that the current early version offers strong tools to guarantee artistic intent is preserved—though Nvidia’s “early preview” framing suggests the company expects iteration and improvement.

Owen also pressed on technical limitations: is DLSS 5 strictly screen-space? Nvidia confirmed that it is limited to screen space. That means it only knows what is visible in the frame it’s enhancing and does not have awareness of off-screen elements that can affect lighting and reflections. Nvidia’s response also implies DLSS 5 isn’t truly “understanding” ray tracing data in the way many would assume from a next-generation graphics feature.
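The screen-space limit can be illustrated with a few lines of Python. This is a generic sketch of any technique that can only sample the current frame, in the spirit of the familiar limitation of screen-space reflections; `sample_screen_space` is a hypothetical helper, not part of DLSS.

```python
from typing import Optional


def sample_screen_space(frame: list[list[float]], x: int, y: int) -> Optional[float]:
    """Return the frame value at (x, y), or None if the point is off-screen."""
    height = len(frame)
    width = len(frame[0]) if height else 0
    if 0 <= y < height and 0 <= x < width:
        return frame[y][x]
    # Nothing outside the frame exists as far as a screen-space method is concerned.
    return None


frame = [
    [0.1, 0.2, 0.3],
    [0.4, 0.5, 0.6],
]

on_screen = sample_screen_space(frame, x=2, y=1)   # inside the frame
off_screen = sample_screen_space(frame, x=3, y=1)  # one pixel past the right edge
```

A light source or reflected object sitting just outside the frame lands in the `None` case: the data is simply not there to sample, so any contribution from it would have to be guessed.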

That leads to an obvious follow-up that many gamers will ask: if DLSS 5 doesn’t incorporate the deeper scene calculations, what’s the point of enabling expensive effects like ray tracing, especially when DLSS 5 produces its final enhanced image without truly accounting for those complex lighting interactions? The bigger worry is that some people may treat DLSS 5 as a replacement for physically accurate rendering rather than a complement to it, potentially shifting the visual goal from “render the scene correctly” to “generate a more appealing-looking frame.”

Finally, Owen asked a broader industry question about the future: with machine learning acceleration becoming more hardware-agnostic through new platform support, could proprietary technologies like DLSS 5 eventually become less locked down? Nvidia’s answer was noncommittal, saying it has nothing to announce right now—neither confirming nor fully shutting the door on potential changes down the road.

For now, Nvidia’s clarification makes DLSS 5’s direction much clearer: it’s a generative, screen-space enhancement layer that works from the final rendered frame and motion vectors, not from full engine-level geometry and material inputs. Whether that becomes a groundbreaking leap in perceived image quality or a controversial step away from creator-controlled rendering will likely depend on two things: how much control developers are ultimately given, and how consistently the AI can enhance visuals without rewriting the look of characters, materials, and scenes in ways that players and artists didn’t intend.