China has announced a major new high-performance computing project in Shenzhen: the LineShine supercomputer. Unveiled at a conference hosted by the National Supercomputing Center in Shenzhen, LineShine is being positioned as the country’s fastest domestic supercomputer initiative and is designed to push beyond 2 ExaFLOPS of computing performance.

If the project delivers as described, LineShine will sit firmly in the top tier of global supercomputers. Its headline target is more than 2 ExaFLOPS of compute, alongside an architecture built for sustained performance rather than just theoretical peaks. Unlike many modern supercomputers that lean heavily on GPU acceleration, LineShine is described as a CPU-only platform, built around highly efficient processors, high-bandwidth memory, and a high-speed interconnect to keep data moving quickly between nodes.

A key part of the announcement is the focus on supply chain independence. The system is intended to be built using domestically produced technology, with no reliance on international vendors. Beyond hardware, the platform is also being developed with a full software ecosystem in mind, including a complete toolchain made up of compiler support, debugging, and performance tuning tools—an important requirement for real-world scientific computing and large-scale AI deployments.

Two-phase build plan with large-scale expansion in mind

LineShine is planned in two phases. The first is a pilot verification system based on 100 servers using Huawei Kunpeng, adding up to 12,800 CPU cores. The second phase, described as an industrial complex, will significantly scale the machine with 1,580 blade servers built around x86 CPUs. This phase is said to total 101,120 cores and deliver a theoretical peak performance of more than 10 petaflops.

The configuration details shared include a 16 four-way server layout totaling 2,048 cores, plus four eight-way servers totaling 1,280 cores. While these numbers represent specific building blocks rather than the full final system, they highlight the modular approach underpinning the broader scale-out strategy.

Massive compute footprint, interconnect, and storage ambitions

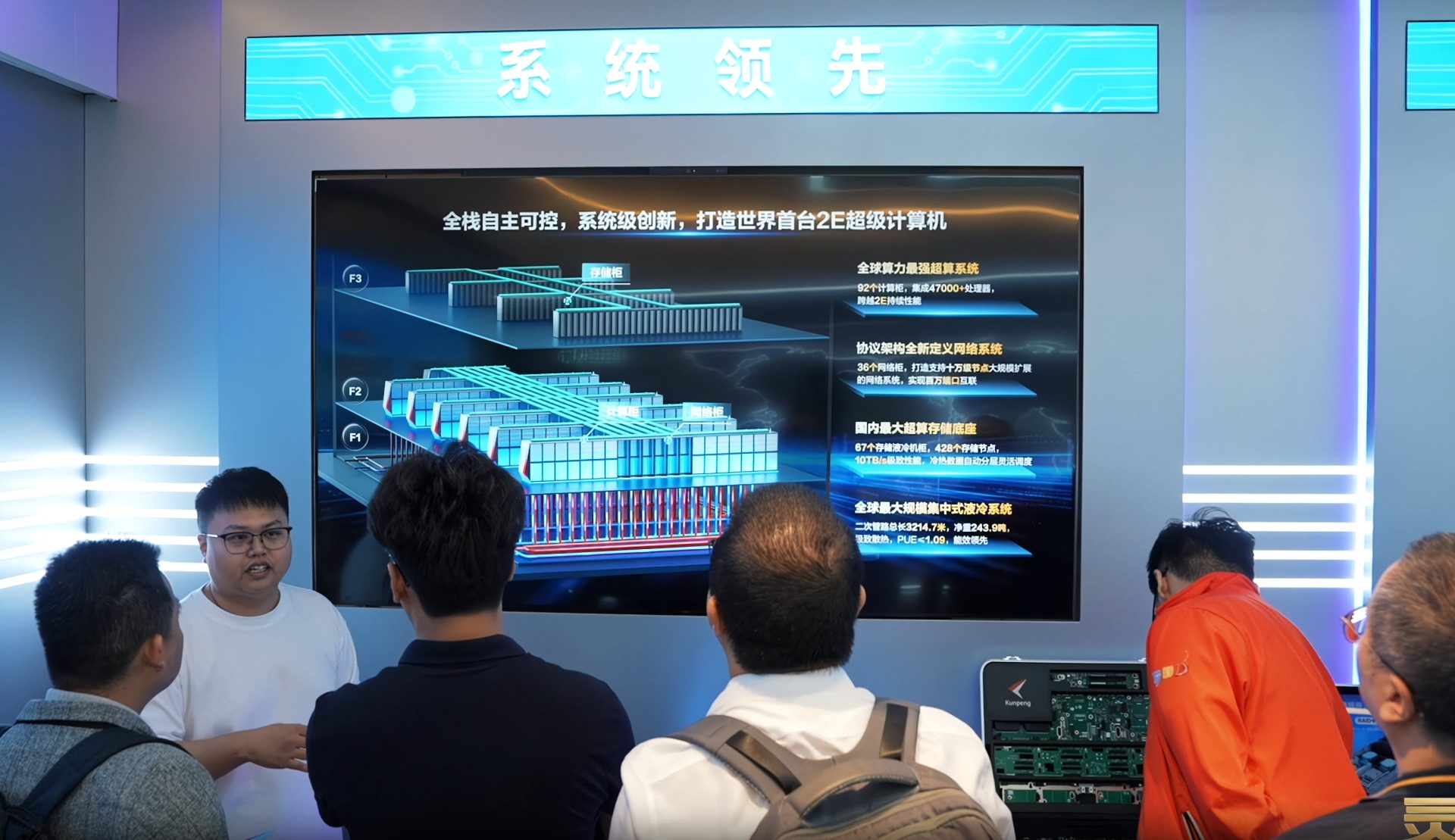

The overall buildout described for LineShine is enormous. The full system is said to include 92 compute cabinets and around 47,000 CPUs, alongside 36 network cabinets designed for expansion to hundreds of thousands of nodes. The interconnect plan is equally ambitious, with a million-port network cited—suggesting a design meant to scale aggressively as demand grows.

Storage is another centerpiece. The project aims to form what is described as China’s largest supercomputing storage base, pairing high capacity with high throughput. The storage system is expected to include 67 liquid-cooled storage cabinets, 428 storage nodes, and bandwidth of up to 10 TB/s. Total storage capacity planned for the full system is stated as 650 PB, a level intended to support data-heavy HPC workloads and modern AI training pipelines.

Liquid cooling at extreme scale

To manage power and thermal density, LineShine is also being framed as the world’s largest liquid-cooling solution for a supercomputing platform. The cooling infrastructure is described in unusually concrete terms: secondary piping spanning 3,214.7 meters, with a net weight of 243.9 tons. Those figures signal just how physically large and industrial this installation is intended to be.

Built for AI and HPC, with mixed-precision support

While LineShine is presented as a broad-purpose supercomputing system, AI is clearly a major target. The design includes a “Fusion Architecture” that integrates SMT accelerators aimed at improving matrix and mathematical operations. It also emphasizes full-stack mixed-precision support—FP64, FP32, FP16, and INT8—which is essential for serving everything from traditional scientific simulations to modern deep learning workloads efficiently.

In early testing, LineShine reportedly measured per-CPU throughput on DeepSeek at 578 tokens per second, with an expectation that overall system throughput will be 100 times higher at scale. The platform is also positioned to support mainstream models such as Qwen, along with various domestically produced AI models.

Beyond AI model training and inference, LineShine is being aimed at classic high-performance computing applications including remote sensing, materials science, bioinformatics, meteorology, pharmaceuticals, oil exploration, life sciences, and electromagnetic simulation—use cases where compute density, memory bandwidth, fast networking, and massive storage all matter.

When will LineShine go online?

No official operational timeline was provided in the announcement. However, based on the scale of deployment and the complexity of bringing up a new domestic ecosystem at this level, a realistic window for the system to come online could be around 2029 to 2030.

With its stated goals—over 2 ExaFLOPS, CPU-only design, domestic production, extreme liquid cooling, and a storage footprint measured in hundreds of petabytes—LineShine is shaping up to be one of the most ambitious supercomputing projects in the world, and one that could significantly expand China’s capacity for both AI and scientific computing.