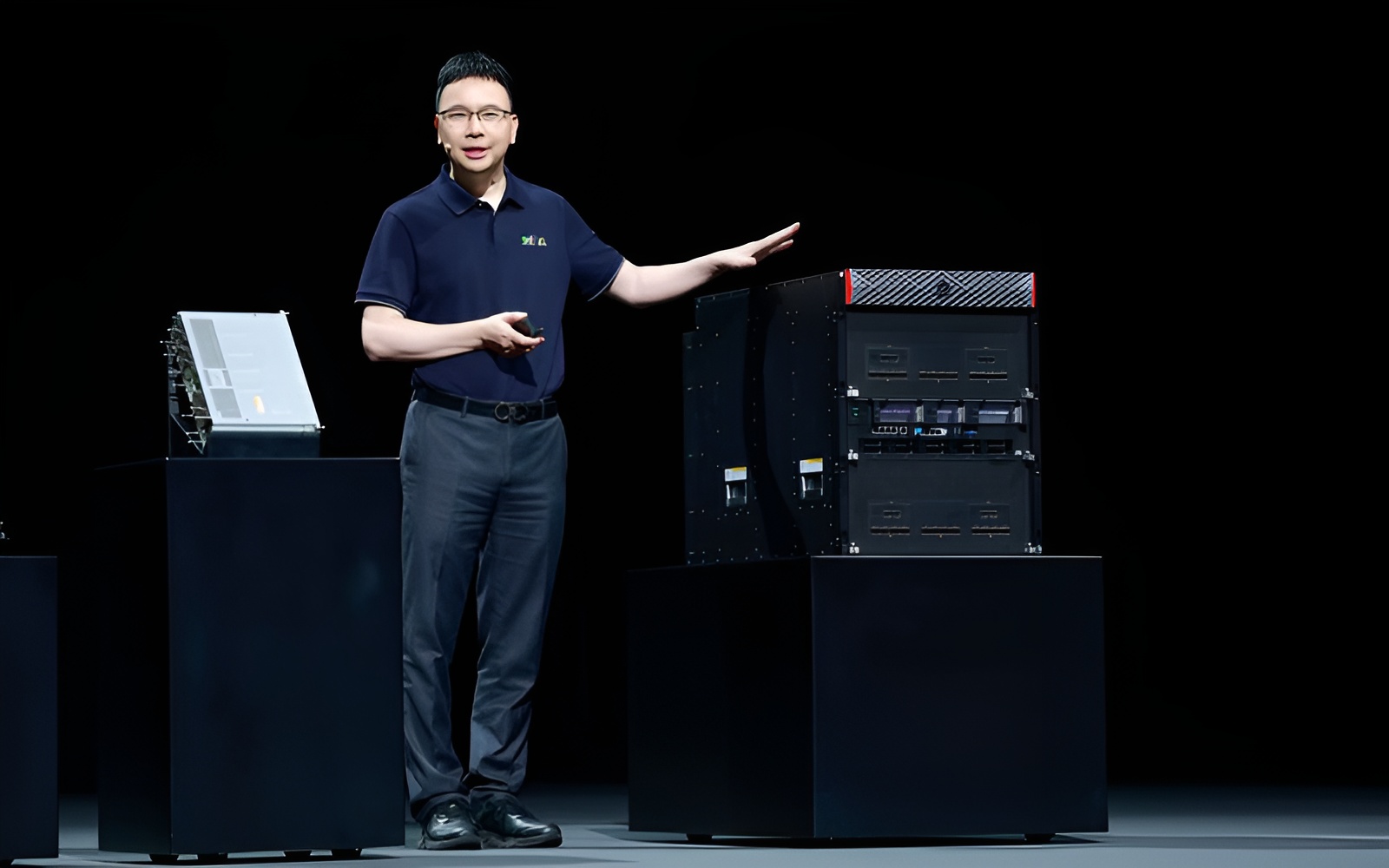

Huawei is preparing to pull back the curtain on its most powerful AI computing system yet, and it’s doing it on one of the biggest stages in the industry. At this year’s Mobile World Congress (MWC), the company plans to publicly showcase the Atlas SuperPoD 950 AI cluster for the first time, positioning it as a serious alternative to NVIDIA’s next-generation Vera Rubin-style AI racks.

The move is notable not just because of the hardware involved, but because of where Huawei is choosing to unveil it. Bringing the Atlas SuperPoD 950 to Europe signals that Huawei wants to be viewed as a global contender in AI infrastructure, not only a domestic option for Chinese buyers. It also feeds into a larger push across China to reduce reliance on American technology and build a more self-sufficient AI computing ecosystem, an effort that has intensified as the country accelerates investment in homegrown chips and data center platforms.

So what exactly is Huawei promising with the Atlas SuperPoD 950?

According to Huawei’s own figures, the system is designed around a massive deployment of Ascend 950 AI chips: up to 8,192 of them in a single cluster. The company claims this adds up to a cumulative performance of 8 exaFLOPS (EFLOPS) at FP8 precision and 16 EFLOPS at FP4, with throughput doubling as precision is halved. Those levels are aimed squarely at modern AI training and large-scale inference workloads. Just as important for today’s largest models, Huawei lists total memory capacity at 1,152 TB, reflecting how memory capacity has become a key bottleneck for cutting-edge generative AI.
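
To put those cluster-wide totals in perspective, it helps to divide them by the chip count. The snippet below is a rough back-of-the-envelope sketch, not a benchmark: it uses only the figures Huawei has quoted, the function names are made up for illustration, and it assumes that 1 TB means 1,024 GB (with decimal units the memory figure comes out slightly lower).

```python
# Cluster-level figures as quoted by Huawei (vendor claims, not measurements).
CHIPS = 8192        # Ascend 950 chips per cluster
FP8_EFLOPS = 8.0    # claimed aggregate FP8 throughput
MEMORY_TB = 1152    # claimed total memory capacity


def fp8_pflops_per_chip(total_eflops: float, chips: int) -> float:
    """Average FP8 throughput per chip in PFLOPS (1 EFLOPS = 1,000 PFLOPS)."""
    return total_eflops * 1000 / chips


def memory_gb_per_chip(total_tb: float, chips: int, gb_per_tb: int = 1024) -> float:
    """Average memory per chip in GB; gb_per_tb=1024 is an assumption."""
    return total_tb * gb_per_tb / chips


if __name__ == "__main__":
    print(f"{fp8_pflops_per_chip(FP8_EFLOPS, CHIPS):.3f} PFLOPS FP8 per chip")
    print(f"{memory_gb_per_chip(MEMORY_TB, CHIPS):.1f} GB of memory per chip")
```

Under those assumptions, the headline numbers work out to a little under 1 PFLOPS of FP8 compute and 144 GB of memory per chip (about 141 GB if 1 TB is taken as 1,000 GB).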

Interconnect is another major pillar of the pitch. Huawei says the Atlas SuperPoD 950 uses a new fabric called UnifiedBus, presented as an alternative to NVIDIA’s NVLink-style approach for fast chip-to-chip and node-to-node communication. In Huawei’s disclosures, the cluster reaches a total interconnect bandwidth of 16.3 PB/s, an attention-grabbing number in a market where networking and communication overhead can make or break real-world AI performance.
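
The aggregate bandwidth figure is easier to judge once it is spread across the chips. The following is a simple illustrative calculation, assuming the 16.3 PB/s total is shared evenly by all 8,192 chips and that 1 PB/s equals 1,000 TB/s. Huawei has not confirmed that the total is counted this way, and the function name is invented for this example.

```python
# Figures as quoted by Huawei (vendor claims, not measurements).
CHIPS = 8192                # Ascend 950 chips per cluster
INTERCONNECT_PB_S = 16.3    # claimed total interconnect bandwidth


def avg_bandwidth_tb_s_per_chip(total_pb_s: float, chips: int) -> float:
    """Average interconnect bandwidth per chip in TB/s.

    Assumes an even split across chips and 1 PB/s = 1,000 TB/s.
    """
    return total_pb_s * 1000 / chips


if __name__ == "__main__":
    per_chip = avg_bandwidth_tb_s_per_chip(INTERCONNECT_PB_S, CHIPS)
    print(f"{per_chip:.2f} TB/s of interconnect bandwidth per chip")
```

On that reading, the claim amounts to roughly 2 TB/s per chip. It is an average derived from a marketing total, so it says nothing about latency, topology, or how much of that bandwidth real workloads can sustain.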

Huawei has also published comparisons framing these specifications against Vera Rubin rack configurations such as NVL144 and NVL576, emphasizing what it describes as a sizable advantage on paper. The key phrase there is “on paper.” As with any large AI system announcement, the real test will be independent performance validation, software maturity, and availability at scale.

There are also practical realities behind the headline stats. Packing thousands of AI chips into a single tightly coupled system raises obvious questions around power delivery, cooling, and operating efficiency. There are suggestions that Huawei may be pushing aggressive power and thermal limits to hit the performance levels it’s advertising. In the same vein, Huawei’s disclosed physical footprint is eye-catching too: a full Atlas SuperPoD 950, which Huawei has described as spanning 160 cabinets rather than a single rack, can occupy up to 1,000 square meters. That highlights just how infrastructure-heavy these ultra-dense AI deployments can be.

The Atlas SuperPoD 950 debut at MWC makes one thing clear: Huawei is escalating its push into the AI compute race and wants customers and industry watchers to take its data center ambitions seriously. Still, the biggest challenge may not be engineering but adoption and supply. Even as Huawei and other Chinese chipmakers gain momentum, the long-term question is whether the company can meet customer demand consistently as it scales production, expands deployments, and convinces organizations that its platform is a dependable alternative in real-world AI data centers.