NVIDIA has become one of the most influential companies in artificial intelligence, largely because its GPUs and software platform have turned into the default toolkit for training and running many of today’s AI systems. That powerful ecosystem has helped push NVIDIA’s market value to extraordinary heights and cemented its position as the headline name in AI computing.

Now, a new perspective is putting a spotlight on a potential challenger with the scale and technology to truly pressure NVIDIA: Google. Stephen Witt, author of The Thinking Machine, recently argued that Google could be NVIDIA’s toughest obstacle in the AI race. In his view, if Google’s AI strategy pays off, especially through its Gemini large language models and its in-house TPU (Tensor Processing Unit) hardware, NVIDIA could face serious trouble.

Why does this matter? The competition isn’t only about raw performance. The TPU vs. NVIDIA GPU debate reaches into everything that determines who “wins” AI infrastructure: which platform developers prefer, which chips are available in volume, how efficiently models can be trained and served, and which architecture becomes the most widely adopted beyond a company’s own walls.

Google’s TPU is often described as one of the strongest examples of custom AI silicon on the market: an ASIC (application-specific integrated circuit) designed specifically for machine learning workloads. That focus can translate into impressive efficiency and tight integration with Google’s AI stack. At the same time, Google reportedly faces manufacturing pressure tied to advanced packaging bottlenecks, an issue that can limit how quickly even the best chip designs can scale. NVIDIA, by contrast, is widely seen as having supply capacity planned out far ahead, which has been a major advantage during the recent surge in AI demand.

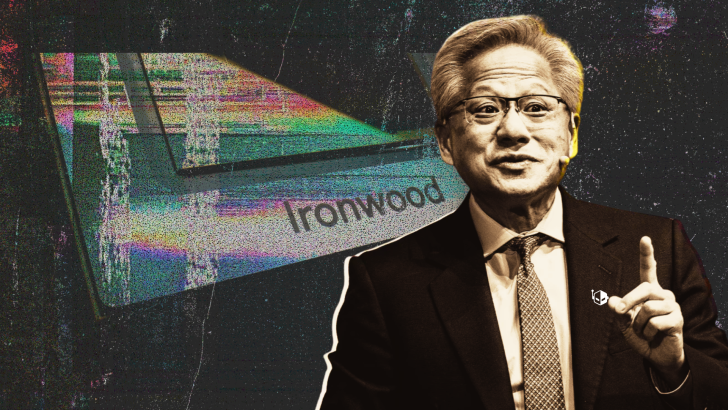

Witt also pointed to something more personal and arguably more revealing about how NVIDIA stays ahead: the mindset of CEO Jensen Huang. Rather than being fueled primarily by optimism, Witt suggests, Huang is driven by fear of failure and by emotions like guilt and even shame, which push him to work relentlessly to keep NVIDIA in front. The argument is that this intensity has helped NVIDIA move quickly through every major AI shift, from pre-training to inference, by investing early and broadly so customers continue to see NVIDIA as the safest and most capable choice.

That urgency may be more important now than ever. The AI hardware market is no longer a one-company story. Rivals such as AMD, Google, and Amazon are accelerating their own AI chip plans, each aiming to offer alternative paths for training and deploying models at scale. With more viable competitors and more organizations looking to reduce dependence on any single vendor, NVIDIA’s next moves will be scrutinized closely.

The big question going forward is straightforward: can NVIDIA maintain its leadership as more companies push custom AI processors and full-stack AI platforms, or will Google’s TPUs and AI model ecosystem become the disruptive force that reshapes the balance of power in AI computing?