Bolt Graphics says its Zeus GPU is now a real piece of silicon, not just a set of ambitious slides. The company has confirmed that its Zeus test chip has successfully taped out at TSMC using a 12nm FFC process, marking a major milestone on the road to a commercial launch.

Zeus is positioned as more than a gaming graphics card. Bolt Graphics describes it as a GPU architecture built to span multiple markets, including HPC (high-performance computing) and AI, with an emphasis on practicality: keeping cost, power consumption, and rack space under control. The architecture has reportedly been validated on FPGA hardware and evaluated with customers over the past four years, and the company says the broader Zeus platform pairs custom hardware with a complete software stack to form a unified system for different compute workloads. While the current test chip is on 12nm, Bolt also notes that the scalable design can target advanced nodes, including 5nm.

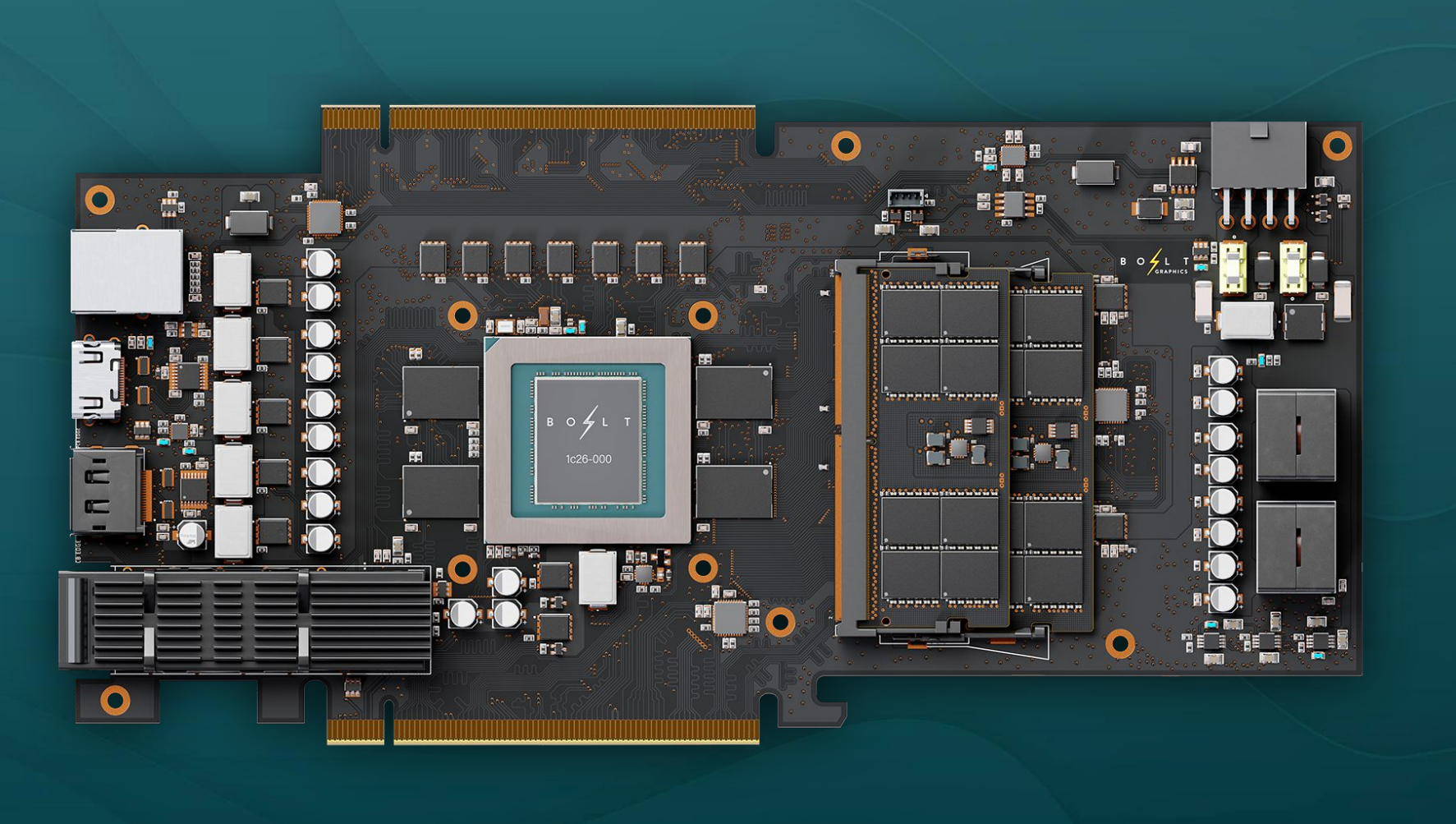

For deployment, Zeus is planned in two main form factors: PCIe add-in cards and 2U server configurations. Bolt outlines two core card families: a single-chip version called Bolt Zeus 1c26 and a dual-chiplet version called Bolt Zeus 2c26, with multiple memory options.

The Bolt Zeus 1c26 (single-chip) is described as a single-slot, full-length PCIe card rated at 120W total board power. It targets 5/10/20 TFLOPS for FP64/FP32/FP16 vector compute, along with 307.2/614.4 TOPS for INT16/INT8 matrix performance. It includes 128MB of on-chip cache and comes with 32GB of LPDDR5X, plus two DDR5 SO-DIMM slots. Bolt also lists “up to 160GB” of memory capacity, a figure that presumably counts DDR5 installed in the SO-DIMM slots on top of the onboard LPDDR5X, at 363GB/s of bandwidth. For ray tracing workloads, it’s rated at 77 gigarays of path tracing performance, and it supports media encode/decode for AV1 and H.264/H.265 at up to 2x 8K60 streams.

The Bolt Zeus 2c26 (dual-chiplet) scales the design into a dual-slot, full-length PCIe form factor with a 250W board power rating. Compute scales up to 10/20/40 TFLOPS (FP64/FP32/FP16) and 614.4/1228.8 TOPS (INT16/INT8). Cache doubles to 256MB on-chip, and memory options are listed as 64GB or 128GB of LPDDR5X, paired with four DDR5 SO-DIMM slots. Bandwidth is quoted at 725GB/s, with capacity figures ranging from “up to 320GB” to “up to 384GB” depending on configuration. Path tracing is listed at 154 gigarays, and media capability rises to 4x 8K60 streams.
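To make the step from one chip to two easier to see, here is a minimal Python sketch that tabulates the figures quoted above and computes the 2c26-to-1c26 ratio for each. The `CardSpec` class and the helper names are illustrative only and do not come from Bolt, and the numbers are Bolt's stated targets rather than measured results.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CardSpec:
    """Headline figures as quoted in the article (vendor targets, not benchmarks)."""
    name: str
    board_power_w: float
    fp64_tflops: float
    fp32_tflops: float
    fp16_tflops: float
    int8_tops: float
    cache_mb: float
    bandwidth_gb_s: float
    gigarays: float


ZEUS_1C26 = CardSpec("Zeus 1c26", 120, 5, 10, 20, 614.4, 128, 363, 77)
ZEUS_2C26 = CardSpec("Zeus 2c26", 250, 10, 20, 40, 1228.8, 256, 725, 154)


def scaling(field: str) -> float:
    """Ratio of the dual-chiplet figure to the single-chip figure."""
    return getattr(ZEUS_2C26, field) / getattr(ZEUS_1C26, field)


def per_watt(card: CardSpec, field: str) -> float:
    """Quoted figure divided by rated board power (a rating, not measured draw)."""
    return getattr(card, field) / card.board_power_w


if __name__ == "__main__":
    fields = ("board_power_w", "fp64_tflops", "int8_tops",
              "cache_mb", "bandwidth_gb_s", "gigarays")
    for field in fields:
        print(f"{field:>16}: x{scaling(field):.2f}")
    for card in (ZEUS_1C26, ZEUS_2C26):
        print(f"{card.name}: {per_watt(card, 'gigarays'):.3f} gigarays per watt")
```

On these numbers, compute, cache, and path tracing double exactly, bandwidth comes in just under 2x, and rated board power rises slightly more than 2x (250W versus 120W), so the dual-chiplet card's on-paper performance per watt is marginally lower than the single-chip card's.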

Across the PCIe cards, Bolt Graphics also highlights modern connectivity and I/O: 400GbE networking over QSFP-DD, PCIe 5.0 x16 links, and display outputs listed as DisplayPort 2.1a and HDMI 2.1b.

On the server side, Bolt points to a 2U Zeus system that scales the design much further. The company claims this platform can reach as much as 2GB of on-chip cache and 1228 gigarays of path tracing capability. The release also mentions multi-terabyte memory capacity and multi-terabyte-per-second bandwidth in the server and rack context.
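The 2U figures invite a quick sanity check against the per-chip numbers quoted for the 1c26 card. The sketch below is back-of-the-envelope arithmetic, not a configuration Bolt has confirmed: it assumes the server's cache and gigaray totals are simple sums over identical chips, and that "2GB" means 2048MB. The variable names are made up for illustration.

```python
# Per-chip figures as quoted for the single-chip Zeus 1c26.
CACHE_PER_CHIP_MB = 128
GIGARAYS_PER_CHIP = 77

# 2U server claims as quoted. Assumption: 2GB is read as 2 * 1024 MB.
SERVER_CACHE_MB = 2 * 1024
SERVER_GIGARAYS = 1228

# Implied chip counts if the totals scale linearly with chip count.
chips_from_cache = SERVER_CACHE_MB / CACHE_PER_CHIP_MB
chips_from_rays = SERVER_GIGARAYS / GIGARAYS_PER_CHIP

if __name__ == "__main__":
    print(f"Implied chips from cache:    {chips_from_cache:.2f}")
    print(f"Implied chips from gigarays: {chips_from_rays:.2f}")
```

Both estimates land at or near 16 chips, which suggests the two claims are at least consistent with each other. The gigaray estimate falls slightly short of 16, which may reflect rounding in the quoted per-chip or server figure.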

The biggest attention-grabber is Bolt’s updated performance positioning versus NVIDIA’s high-end RTX 5090. Bolt claims up to 5x higher path tracing performance when comparing a 250W Zeus 2c26 configuration against a 575W RTX 5090. For HPC workloads, the company cites up to a 6x uplift. It also mentions a massive 300x increase in EM (electromagnetic) simulation performance, though that number is based on comparing a four-chip Zeus setup against a single RTX 5090, which is an important detail for anyone weighing the claim.
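Because these comparisons mix different power budgets and chip counts, it helps to normalize them. The following Python sketch does that with the figures quoted above. It treats the rated board power of each card as a stand-in for actual draw and assumes the four-chip EM result scales linearly per chip, neither of which Bolt has confirmed. The function and variable names are hypothetical.

```python
def perf_per_watt_ratio(speedup: float, power_a_w: float, power_b_w: float) -> float:
    """Perf-per-watt advantage of A over B, given A's raw speedup over B.

    (perf_a / power_a) / (perf_b / power_b) == speedup * power_b / power_a
    """
    return speedup * power_b_w / power_a_w


# "Up to 5x" path tracing: 250W Zeus 2c26 versus 575W RTX 5090.
path_tracing_ppw = perf_per_watt_ratio(5, power_a_w=250, power_b_w=575)

# "300x" EM simulation: four Zeus chips versus one RTX 5090.
# Assumption: linear scaling, so the per-chip figure is the total divided by four.
em_speedup_per_chip = 300 / 4

if __name__ == "__main__":
    print(f"Path tracing, perf per rated watt: up to {path_tracing_ppw:.1f}x")
    print(f"EM simulation, per Zeus chip:      up to {em_speedup_per_chip:.0f}x")
```

Read this way, the path tracing claim works out to roughly 11.5x per rated watt, while the EM simulation claim shrinks to about 75x per chip. All of these remain vendor "up to" figures with no independent benchmarks behind them yet.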

Bolt also argues that Zeus could deliver a compelling total cost of ownership advantage by relying on LPDDR5X and DDR5 memory rather than GDDR-style graphics memory. The company’s pitch is that this approach enables much higher memory capacities in rack-scale configurations while keeping overall platform costs lower for HPC and path tracing-focused workflows.

As for when you’ll actually be able to buy one, Bolt Graphics currently targets mass production and product availability near the end of 2027. If memory pricing and supply stabilize by then, Zeus could arrive at a moment when large-memory accelerators are in especially high demand across AI, simulation, rendering, and research computing.