Tenstorrent has offered an early look at a new, optimized AI video generation model running on its Blackhole-based servers, and the results point to a major leap in real-time video creation performance.

AI video generation is typically limited by compute power. The more capable the accelerators and the better the model is tuned for the hardware, the faster you can turn prompts into usable clips. Tenstorrent is pushing that idea hard with an optimized build of Wan2.2-14B, a 14-billion-parameter video model running across servers packed with several hundred Blackhole accelerators.

In a live demo presented to EE Times, Tenstorrent showed the model generating a 720p video (81 frames, 40 steps) in about five seconds. What makes that especially notable is that the finished clip was also five seconds long, so generation time essentially matched playback time. Tenstorrent also says it has achieved an even faster run: 2.4 seconds to generate a similar five-second video, which the company claims is roughly 10 times quicker than competing solutions.
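To put those figures side by side, here is a minimal back-of-the-envelope sketch in Python. It uses only the numbers quoted above; the `realtime_factor` helper is a hypothetical name introduced for illustration, and the calculation assumes the faster 2.4-second run used the same 81-frame, five-second clip format, which Tenstorrent describes only as "similar".

```python
def realtime_factor(generation_s: float, playback_s: float) -> float:
    """Generation time divided by playback time; 1.0 means real time."""
    return generation_s / playback_s


FRAMES = 81        # frames in the demo clip (from the article)
PLAYBACK_S = 5.0   # clip length in seconds (from the article)

# Frame rate implied by 81 frames spread over a five-second clip.
implied_fps = FRAMES / PLAYBACK_S

# Live demo: about five seconds to generate a five-second clip.
demo_rtf = realtime_factor(5.0, PLAYBACK_S)

# Claimed faster run: 2.4 seconds for a similar five-second clip
# (assumption: same frame count and clip length as the demo).
fast_rtf = realtime_factor(2.4, PLAYBACK_S)

print(f"implied frame rate: {implied_fps:.1f} fps")
print(f"demo real-time factor: {demo_rtf:.2f}")
print(f"fast-run real-time factor: {fast_rtf:.2f}")
print(f"fast-run speed vs. playback: {1 / fast_rtf:.2f}x")
```

A real-time factor below 1.0 means the clip is produced faster than it plays back; under the stated assumption, the 2.4-second run would come in at roughly twice playback speed.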

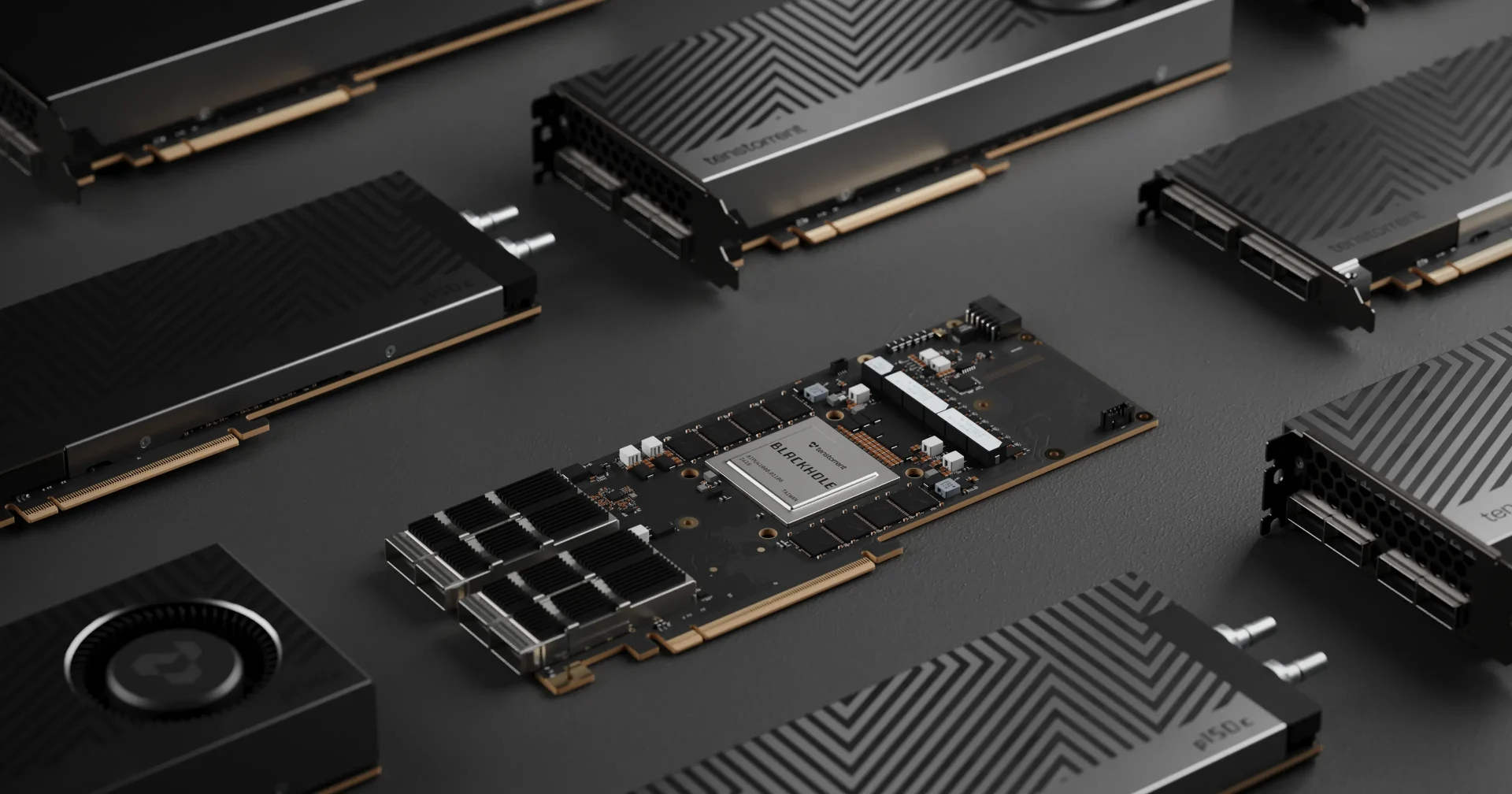

This speed comes from a combination of model optimization and dense accelerator deployment. Tenstorrent is using an optimized variant of Wan2.2-14B created by Prodia and running it across four Galaxy servers equipped with 256 Blackhole accelerators in total. Each Blackhole accelerator includes 16 RISC-V CPU cores paired with 32 GB of GDDR6 memory, a configuration designed to keep AI workloads fed with both compute and bandwidth. Blackhole is the flagship part at the top of Tenstorrent’s AI product stack.
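For a sense of scale, the cluster totals implied by those per-accelerator figures can be tallied with simple arithmetic. This is a rough sketch based only on the numbers quoted above; it assumes the 256 accelerators are split evenly across the four servers, which the article does not state, and the variable names are illustrative.

```python
SERVERS = 4                 # Galaxy servers in the demo (from the article)
ACCELERATORS = 256          # Blackhole accelerators in total (from the article)
CPU_CORES_PER_ACCEL = 16    # RISC-V CPU cores per accelerator (from the article)
GDDR6_GB_PER_ACCEL = 32     # GDDR6 capacity per accelerator (from the article)

# Assumption: accelerators are distributed evenly across the servers.
accels_per_server = ACCELERATORS // SERVERS

# Cluster-wide totals derived from the per-accelerator figures.
total_cpu_cores = ACCELERATORS * CPU_CORES_PER_ACCEL
total_gddr6_gb = ACCELERATORS * GDDR6_GB_PER_ACCEL

print(f"accelerators per server: {accels_per_server}")
print(f"total RISC-V CPU cores: {total_cpu_cores}")
print(f"total GDDR6: {total_gddr6_gb} GB")
```

Under these assumptions the demo cluster works out to 64 accelerators per server, 4,096 RISC-V CPU cores, and 8,192 GB of GDDR6 in aggregate.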

Tenstorrent attributes the performance jump to its unified architecture approach. Instead of leaning on proprietary technology, the company emphasizes an open compute platform where processing cores, memory, and networking are designed to work together smoothly when running large AI models. The goal is to reduce friction and bottlenecks that can slow down end-to-end inference, especially for demanding tasks like high-resolution video generation.

This is being framed as a preview rather than a final launch. Tenstorrent is expected to share additional performance demonstrations in the coming week as it gears up to introduce next-generation, cluster-scale systems aimed at even larger deployments and faster AI inference.