During the Lunar New Year celebrations, Chinese AI startup DeepSeek drew significant attention and sparked a debate across the tech world: will advances in AI model efficiency increase or decrease demand for chip hardware? The industry finds itself at a crossroads, and experts are divided.

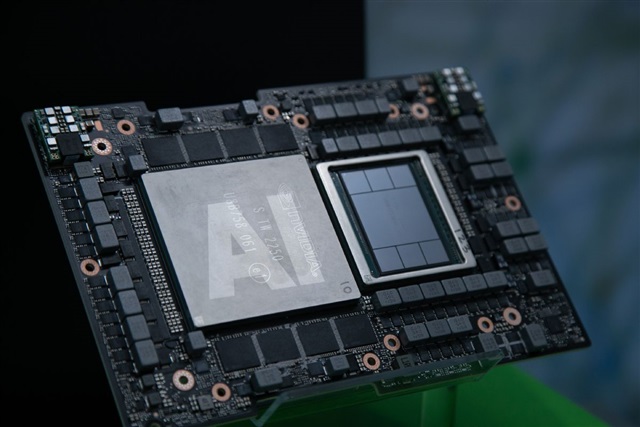

Some argue that as AI models become more efficient, the need for high-performance chip hardware may decline. The logic is simple: better algorithms and software could handle more complex tasks on existing hardware, reducing the pressure to keep upgrading physical components.

On the other side, some believe that more efficient AI models could actually spur demand for more advanced chips. Efficiency lowers the cost of running AI, which can broaden adoption. As capabilities expand, applications and use cases multiply, and the new, compute-intensive workloads they create may require more sophisticated hardware.

Whatever the outcome, one thing is clear: the relationship between AI and its hardware requirements is evolving as quickly as the technology itself. How this debate resolves is likely to shape the direction of AI development and the tech industry's trajectory as it tries to balance innovation with practicality.