As AI inference workloads surge and modern models continue to balloon in size, the pressure on data centers is intensifying—especially around memory speed, bandwidth, and power efficiency. To meet that demand, Samsung Electronics and SK Hynix are accelerating the development of next-generation high-bandwidth memory (HBM) while also pushing a major shift in how memory is used: integrating processing-in-memory (PIM) features that bring computation closer to where data actually lives.

The problem is simple but costly. Many AI tasks aren’t limited by raw compute anymore. They’re increasingly constrained by how quickly massive volumes of data can move between processors and memory. That constant back-and-forth creates bottlenecks, adds latency, and burns power—pain points that grow worse as inference becomes more widespread across cloud services, enterprise systems, and edge deployments.

That’s where HBM and PIM come in.

HBM has become foundational for AI accelerators because it delivers extremely high memory bandwidth and improves efficiency compared to older approaches. It’s designed to keep GPUs and AI chips fed with data at the pace modern machine learning workloads require. As generative AI and recommendation engines expand, faster and denser HBM stacks are becoming a critical building block for competitive AI hardware platforms.

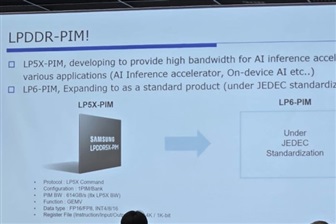

PIM aims to tackle an adjacent challenge: reducing unnecessary data movement. Instead of sending data from memory to a processor for every operation, PIM enables certain computations to be performed inside the memory itself. By doing more work where the data is stored, PIM can cut bandwidth strain, reduce latency, and potentially lower power consumption—benefits that matter greatly for always-on inference and large-scale AI services.

Samsung Electronics and SK Hynix are aligning these two approaches by continuing to push advanced HBM while integrating PIM-style capabilities that can better support real-world AI inference needs. The direction is clear: the next leap in AI performance won’t come from compute alone, but from smarter memory architectures that keep pace with model growth and the exploding demand for inference efficiency.

As AI moves from experimentation to everyday deployment, memory innovation is emerging as one of the biggest competitive differentiators in the hardware stack. With HBM evolving rapidly and PIM gaining momentum as a practical method to reduce bottlenecks, the race is on to build memory systems that can power the next wave of AI applications—faster, cooler, and at lower cost per inference.