OpenAI is doubling down on AI compute at an unprecedented scale, entering a sweeping partnership with NVIDIA that points to a $100 billion buildout of next-generation infrastructure. The plan centers on multi-gigawatt deployments of NVIDIA systems, with OpenAI targeting at least 10 gigawatts of capacity to power its upcoming AI stack.

At the heart of the collaboration is NVIDIA’s Vera Rubin platform. OpenAI is expected to procure Rubin-based AI clusters, including NVL144 configurations and the newer Rubin CPX platform, positioning the company among a select group with early access to one of the most powerful AI compute platforms on the horizon. It’s a strategic move designed to secure massive scale, tighter integration, and a competitive edge for training and serving advanced models.

The implied scale is staggering. Based on power consumption alone, the deal could translate into as many as 40,000 Rubin AI racks for OpenAI once deployments ramp, a remarkable figure for hardware that hasn’t yet reached the market. NVIDIA’s Rubin lineup is slated for volume production in the second half of 2026, which coincides with the next wave of data center expansions that industry heavyweights are preparing.
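To show where a rack count like that comes from, here is a minimal back-of-envelope sketch. It uses only the two figures quoted above (10 gigawatts and 40,000 racks); the function names are illustrative. The per-rack number it produces is an all-in average implied by those figures, covering cooling and other facility overhead, and is not a published NVIDIA rack specification.

```python
# Back-of-envelope check of the rack estimate.
# Inputs come from the article; the helper names are hypothetical.

TOTAL_POWER_GW = 10      # capacity target quoted in the article
RACK_COUNT = 40_000      # upper-bound rack estimate quoted in the article

KW_PER_GW = 1_000_000    # 1 gigawatt = 1,000,000 kilowatts


def implied_kw_per_rack(total_gw: float, racks: int) -> float:
    """Average power budget per rack, in kilowatts, if the whole
    capacity were spread evenly across the racks."""
    return total_gw * KW_PER_GW / racks


def racks_for_budget(total_gw: float, kw_per_rack: float) -> int:
    """Whole number of racks that fit in a power budget at a given
    all-in per-rack draw (an assumed input, not a vendor figure)."""
    return int(total_gw * KW_PER_GW // kw_per_rack)


if __name__ == "__main__":
    per_rack = implied_kw_per_rack(TOTAL_POWER_GW, RACK_COUNT)
    print(f"Implied average: {per_rack:.0f} kW per rack")
    print(f"Racks at that draw: {racks_for_budget(TOTAL_POWER_GW, per_rack)}")
```

In other words, the 40,000-rack ceiling corresponds to an average of about 250 kW per rack. If the real all-in draw per rack turns out higher, the same 10 gigawatts supports fewer racks, which is why the estimate is framed as an upper bound.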

This partnership underscores how rapidly the AI infrastructure race is accelerating. NVIDIA has been stitching together multibillion-dollar agreements to expand global compute capacity, and this latest commitment signals a push to deliver end-to-end platforms tuned for extreme training and inference workloads. From the first DGX supercomputers to the breakout of ChatGPT, the two companies have continually pushed each other forward; the 10-gigawatt milestone is set to power a new phase of AI capabilities.

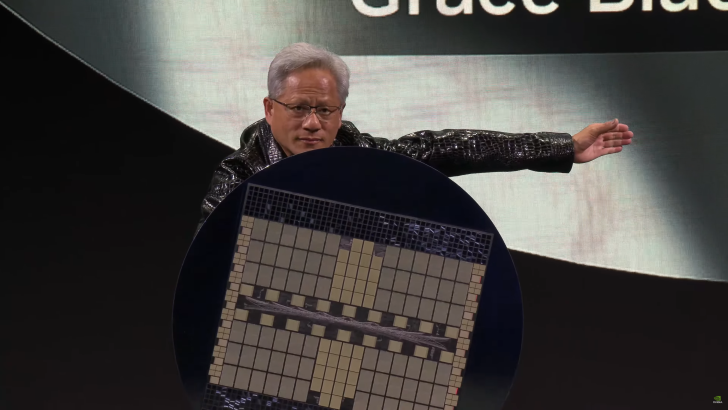

Why Rubin matters: it is positioned as a generational leap over Blackwell, with major gains in performance, efficiency, and scalability for hyperscale data centers. For OpenAI, standardizing on Rubin AI racks could streamline deployment, accelerate model training cycles, and reduce total cost of ownership at scale, all key advantages when pushing the frontier of large-scale AI systems.

If executed on schedule, the OpenAI–NVIDIA buildout would reshape the AI compute landscape, concentrate cutting-edge capacity in a few highly optimized clusters, and set a new bar for what it takes to train and serve the next wave of frontier models. All eyes now turn to the 2026 production timeline, and to how quickly this multi-gigawatt vision can be turned into real, operational AI capacity.