NVIDIA’s upcoming Vera Rubin AI server platform could become one of the biggest new drivers of SSD demand in the data center world, with analysts warning it may even contribute to a NAND flash supply shock over the next few years.

The pressure point is a growing bottleneck in “agentic AI” environments. As AI systems handle more complex queries and longer workflows, they generate large volumes of temporary state to maintain context. This working set is commonly referred to as the KV (key-value) cache. Right now, much of that data lives in high-bandwidth memory (HBM), but AI clusters are scaling so quickly that onboard HBM alone can’t provide the capacity that next-generation inference workloads need.
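To get a feel for why the KV cache outgrows HBM, here is a rough sizing sketch in Python. It uses the standard estimate for a transformer's KV cache (one key and one value tensor per layer, per token). The model dimensions below are hypothetical placeholders chosen for illustration; they are not from NVIDIA, Citi, or any specific Vera Rubin workload.

```python
def kv_cache_bytes(n_layers, n_kv_heads, head_dim, seq_len,
                   batch_size=1, bytes_per_elem=2):
    """Approximate KV cache size in bytes.

    The leading factor of 2 accounts for storing both keys and values.
    bytes_per_elem=2 assumes 16-bit (FP16/BF16) cache entries.
    """
    return (2 * n_layers * n_kv_heads * head_dim
            * seq_len * batch_size * bytes_per_elem)


# Hypothetical model shape (an assumption for illustration only).
N_LAYERS = 80
N_KV_HEADS = 8
HEAD_DIM = 128

per_token = kv_cache_bytes(N_LAYERS, N_KV_HEADS, HEAD_DIM, seq_len=1)
long_context = kv_cache_bytes(N_LAYERS, N_KV_HEADS, HEAD_DIM, seq_len=131_072)

print(f"Per token:          {per_token / 1024:.0f} KiB")
print(f"One 128K-token run: {long_context / 2**30:.0f} GiB")
```

Under these assumed dimensions, a single 128K-token session already needs tens of gibibytes of cache, and the figure scales linearly with the number of concurrent sessions. That is the kind of growth that pushes context data out of HBM and toward a larger, slower tier.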

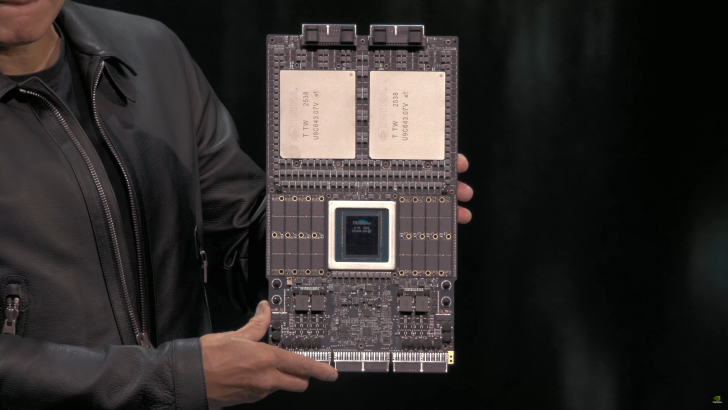

To address that, NVIDIA introduced a new approach at CES 2026: BlueField-4 DPUs tied to a dedicated storage layer called Inference Context Memory Storage (ICMS). The idea is to expand the available “context memory” pool by leaning on fast SSD storage, which can help improve throughput and keep inference pipelines fed. The catch is that shifting large chunks of AI context to SSDs doesn’t just change server design; it could reshape the entire NAND supply-and-demand picture.

According to Citi’s estimates cited in the post, each Vera Rubin NVL72 rack system could require about 1,152TB of additional SSD NAND to support ICMS: roughly 16TB of NAND per GPU across the 72 GPUs in a rack. If Vera Rubin shipments reach around 30,000 units in 2026 and scale to 100,000 units in 2027, the resulting NAND demand could surge dramatically. At 30,000 units, ICMS alone would call for about 34.6 million terabytes; at the 100,000-unit level, the math points to 115.2 million terabytes, or about 9.3% of projected global NAND demand in the coming years.
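The arithmetic behind those figures is easy to reproduce. The short Python sketch below uses only the numbers quoted above (16TB per GPU, 72 GPUs per NVL72 system, and the two shipment scenarios). The implied total market size at the end is a back-of-the-envelope derivation from the 9.3% share, not a figure stated by Citi.

```python
TB_PER_GPU = 16          # NAND per GPU for ICMS, per the Citi estimate
GPUS_PER_SYSTEM = 72     # GPUs in one NVL72 configuration


def icms_nand_tb(units):
    """Total ICMS-driven NAND demand in TB for a given number of systems."""
    return units * GPUS_PER_SYSTEM * TB_PER_GPU


per_system_tb = icms_nand_tb(1)
demand_2026_tb = icms_nand_tb(30_000)
demand_2027_tb = icms_nand_tb(100_000)

# Derived, not quoted: if 115.2M TB is 9.3% of demand, the implied total is:
SHARE_2027 = 0.093
implied_global_tb = demand_2027_tb / SHARE_2027

print(f"Per system:      {per_system_tb:,} TB")
print(f"30,000 units:    {demand_2026_tb / 1e6:.2f} million TB")
print(f"100,000 units:   {demand_2027_tb / 1e6:.1f} million TB")
print(f"Implied global:  {implied_global_tb / 1e6:,.0f} million TB")
```

The per-system result matches the 1,152TB figure, and the 100,000-unit scenario matches 115.2 million TB. The implied global total works out to roughly 1.24 billion TB, which should be read as a sanity check on the quoted share rather than an independent forecast.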

That’s a massive number, especially because the NAND market is already being pulled in several directions at once. Ongoing data center expansion, the broader inference boom, and the race to build larger AI clusters are all increasing SSD consumption. If NVIDIA’s ICMS architecture becomes a standard requirement for competitive agentic AI deployments, it could push storage vendors and supply chains into a tighter position than many have planned for.

The concern is that NAND could begin to resemble today’s DRAM situation, where AI demand pressures availability and pricing. AI builders are unlikely to slow down, since better compute capability and faster inference throughput translate directly into business advantage. If supply tightens, it may not stay confined to hyperscalers and enterprise buyers either—everyday consumers could eventually feel it through higher SSD prices and reduced availability of general-purpose storage products.

In short, NVIDIA’s move to expand inference context capacity beyond HBM with ICMS could be a meaningful leap for next-gen AI performance, but it also risks absorbing a surprisingly large slice of global NAND output—enough to rattle the SSD market if supply can’t ramp fast enough.