Nvidia has officially revealed Rubin, its next-generation AI computing architecture designed to make running and training AI models dramatically cheaper than today’s top-tier systems. The big headline: Rubin aims to cut AI “token” costs by up to 10x compared with Nvidia’s current Blackwell generation, while also shortening training time and reducing the amount of hardware needed to reach the same results.

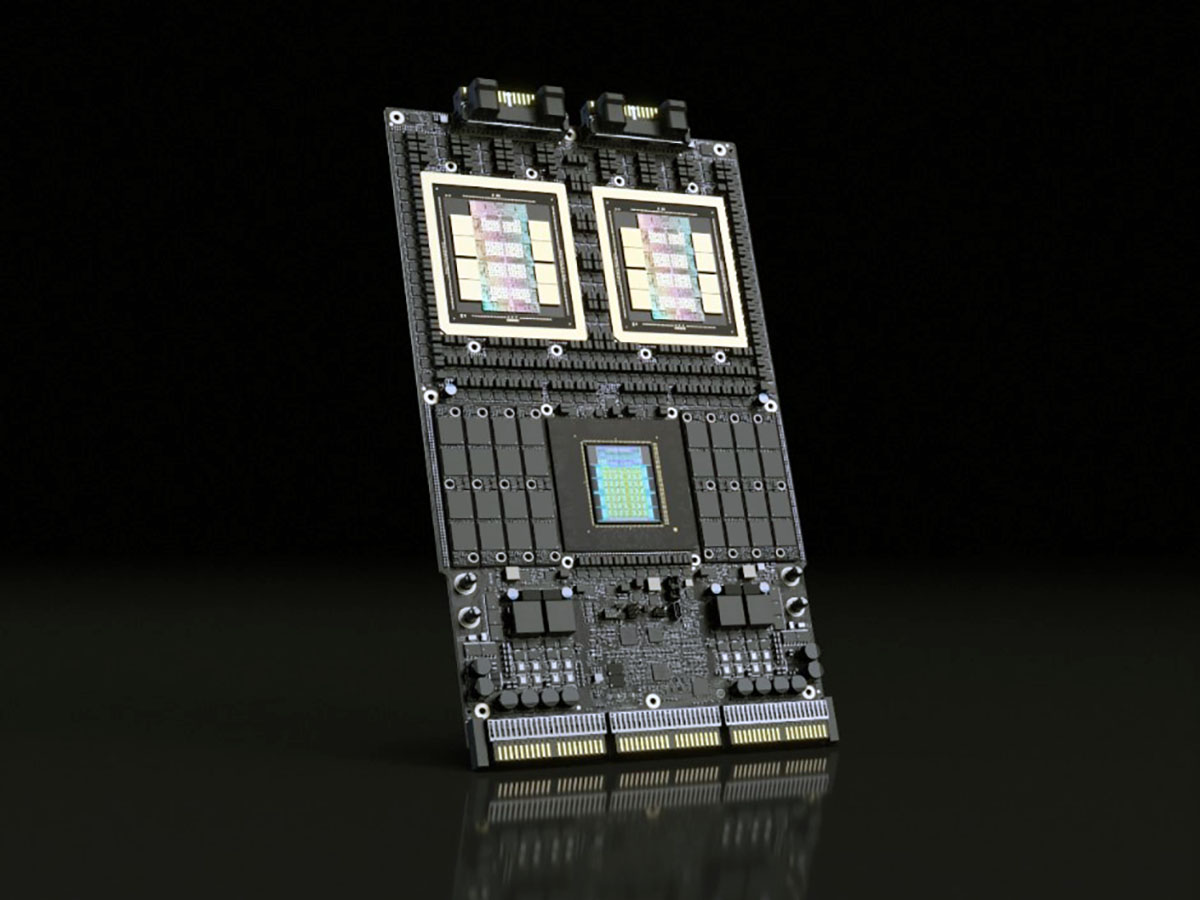

Rubin is built as a tightly integrated platform rather than a single chip upgrade. Nvidia says the architecture is “codesigned” across six major subsystems that are meant to work as one optimized stack: the Vera CPU, the new Rubin GPU, a third-generation NVLink 6 Switch, the ConnectX-9 SuperNIC, the BlueField-4 DPU, and the Spectrum-6 Ethernet Switch. These components are manufactured on advanced TSMC process nodes and include interface-level improvements focused on faster data movement, better synchronization, and lower overall cost per unit of AI work.

What makes Rubin especially notable is Nvidia’s claim that this deep co-optimization allows training large models using only a quarter of the GPUs required by comparable Blackwell-based setups. If that holds up in real deployments, it’s a major shift for organizations where hardware availability, datacenter power limits, and AI operating costs are now the biggest constraints—not just raw performance.

Cost efficiency is also the battleground where global AI competition is intensifying. China has highlighted low token pricing by combining open models and large clusters of midrange accelerators. Rubin appears positioned as Nvidia’s answer not only to performance pressure, but to the economics of inference at scale—where the highest costs often show up after a model is already trained and needs to serve millions (or billions) of requests.

Even high-profile AI leaders are paying attention. Elon Musk described Rubin as a “rocket engine for AI,” pointing to its potential to help deploy edge AI models at scale. He has also talked about a similar tenfold token-cost reduction target with Tesla’s next-generation AI hardware, though that system isn’t expected to reach mass production until next year.

One of the most intriguing parts of Rubin isn’t the GPU—it’s the new Nvidia Vera CPU. Nvidia positions Vera as a processor “engineered for data movement and agentic reasoning across accelerated systems,” and it includes full confidential computing support. Vera can be paired with Nvidia GPUs for tightly coupled acceleration, or it can run standalone to handle analytics, cloud orchestration, storage, and high-performance computing workloads, with full Arm compatibility.

On the specifications side, the Vera CPU includes 88 custom cores and delivers up to 1.2 TB/s of LPDDR5X memory bandwidth, with an emphasis on a relatively frugal power profile. Another key piece is NVLink-C2C integration, which enables synchronized CPU–GPU memory access. This is part of the broader set of platform optimizations Nvidia says helps Rubin deliver an order-of-magnitude efficiency jump over its predecessor.

With Rubin, Nvidia is clearly signaling that the next phase of AI isn’t only about building bigger models—it’s about making them practical to train and affordable to run. If Rubin delivers on the promise of drastically lower token costs and fewer GPUs needed per training run, it could reshape how enterprises, cloud providers, and AI developers budget for scaling AI in 2026 and beyond.