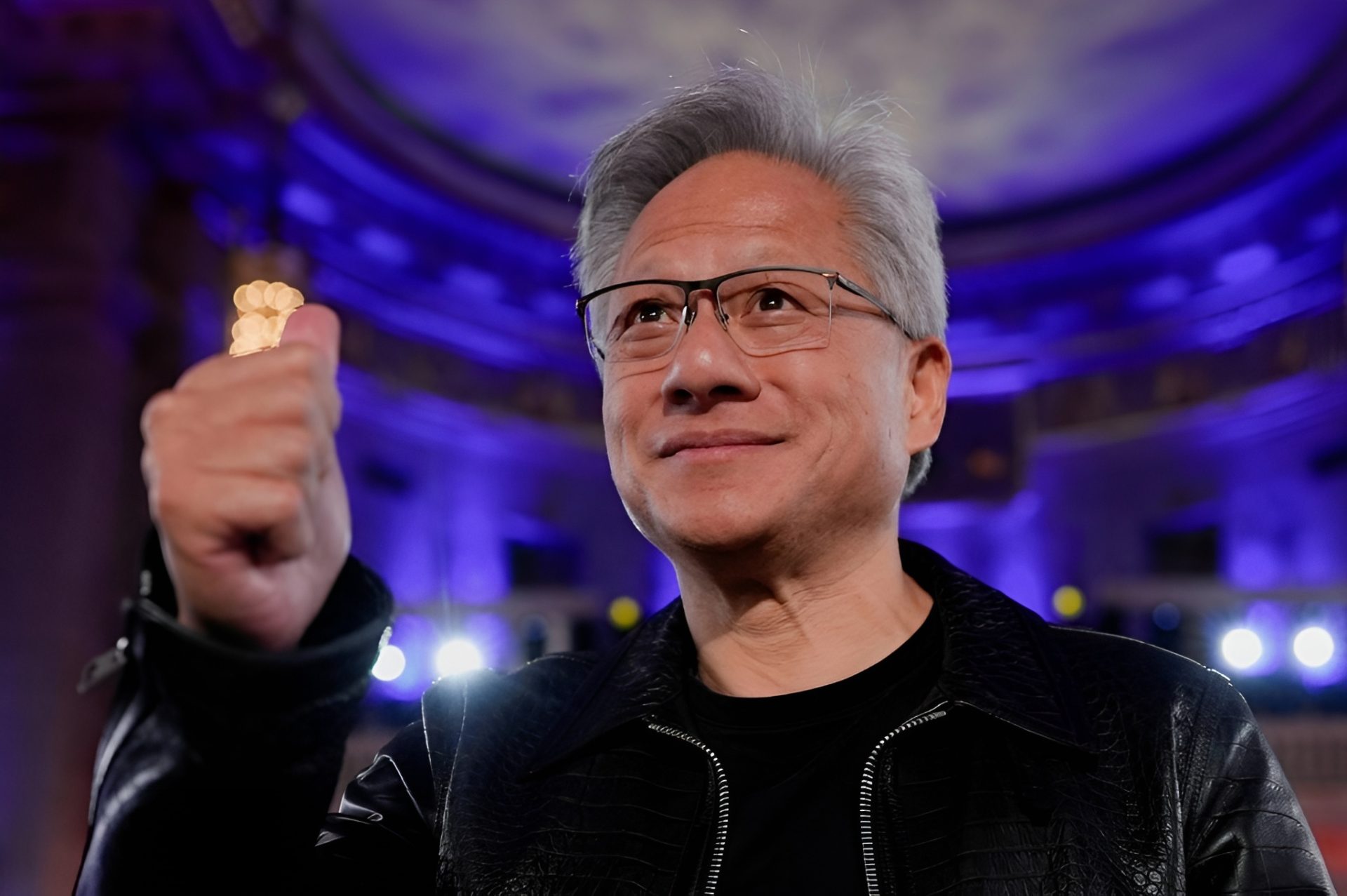

NVIDIA’s dominance in today’s AI boom isn’t just about having the most in-demand GPUs. It’s also about how the company, led by CEO Jensen Huang, is shaping the wider artificial intelligence ecosystem through aggressive investments, strategic partnerships, and customer-backed deals that help lock in long-term demand for NVIDIA hardware.

Right now, demand for AI compute is coming from every direction. Major AI labs building large language models need massive training capacity, hyperscalers are racing to add AI features across their platforms, and fast-growing “neocloud” providers are buying GPUs at scale to rent out to businesses that can’t secure enough capacity on their own. This nonstop appetite for AI chips has turned NVIDIA into one of the most profitable companies in the world, and the company has been using that rapidly expanding war chest to become something else as well: one of the most influential financiers in the AI industry.

The core idea behind NVIDIA’s investment strategy is straightforward. Huang has repeatedly framed these moves as “paving the way” for the AI revolution, not only in model training but also in the technologies that make modern AI infrastructure possible. That includes everything from next-gen inference performance to networking, optics, and the broader compute stack that supports deploying AI in real-world products. In other words, NVIDIA’s reach isn’t limited to what happens inside a server rack. It increasingly extends to the companies building the industry around those racks.

A particularly telling example involves Groq. As AI products expanded, the market quickly shifted from a training-only mindset to one where fast inference matters just as much, and sometimes more. AI labs began pushing for quicker responses and better real-time performance, creating demand for inference solutions that a “standalone” NVIDIA-only setup was not always best suited to deliver. As discussions took shape around how to meet this demand, NVIDIA moved quickly to secure a deal that strengthened its position in inference.

That Groq agreement became one of NVIDIA’s biggest strategic moves in this area. It has also been described as a major step in NVIDIA’s push into inference platforms, setting the stage for what industry watchers view as a more complete approach to serving both training and inference workloads. The move reflects a broader pattern: NVIDIA doesn’t just follow where the AI market goes. It tries to get there first, especially when new workloads appear and competition heats up.

Another major pillar of NVIDIA’s strategy is its relationship with neoclouds, especially CoreWeave. These providers have become essential players in the AI economy by supplying on-demand GPU capacity to startups, mid-sized companies, and even larger enterprises trying to scale quickly. CoreWeave, in particular, has grown into NVIDIA’s most significant neocloud partner, backed by a series of large financial commitments.

The relationship isn’t limited to simple hardware sales. Recent deals include a $2 billion investment, and earlier arrangements included a $6.3 billion buyback-style compute agreement designed to reduce risk if CoreWeave is unable to lease its GPU capacity to customers by 2032. Deals like these can make it easier for partners to keep buying and deploying NVIDIA hardware, but they can also create powerful incentives that steer future purchasing decisions. The report suggests this dynamic can foster a sense of obligation, potentially making partners less willing to adopt alternatives from competing chipmakers.

Taken together, these alliances and investments add a less-discussed layer to NVIDIA’s AI story. The company is not only the world’s most sought-after AI compute supplier. It is also using capital, partnerships, and customer-support mechanisms to influence how AI infrastructure demand grows and how the next generation of AI models, both open and closed, gets built and deployed.

If current trends hold, the scale and structure of these deals could play a meaningful role in determining which platforms win the next phase of AI—especially as inference expands, AI deployments move closer to real-time user experiences, and the industry’s infrastructure choices become harder to reverse.