ASIC-based accelerators are often touted as the looming threat to NVIDIA’s AI stronghold. Yet the company appears to have the playbook—and the pace—to keep that threat in check.

In the AI hardware world, ASICs are custom chips built to do one job exceptionally well. Tech giants like Meta, Amazon, and Google are investing heavily in their own silicon to tailor compute to their needs and reduce reliance on third-party suppliers. On paper, that’s a direct challenge to NVIDIA. In practice, NVIDIA’s rapid-fire roadmap and expanding ecosystem are making it hard for rivals to catch up.

The biggest differentiator is cadence. NVIDIA refreshes its AI product lineup on a roughly six- to eight-month cycle, while many competitors move annually. That velocity lets NVIDIA align hardware with fast-shifting customer demands, especially as the market pivots from pure training to massive-scale inference. It also undercuts the case for narrowly focused ASICs, which risk arriving after the requirements they were designed for have already changed.

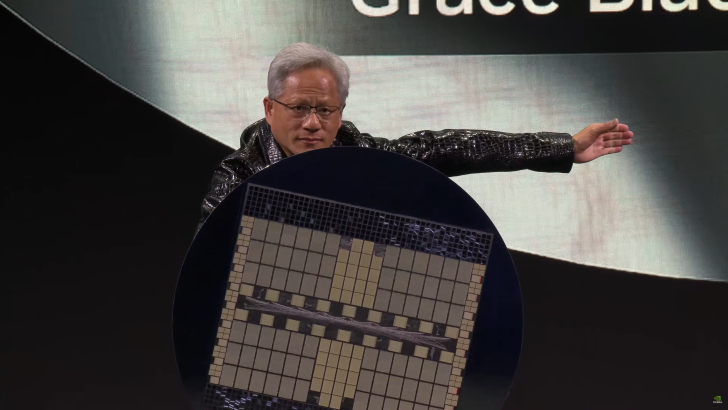

Consider Rubin CPX, a surprise addition that zeroes in on inference efficiency at a moment when inference is exploding across data centers. NVIDIA is also narrowing the gap between generations: the window between Blackwell Ultra and Rubin is expected to be around eight months. That relentless rhythm keeps customers on a clear, predictable upgrade path and compresses the window for alternative solutions to gain traction.

At the same time, NVIDIA is bolstering its moat through ecosystem strategy. NVLink Fusion is designed to make custom silicon from partners such as Intel and Samsung interoperable with NVIDIA platforms, bringing third-party chips into the fold rather than pushing them to the fringes. Add in high-profile partnerships—such as work with Intel and OpenAI—and the result is an AI stack that feels less like a product and more like critical infrastructure.

The business case is where NVIDIA leans hardest. As Jensen Huang has argued, even if a competitor priced chips at zero, the total cost of operating the system—think land, power, networking, and infrastructure—still favors NVIDIA when measured by performance per watt, per dollar, and per square foot. In other words, the economics of throughput and efficiency matter more than sticker price in AI-scale deployments.
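To make the arithmetic behind that argument concrete, here is a minimal sketch of a power-limited data center. Every number in it is an illustrative placeholder in arbitrary units, not vendor data, and the `Deployment` class and `break_even_perf_per_watt` helper are hypothetical names introduced only for this example. It assumes both systems draw the same fixed power budget and carry the same facility cost, which is a simplification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Deployment:
    """A toy cost model for one accelerator deployment (all units arbitrary)."""
    name: str
    chip_cost: float       # what the accelerators cost over the period
    facility_cost: float   # land, power, networking, buildings over the period
    power_budget: float    # power the site can deliver (the hard limit)
    perf_per_watt: float   # useful work delivered per unit of power

    def throughput(self) -> float:
        # In a power-limited site, output scales with efficiency, not chip count.
        return self.power_budget * self.perf_per_watt

    def cost_per_unit(self) -> float:
        # Total cost of ownership divided by the work actually delivered.
        return (self.chip_cost + self.facility_cost) / self.throughput()


def break_even_perf_per_watt(incumbent: Deployment) -> float:
    """Efficiency a zero-cost chip needs to match the incumbent's cost per unit,
    assuming the same facility cost and power budget."""
    return incumbent.facility_cost / (incumbent.power_budget * incumbent.cost_per_unit())


# Hypothetical figures chosen only to illustrate the shape of the argument.
paid = Deployment("paid, efficient", chip_cost=40.0, facility_cost=60.0,
                  power_budget=1.0, perf_per_watt=3.0)
free = Deployment("free, less efficient", chip_cost=0.0, facility_cost=60.0,
                  power_budget=1.0, perf_per_watt=1.0)

for d in (paid, free):
    print(f"{d.name}: cost per unit of work = {d.cost_per_unit():.2f}")
print(f"free chip breaks even at perf/watt = {break_even_perf_per_watt(paid):.2f}")
```

Under these made-up inputs, the free chip still costs more per unit of work because the facility bill is spread over less output. The conclusion flips as soon as the rival's efficiency passes the break-even point, so the claim rests entirely on the real performance-per-watt gap, which this sketch does not measure.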

None of this means the competition isn’t real. Amazon’s Trainium, Google’s TPUs (including the Ironwood generation), and Meta’s MTIA are serious efforts with clear momentum. More choice benefits the entire AI ecosystem, and innovation across training and inference will only accelerate from here. But for now, NVIDIA’s mix of rapid iteration, inference-focused silicon like Rubin CPX, interconnect technology such as NVLink Fusion, and ecosystem partnerships leaves the company well positioned on both defense and offense.

Bottom line: ASICs will keep pushing the boundaries, but NVIDIA’s speed, scalability, and total-cost-of-ownership story continue to set the pace for AI computing.