Meta is making it clear that its push into custom AI chips is not slowing down. In fact, the company’s newest updates show it’s doubling down on ASIC development under its Meta Training and Inference Accelerator (MTIA) program, with a major emphasis on inference-class performance for generative AI.

That strategy reflects a bigger trend across hyperscalers: demand for AI compute has grown so fast that relying solely on off-the-shelf GPUs is no longer enough. Building in-house silicon tailored to specific internal workloads can unlock major efficiency and performance gains, and Meta is positioning MTIA as a long-term pillar of its infrastructure.

Meta says its MTIA roadmap is progressing on schedule, at one of the most aggressive development cadences in the industry. The plan is to roll out four new MTIA chips within the next two years, each tuned for different AI tasks ranging from recommendation training to large-scale generative AI inference. Rather than betting on a one-size-fits-all accelerator, Meta is splitting the roadmap into specialized parts so each generation targets a clear workload and bottleneck.

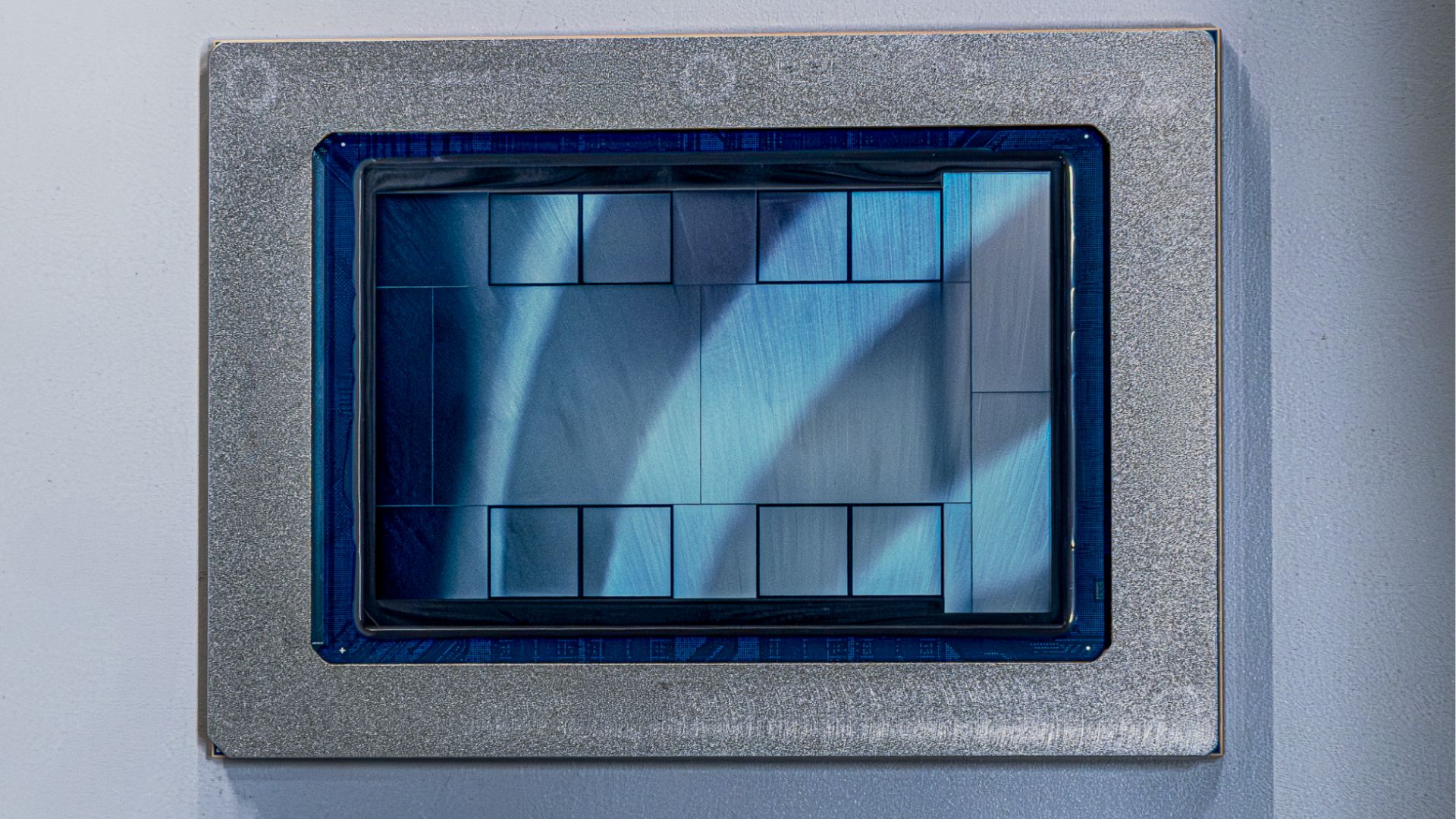

The MTIA 300 is built primarily for ranking and recommendation training. To support that focus, it leans into scale-out networking at 200 GB/s. The module combines one compute chiplet with two network chiplets, and it’s paired with multiple HBM stacks delivering 216 GB of memory capacity and about 6.1 TB/s of bandwidth. Meta describes this chip as the foundation that enabled the next step up.

That next step is MTIA 400, where Meta’s message is straightforward: more raw performance. Compared with the previous generation, MTIA 400 brings a large jump in key AI metrics, including roughly 400% higher FP8 throughput and about 51% more HBM bandwidth. It’s also designed around a 72-chip scale-up configuration connected through a switched backplane, a sign Meta is pushing hard into large, tightly connected accelerator domains. Meta indicates MTIA 400 is already moving toward deployment, suggesting internal confidence in its competitiveness.

The most attention-grabbing entries on the roadmap are MTIA 450 and MTIA 500, because they go directly after the growing demand for generative AI inference. Instead of simply chasing compute, these accelerators focus heavily on HBM capacity and HBM bandwidth, two of the most important levers for serving large language models and other GenAI systems efficiently at scale.

Across the roadmap, Meta also highlights why it believes it can keep shipping chips quickly: a modular chiplet approach. By swapping individual chiplets generation to generation, Meta can upgrade performance and capabilities without repeatedly redesigning the entire platform from scratch. That kind of reuse helps maintain a fast product cadence and reduces disruption to the broader data center infrastructure.

Here’s how the roadmap stacks up based on the disclosed figures:

MTIA 300: Focused on ranking and recommendation training, with an 800 W module TDP, 216 GB HBM, and 6.1 TB/s HBM bandwidth. FP8/MX8 performance is listed at 1.2 PFLOPs and BF16 at 0.6 PFLOPs. Scale-up domain size is 16, with 1 TB/s scale-up networking and 200 GB/s scale-out networking.

MTIA 400: Positioned as a general accelerator, moving to 1200 W module TDP, 288 GB HBM, and 9.2 TB/s bandwidth. It reaches 6 PFLOPs FP8/MX8 and 3 PFLOPs BF16, with 12 PFLOPs in MX4. It supports a 72-chip scale-up domain, with 1.2 TB/s scale-up networking and 100 GB/s scale-out.

MTIA 450: A generative AI inference-focused part at 1400 W, still with 288 GB HBM but a major bandwidth jump to 18.4 TB/s. It’s listed at 7 PFLOPs FP8/MX8, 3.5 PFLOPs BF16, and 21 PFLOPs MX4. Scale-up remains 72 chips with 1.2 TB/s scale-up and 100 GB/s scale-out.

MTIA 500: Also geared toward GenAI inference, pushing to 1700 W and scaling HBM up to 384–512 GB, with bandwidth reaching 27.6 TB/s. Performance figures rise to 10 PFLOPs FP8/MX8, 5 PFLOPs BF16, and 30 PFLOPs MX4. It keeps the 72-chip scale-up design, along with 1.2 TB/s scale-up and 100 GB/s scale-out bandwidth.
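To make the generation-over-generation jumps easier to check, here is a minimal Python sketch that recomputes the percentage gains from the figures listed above. The `SPECS` table and the helper names `pct_increase` and `generational_gains` are illustrative and not anything Meta has published; only the numbers come from the disclosed roadmap.

```python
# Per-module figures copied from the roadmap summary above.
# The dictionary layout and key names are illustrative assumptions.
SPECS = {
    "MTIA 300": {"tdp_w": 800, "hbm_tbps": 6.1, "fp8_pflops": 1.2},
    "MTIA 400": {"tdp_w": 1200, "hbm_tbps": 9.2, "fp8_pflops": 6.0},
    "MTIA 450": {"tdp_w": 1400, "hbm_tbps": 18.4, "fp8_pflops": 7.0},
    "MTIA 500": {"tdp_w": 1700, "hbm_tbps": 27.6, "fp8_pflops": 10.0},
}


def pct_increase(old: float, new: float) -> float:
    """Return the percentage increase going from old to new."""
    return (new - old) / old * 100.0


def generational_gains(specs: dict) -> dict:
    """Compare each chip with the one listed immediately before it."""
    names = list(specs)
    gains = {}
    for prev, cur in zip(names, names[1:]):
        gains[(prev, cur)] = {
            metric: pct_increase(specs[prev][metric], specs[cur][metric])
            for metric in specs[cur]
        }
    return gains


if __name__ == "__main__":
    for (prev, cur), metrics in generational_gains(SPECS).items():
        summary = ", ".join(f"{m} {v:+.0f}%" for m, v in metrics.items())
        print(f"{prev} -> {cur}: {summary}")
```

Run against these figures, the MTIA 300 to MTIA 400 step works out to a 400% FP8 increase and roughly 51% more HBM bandwidth, matching the claims earlier in the article. The later steps are dominated by bandwidth: it doubles from MTIA 400 to MTIA 450 and grows another 50% to MTIA 500, while FP8 throughput rises far more modestly.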

Meta’s broader point is that it expects to compete with commercially available AI accelerators by moving faster and by building hardware that matches its own workloads rather than adapting workloads to fit general-purpose GPUs. That inference-first direction, especially in MTIA 450 and MTIA 500, signals that Meta is preparing for the sustained cost and capacity pressure that comes with serving generative AI at massive scale.
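One rough way to see the inference-first shift in the numbers is to divide peak FP8 throughput by peak HBM bandwidth, which gives the FLOPs available per byte of memory traffic. The sketch below does that with the disclosed figures. The function name `flops_per_byte` is hypothetical, the inputs are peak values rather than measured ones, and reading a lower ratio as better suited to memory-bound LLM serving is a common rule of thumb, not a claim Meta has made.

```python
# Peak FP8 throughput (PFLOPs) and HBM bandwidth (TB/s) per module,
# copied from the disclosed roadmap figures. Layout is illustrative.
ROADMAP = {
    "MTIA 300": (1.2, 6.1),
    "MTIA 400": (6.0, 9.2),
    "MTIA 450": (7.0, 18.4),
    "MTIA 500": (10.0, 27.6),
}


def flops_per_byte(fp8_pflops: float, hbm_tbps: float) -> float:
    """Peak FP8 FLOPs available per byte moved from HBM."""
    return (fp8_pflops * 1e15) / (hbm_tbps * 1e12)


if __name__ == "__main__":
    for name, (pflops, tbps) in ROADMAP.items():
        print(f"{name}: {flops_per_byte(pflops, tbps):.0f} FLOPs/byte")
```

On these figures the ratio peaks at MTIA 400 (about 652 FLOPs per byte) and then falls to about 380 for MTIA 450 and about 362 for MTIA 500. In other words, the two inference parts add memory bandwidth faster than they add compute, which is consistent with the emphasis on HBM described earlier.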

While recent partnerships and outside speculation suggested Meta might step back from custom silicon, the company’s latest statements point the other way: it believes its engineering strategy is working and is committing to an accelerated rollout. Meta expects these MTIA generations to be deployed across 2026 and 2027, aiming to ease compute bottlenecks and expand AI capacity as demand continues to climb.