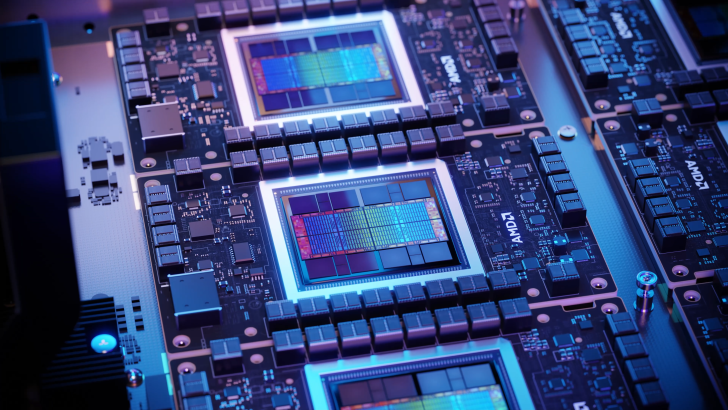

Celestial AI, a newcomer to the interconnect market, is drawing attention with an optical interconnect that pairs DDR5 with HBM memory and promises to improve chiplet efficiency. AMD is rumored to be among the first companies that could adopt the approach.

Celestial AI aims to overcome the limitations of traditional interconnects by building on silicon photonics, which combines laser technology with silicon, to create its Photonic Fabric solution. The company positions the technology as a significant step beyond existing interconnects such as NVIDIA’s NVLink, traditional Ethernet, and AMD’s Infinity Fabric, which are increasingly constrained in efficiency and scalability.

The company’s co-founder, Dave Lazovsky, said that Photonic Fabric has already generated considerable interest among potential clients. He pointed to Celestial AI’s successful $175 million funding round and to notable industry backing, including from AMD, as signs of how much impact the interconnect could have.

In terms of capability, first-generation Photonic Fabric could deliver 1.8 Tb/s per square millimeter, and the following generation is expected to quadruple that figure. HBM stacking, however, limits how much memory capacity can be packaged this way. To address this, Celestial AI suggests integrating DDR5 memory alongside the HBM stacks. The hybrid module would pair two HBM stacks, for a combined 72 GB, with four DDR5 DIMMs providing up to 2 TB of additional capacity. DDR5 also offers a better price-to-capacity ratio than HBM, which should lower the cost of the overall system.
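To make the arithmetic behind these figures concrete, here is a minimal Python sketch. It is an illustration only, not Celestial AI’s design: the `MemoryModule` class and `next_gen_density` function are hypothetical names, and the sketch assumes that capacity is split evenly across stacks and DIMMs and that 1 TB equals 1024 GB, neither of which the company has confirmed.

```python
from dataclasses import dataclass

# Assumption: binary units (1 TB = 1024 GB). The article does not say which
# convention the quoted "2 TB" figure uses.
GB_PER_TB = 1024


@dataclass(frozen=True)
class MemoryModule:
    """Hypothetical model of the hybrid HBM + DDR5 module described above."""

    hbm_stacks: int = 2
    hbm_total_gb: int = 72                  # combined HBM capacity quoted in the article
    ddr5_dimms: int = 4
    ddr5_max_total_gb: int = 2 * GB_PER_TB  # "up to 2 TB" across all DIMMs

    def hbm_per_stack_gb(self) -> float:
        # Assumes both stacks have the same capacity.
        return self.hbm_total_gb / self.hbm_stacks

    def ddr5_per_dimm_gb(self) -> float:
        # Assumes all DIMMs are the same size and the module is fully populated.
        return self.ddr5_max_total_gb / self.ddr5_dimms

    def total_gb(self) -> int:
        return self.hbm_total_gb + self.ddr5_max_total_gb

    def hbm_share(self) -> float:
        # Fraction of total capacity that sits in the fast HBM tier.
        return self.hbm_total_gb / self.total_gb()


def next_gen_density(first_gen_tbps_per_mm2: float = 1.8,
                     multiplier: float = 4.0) -> float:
    """Bandwidth density (Tb/s per mm^2) if the next generation quadruples the first."""
    return first_gen_tbps_per_mm2 * multiplier


if __name__ == "__main__":
    module = MemoryModule()
    print(f"HBM per stack:   {module.hbm_per_stack_gb():.0f} GB")
    print(f"DDR5 per DIMM:   {module.ddr5_per_dimm_gb():.0f} GB")
    print(f"Total capacity:  {module.total_gb()} GB")
    print(f"HBM share:       {module.hbm_share():.1%}")
    print(f"Next-gen density: {next_gen_density():.1f} Tb/s per mm^2")
```

Under these assumptions, HBM makes up only a few percent of the module’s total capacity, which illustrates why the design leans on DDR5 for bulk storage and reserves HBM for bandwidth-critical data.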

Photonic Fabric would serve as the connective backbone for this memory design, and Celestial AI pitches the approach as a cost-effective alternative to more complex architectures. The company expects its interconnect to become commercially available by 2027, however, and by then competition in silicon photonics will have intensified. Celestial AI will need to differentiate itself in a crowded market that heavyweights such as TSMC and Intel are also pursuing.

Combining high-bandwidth memory (HBM) with DDR5 over Photonic Fabric could reportedly cut power consumption by as much as 90%, an attractive prospect for industries that need high performance with lower energy use. The development could influence the design of next-generation chiplets, and AMD’s involvement could accelerate adoption across the market.

Celestial AI’s entry into the market will be one to watch. Its technology could have a significant effect on the efficiency, capacity, and power consumption of future computing systems, all of which matter more and more in a data-driven and energy-conscious world.