As next-generation AI server platforms move from pilot testing into large-scale deployment, a new pressure point is forming across the hardware supply chain. While high-bandwidth memory (HBM) has dominated headlines as the key limiter for AI server production, fresh industry analysis suggests the next wave of constraints is spreading into printed circuit boards (PCBs) and the specialized materials needed to build them.

The reason is simple: today’s AI servers are far more complex than conventional enterprise systems. They require advanced PCB designs to support massive data throughput, higher power delivery, denser component layouts, and strict signal integrity requirements. Those tighter specifications are harder to manufacture, so fewer production lines qualify and suppliers struggle to scale quickly, especially when demand ramps all at once.

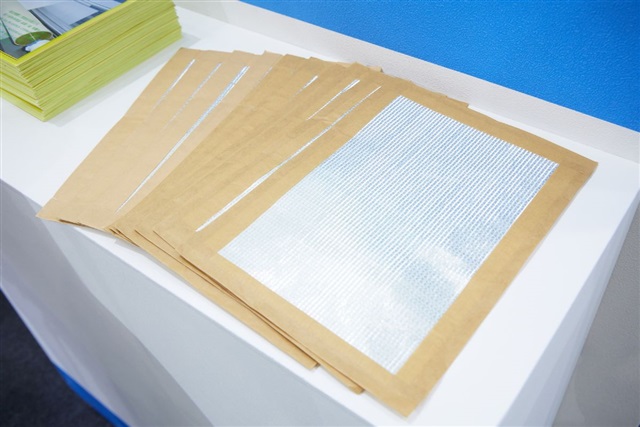

One of the most critical upstream pinch points is high-end fiberglass cloth, a foundational material used in advanced PCB laminates. As AI-related server orders accelerate, demand for premium fiberglass cloth is tightening supply further up the chain, creating ripple effects that can slow PCB output. Even if GPU availability and HBM supply improve, limited access to these high-grade PCB materials can still delay final server shipments.

For the market, this means AI server supply constraints are no longer a “single component” problem. Production timelines now depend on several interlocking factors: HBM allocation, advanced PCB capacity, and the availability of key raw materials that aren’t easily substituted. For data center operators, cloud providers, and enterprise buyers planning AI infrastructure rollouts, these bottlenecks could translate into longer lead times, tighter pricing, and more competition for production slots.

In the months ahead, expect AI server supply chain updates to focus not just on memory and accelerators, but also on PCB manufacturing capacity and upstream materials like high-end fiberglass cloth. As AI infrastructure continues scaling, the ability to secure these less-discussed components may become a major differentiator in who can deliver next-generation AI servers on schedule.