Nvidia’s push toward 800V high-voltage power supplies for AI servers signals a major shift in how cutting-edge data centers will be designed, powered, and scaled. With most top-tier power semiconductor makers now tied into the supply chain, the spotlight is on whether their technologies can deliver the efficiency, reliability, and power density this new era demands.

What 800V means for AI infrastructure

– Higher efficiency and lower losses: Raising the voltage lowers the current needed for the same power, which cuts I²R losses and enables leaner cabling and busbar designs.

– More power per rack: AI clusters need massive, steady power. 800V architectures open headroom for higher rack densities without ballooning copper and cooling costs.

– Streamlined distribution: High-voltage distribution reduces bottlenecks between the grid, power shelves, and server boards, improving overall power path efficiency and helping lower PUE.

– Better scalability: As model sizes and GPU counts grow, 800V power rails help operators scale without wholesale electrical overhauls.
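
To make the first point above concrete, here is a rough sketch of the arithmetic. The rack power and path resistance are hypothetical placeholders, not figures from Nvidia or any vendor, and the comparison assumes the same conductor at both voltages. In practice cabling is sized to the current, so part of the gain shows up as less copper rather than lower loss.

```python
# Illustrative only: rack power and path resistance are hypothetical values.

def bus_current_a(power_w: float, voltage_v: float) -> float:
    """Current needed to deliver power_w at voltage_v (I = P / V)."""
    return power_w / voltage_v


def conduction_loss_w(power_w: float, voltage_v: float, resistance_ohm: float) -> float:
    """Resistive loss in the distribution path (P_loss = I^2 * R)."""
    current = bus_current_a(power_w, voltage_v)
    return current ** 2 * resistance_ohm


RACK_POWER_W = 100_000.0      # hypothetical 100 kW rack
PATH_RESISTANCE_OHM = 0.001   # hypothetical 1 milliohm cable/busbar path

for bus_voltage_v in (48.0, 800.0):
    amps = bus_current_a(RACK_POWER_W, bus_voltage_v)
    loss = conduction_loss_w(RACK_POWER_W, bus_voltage_v, PATH_RESISTANCE_OHM)
    print(f"{bus_voltage_v:.0f} V bus: {amps:,.0f} A, {loss:,.1f} W lost in the path")
```

Because loss scales with the square of the current, moving from 48V to 800V at fixed power and fixed resistance reduces conduction loss by a factor of (800/48)², roughly 278.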

The GaN vs. SiC showdown

– Silicon carbide (SiC) shines at high voltage: Expect SiC devices to dominate front-end stages such as PFC and AC-DC conversion, where ruggedness, high breakdown voltage, and thermal robustness are critical.

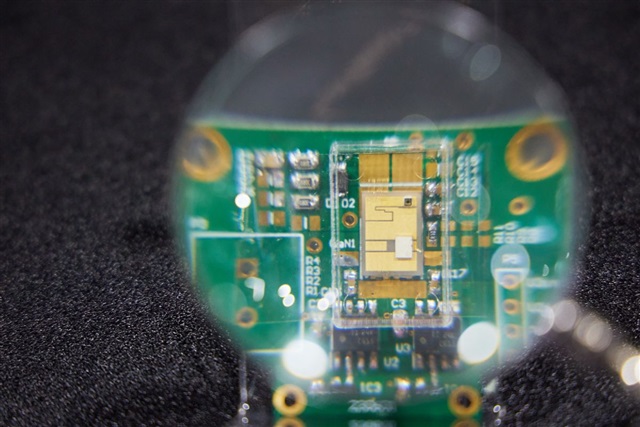

– Gallium nitride (GaN) drives density and speed: GaN’s fast switching and low losses make it ideal for high-frequency DC-DC stages, enabling smaller magnetics, tighter footprints, and superior transient response.

– Hybrid topologies win: Many designs will blend SiC at the HV front end with GaN in downstream converters to balance efficiency, size, thermals, and cost.

Why the industry is watching closely

– Efficiency at scale: Every fraction of a percent in conversion efficiency translates to major energy savings when multiplied across thousands of accelerators.

– Thermal management: Higher densities demand advanced cooling strategies, tighter airflow planning, and careful layout to avoid hot spots.

– Safety and compliance: 800V systems raise the bar for insulation, creepage/clearance, arc-flash mitigation, and interlock design, while meeting stringent global certifications.

– EMI and power quality: Fast-switching devices must deliver clean power with controlled harmonics, both for grid friendliness and for sensitive compute loads.

– Supply chain maturity: With nearly all leading power semiconductor players engaged, consistency, yield, and long-term availability will be under the microscope.
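
The "efficiency at scale" point can be sketched with a quick back-of-the-envelope calculation. The load size and both efficiency values below are assumptions chosen for illustration, and the model assumes a constant load all year, which real clusters will not match exactly.

```python
# Illustrative only: load and efficiency figures are hypothetical.

HOURS_PER_YEAR = 8760  # 365 days * 24 hours


def input_power_kw(load_kw: float, efficiency: float) -> float:
    """Power drawn upstream to deliver load_kw through a conversion chain."""
    if not 0.0 < efficiency <= 1.0:
        raise ValueError("efficiency must be in (0, 1]")
    return load_kw / efficiency


def annual_energy_saved_kwh(load_kw: float, eff_before: float, eff_after: float) -> float:
    """Energy saved per year when chain efficiency improves at constant load."""
    saved_kw = input_power_kw(load_kw, eff_before) - input_power_kw(load_kw, eff_after)
    return saved_kw * HOURS_PER_YEAR


LOAD_KW = 10_000.0   # hypothetical 10 MW of accelerator load
saved = annual_energy_saved_kwh(LOAD_KW, eff_before=0.95, eff_after=0.96)
print(f"One point of efficiency saves about {saved:,.0f} kWh per year")
```

Under these assumptions, a single percentage point of conversion efficiency is worth on the order of a gigawatt-hour per year, before counting the cooling energy no longer needed to remove that waste heat.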

What data center leaders should ask vendors

– End-to-end efficiency: What is the cumulative efficiency from AC input to point-of-load at realistic operating points?

– Thermal headroom: How are thermal margins maintained under full-load and transient conditions, and what cooling assumptions are required?

– Reliability metrics: What are MTBF figures, derating policies, and protections at 800V? How are soft-fault and hard-fault scenarios handled?

– Modularity and serviceability: Can power shelves, rectifiers, and converters be swapped with minimal downtime? How well do they integrate with existing monitoring and orchestration?

– Cost and TCO: Beyond CAPEX, what are the projected energy savings, maintenance profiles, and lifecycle costs relative to 48V or other architectures?

– Roadmap and capacity: Can suppliers support rapid scaling, multi-site deployments, and next-gen upgrades without redesigning the entire power path?
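
When comparing vendor answers to the end-to-end efficiency question, it helps to remember that stage efficiencies multiply. The sketch below assumes a simple three-stage chain. The stage names and values are hypothetical stand-ins, not measured data, and a real evaluation would use efficiency curves at the actual operating points.

```python
# Illustrative only: stage names and efficiency values are hypothetical.
import math


def chain_efficiency(stage_efficiencies: list[float]) -> float:
    """Cumulative efficiency of series-connected stages (product of each stage)."""
    for eff in stage_efficiencies:
        if not 0.0 < eff <= 1.0:
            raise ValueError(f"stage efficiency {eff} must be in (0, 1]")
    return math.prod(stage_efficiencies)


stages = {
    "AC-DC front end (e.g. SiC)": 0.98,
    "800V to intermediate bus DC-DC (e.g. GaN)": 0.98,
    "point-of-load regulator": 0.92,
}

total = chain_efficiency(list(stages.values()))
print(f"AC input to point-of-load: {total:.1%}")
```

The product is always lower than the weakest single stage, which is why a headline figure for one converter says little about the full power path.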

The bottom line

Nvidia’s endorsement of 800V power in AI servers is more than a specification change; it’s a blueprint for the next generation of high-performance computing infrastructure. The outcome will hinge on how effectively GaN and SiC technologies are combined to deliver the required efficiency, reliability, and density. If the supply chain meets the moment, 800V could become the default standard for hyperscale AI, cutting energy use per unit of compute, simplifying power distribution, and unlocking the capacity needed for ever-larger AI workloads.