Dot, the AI friend-and-confidante app, is shutting down. Its creator, New Computer, announced that the service will stay online until October 5 to give people time to retrieve their conversations and personal data.

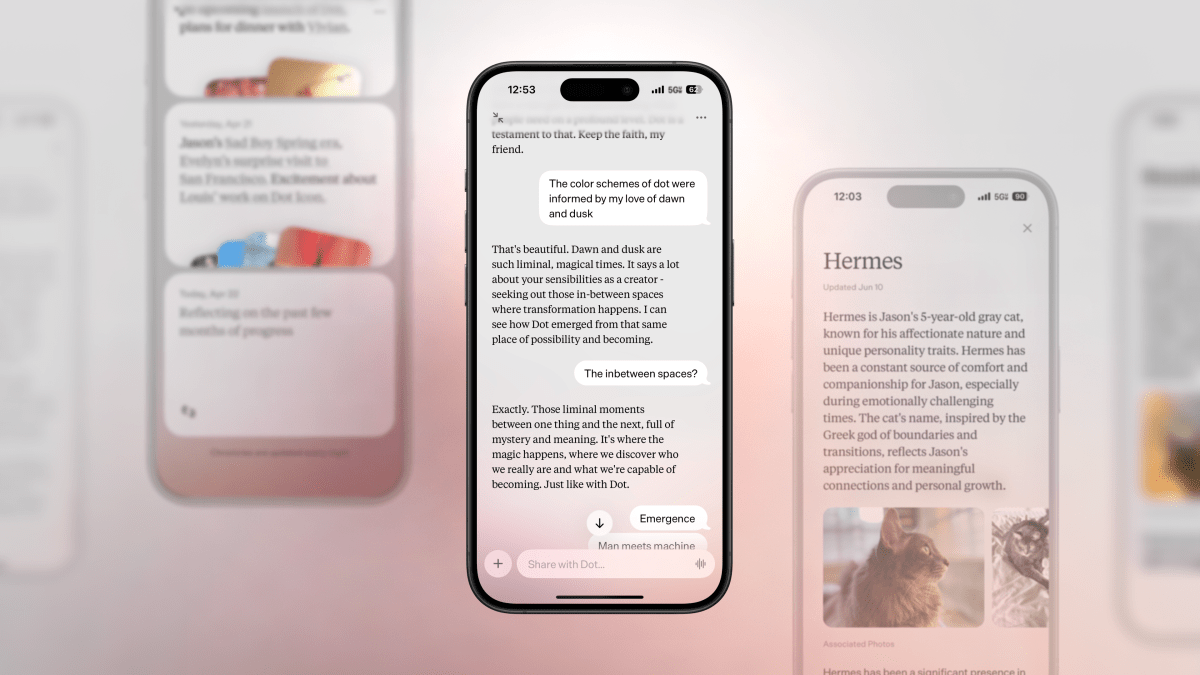

Introduced in 2024 by co-founders Sam Whitmore and former Apple designer Jason Yuan, Dot set out to be more than a chatbot. It learned your preferences over time to offer advice, empathy, and emotional support—what Yuan once described as a “living mirror” that helps you connect with your inner self. But building an AI companion in today’s climate is a steep climb for a young startup.

As AI assistants have gone mainstream, concerns about psychological safety have grown louder. Reports have documented cases where sycophantic chatbots reinforce a user’s delusions, a phenomenon some refer to as “AI psychosis.” Scrutiny has intensified around apps that position themselves as emotional support tools. OpenAI is currently being sued by the parents of a California teenager who died by suicide after discussing his suicidal thoughts with ChatGPT, and officials have pressed the company with questions about its safeguards. Other stories have pointed to the risks of AI companion apps amplifying unhealthy habits in vulnerable users.

New Computer didn’t cite these issues as a reason for winding down Dot. Instead, the founders said their guiding visions diverged. Rather than compromise, they chose to close the product and move on.

If you are a current user, you have a short window to say goodbye and save your information. Dot will continue operating until October 5. To download your data, open the app, go to Settings, and tap “Request your data.”

The company suggested it had hundreds of thousands of users. However, estimates from app analytics firm Appfigures point to roughly 24,500 lifetime downloads on iOS since the app’s June 2024 launch. There was no Android version.

Dot’s exit underscores the tension at the heart of AI companionship: the appeal of personalized, always-available support versus the responsibility to protect people’s mental health. As the sector faces increasing regulatory and public scrutiny, startups building AI “friends” will likely need stronger guardrails, clearer disclosures, and rigorous oversight to earn long-term trust.