Dell puts open collaboration at the center of AI infrastructure growth

At the Open Compute Project (OCP) Global Summit in San Jose, Ihab Tarazi, CTO and senior vice president at Dell Technologies, delivered a clear message: the next wave of AI scale will be powered by open collaboration. He argued that shared standards, interoperable designs, and community-driven innovation are becoming essential to building, deploying, and operating AI at data center and edge scale.

Why open collaboration matters for AI

– Interoperability at scale: AI training and inference depend on seamless integration across compute, storage, networking, and software. Open, standards-based architectures reduce integration friction and speed time to value.

– Faster innovation cycles: Community-driven specifications and shared reference designs help teams test, validate, and deploy new technologies more quickly and with less risk.

– Choice without lock-in: Multi-vendor ecosystems lower total cost of ownership and keep supply chains resilient while allowing organizations to mix the best components for their workloads.

– Efficiency and sustainability: Shared best practices accelerate adoption of high-density designs, advanced cooling, and energy-aware operations, all of which are critical as AI's power footprint grows.

Key priorities for scaling AI infrastructure

– Modular, rack-scale design: Standardized building blocks make it easier to expand capacity, rebalance resources, and support rapidly evolving AI models.

– Open networking fabrics: Standards-based networking simplifies deployment of high-performance AI clusters and makes it easier to scale out without proprietary constraints.

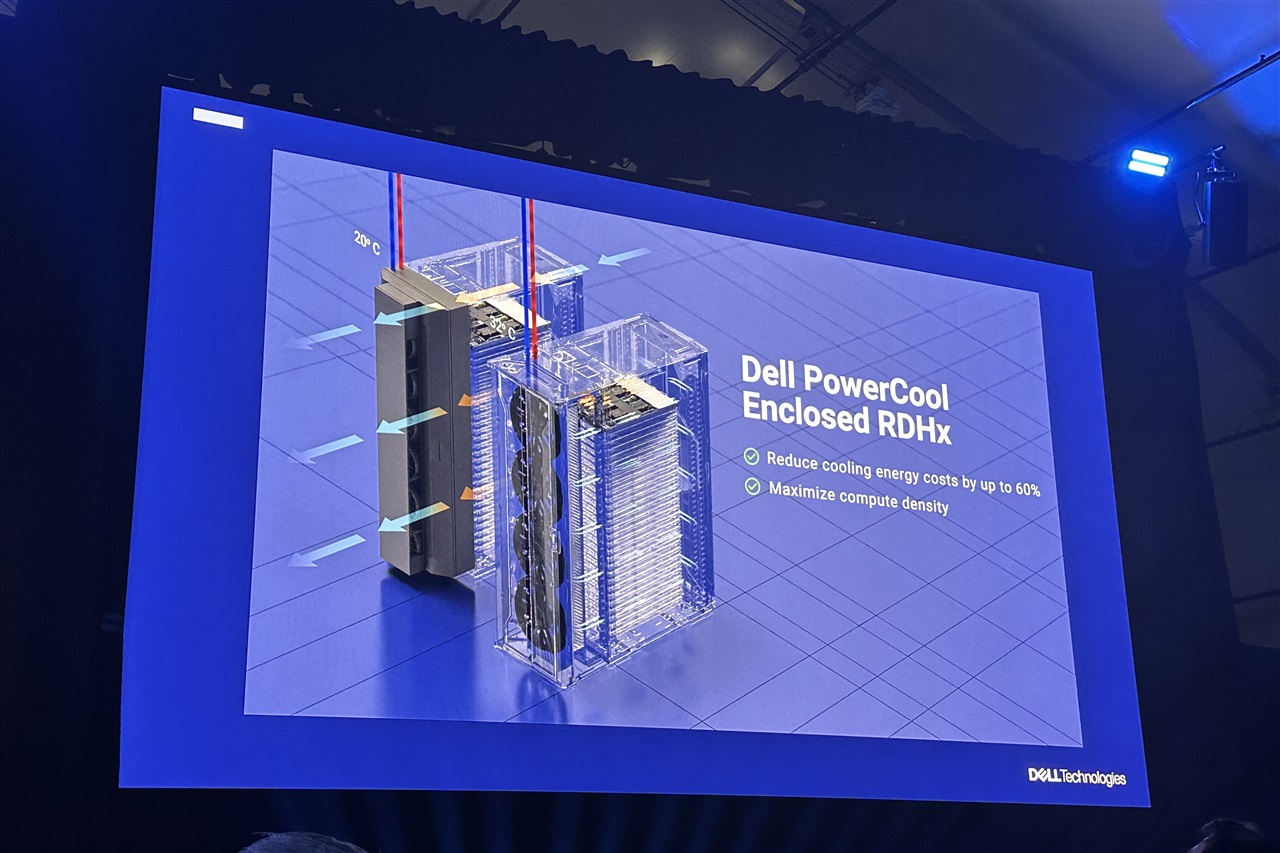

– Power and cooling at high density: As GPUs and accelerators push thermal limits, designs that embrace liquid cooling and efficient power delivery improve reliability and sustainability.

– Data pipelines and governance: AI outcomes depend on moving, curating, and securing data across core, cloud, and edge with consistent policies and tools.

– Automation and observability: Open management frameworks and APIs enable orchestration, telemetry, and lifecycle operations across multi-vendor environments.

– Edge-to-core consistency: Common architectures from the edge to the data center ensure predictable performance and simpler management for both real-time and batch AI workloads.

How the OCP community accelerates AI

– Shared specifications and best practices guide everything from server and rack design to power distribution and cooling approaches.

– Open reference patterns reduce integration complexity, helping teams stand up training and inference clusters with confidence.

– Cross-industry collaboration aligns silicon, systems, and software roadmaps, increasing performance while protecting long-term investments.

What this means for enterprises building AI

– Prioritize open standards when selecting compute, networking, storage, and management tools.

– Design for scalability with modular, rack-scale architectures that can evolve as models and accelerators change.

– Plan early for high-density power and cooling, including evaluating liquid cooling where appropriate.

– Build a robust data foundation with secure, governed pipelines across hybrid and multi-cloud environments.

– Automate operations with open APIs and observability to manage cost, performance, and uptime at scale.

The takeaway

Tarazi's message is straightforward: AI is moving too fast, and growing too large, for closed, siloed approaches to keep up. Open collaboration, through shared designs, interoperable systems, and community-driven engineering, offers the most reliable path to scaling AI infrastructure efficiently and sustainably, with the flexibility organizations need as workloads evolve.