NVIDIA is putting even more weight behind its fast-growing partnership with CoreWeave, signaling a major push toward the next phase of AI infrastructure. CEO Jensen Huang confirmed that CoreWeave will be the first provider to roll out NVIDIA’s upcoming Vera CPUs as a standalone product, a move that could reshape how AI data centers are built and scaled.

NVIDIA is also backing that relationship with fresh capital. The company plans to invest an additional $2 billion into CoreWeave by purchasing Class A common stock priced at $87.20 per share. The goal is to help CoreWeave accelerate its long-term expansion plan, which includes building 5 gigawatts of AI factory capacity by 2030. For NVIDIA, the deal strengthens what it describes as a long-standing relationship while giving the company a high-profile launch partner for its next-generation server CPU strategy.
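
For a sense of scale, the investment figures above imply a rough share count. The sketch below is plain arithmetic on the two quoted numbers; the function name is illustrative, and it assumes the full $2 billion is spent at exactly $87.20 per share, ignoring fees or other deal terms.

```python
# Figures quoted in the article; the helper name is illustrative.
INVESTMENT_USD = 2_000_000_000
PRICE_PER_SHARE_USD = 87.20


def implied_share_count(investment_usd: float, price_per_share_usd: float) -> float:
    """Return how many shares a given investment buys at a fixed price."""
    return investment_usd / price_per_share_usd


shares = implied_share_count(INVESTMENT_USD, PRICE_PER_SHARE_USD)
print(f"Implied shares: about {shares / 1e6:.1f} million")  # about 22.9 million
```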

What makes this announcement particularly important is the “standalone” CPU angle. Huang highlighted that Vera CPUs won’t be locked behind an all-or-nothing platform bundle. Instead, customers using NVIDIA GPUs will also be able to run CPU workloads directly on NVIDIA CPUs within the same infrastructure ecosystem. In practical terms, that means companies may be able to standardize more of their AI stack around NVIDIA—without necessarily having to buy a full rack-scale solution every time they want top-tier CPU performance.

That approach hints at two big realities in today’s AI race.

First, CPUs are turning into a bigger bottleneck in the AI supply chain. As “agentic AI” (systems that can plan, reason, call tools, and execute multi-step tasks) grows, the CPU side of the workflow matters more. AI isn’t only about raw GPU horsepower; it also depends on feeding data efficiently, coordinating tasks, managing memory, and orchestrating complex pipelines. If GPUs are the engines, CPUs are increasingly the traffic control system, and weak traffic control slows everything down.

Second, standalone Vera CPU availability gives customers more flexibility and could lower the cost of entry. Not every buyer needs (or can justify) a full high-end rack solution, but many still want premium CPU capabilities to pair with their existing accelerators and infrastructure. A standalone option can be a more accessible path while still keeping them inside NVIDIA’s ecosystem.

So what is Vera, and why is it getting so much attention?

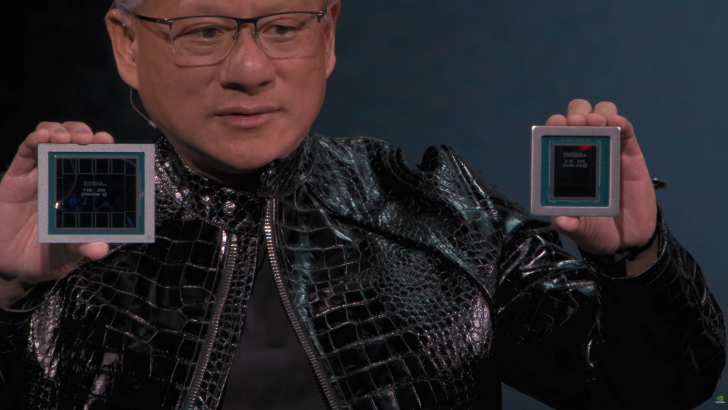

Vera is positioned as NVIDIA’s most capable CPU yet, with major gains over the previous-generation Grace platform. It is built on next-generation custom Arm cores codenamed Olympus and is designed specifically for modern AI data center needs. The specs NVIDIA highlights target high throughput, fast memory movement, and large-scale deployment:

– 88 CPU cores and 176 threads, supported by NVIDIA Spatial Multi-Threading

– 1.8 TB/s NVLink-C2C coherent memory interconnect

– Up to 1.5 TB of system memory (noted as 3x the prior Grace level)

– 1.2 TB/s memory bandwidth using SOCAMM LPDDR5X

– Rack-scale confidential compute features for security-focused deployments
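
To put those headline numbers in perspective, a few ratios can be derived from them. The sketch below is back-of-envelope arithmetic only: it uses the figures quoted in the list, assumes decimal units (1 TB = 1000 GB), and treats peak bandwidth as evenly shared across cores, which real workloads will not match. The function and key names are illustrative, not NVIDIA terminology.

```python
# Figures quoted in the spec list; everything derived from them is an estimate.
CORES = 88
THREADS = 176
MEMORY_BANDWIDTH_TBPS = 1.2  # TB/s, SOCAMM LPDDR5X
NVLINK_C2C_TBPS = 1.8        # TB/s, coherent CPU-GPU interconnect
MAX_MEMORY_TB = 1.5
GRACE_MULTIPLE = 3           # "3x the prior Grace level"


def derived_ratios() -> dict:
    """Compute simple ratios from the quoted peak figures."""
    return {
        "threads_per_core": THREADS / CORES,
        # Peak memory bandwidth split evenly across cores, in GB/s.
        "mem_bw_per_core_gbps": MEMORY_BANDWIDTH_TBPS * 1000 / CORES,
        # Memory capacity implied for the prior generation by the 3x claim.
        "implied_grace_memory_tb": MAX_MEMORY_TB / GRACE_MULTIPLE,
        # Time to read all of memory once at peak bandwidth.
        "seconds_to_stream_full_memory": MAX_MEMORY_TB / MEMORY_BANDWIDTH_TBPS,
        "c2c_to_memory_bw_ratio": NVLINK_C2C_TBPS / MEMORY_BANDWIDTH_TBPS,
    }


for name, value in derived_ratios().items():
    print(f"{name}: {value:.2f}")
```

On these assumptions, each core gets roughly 13.6 GB/s of peak memory bandwidth, and the full 1.5 TB could be streamed in a little over a second. These are upper bounds from the quoted peaks rather than measured results.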

These capabilities speak directly to the core challenges of AI infrastructure in 2026 and beyond: moving huge amounts of data quickly, keeping accelerators fed, and supporting secure multi-tenant environments where multiple customers may share the same AI factory.

Huang also suggested this is only the beginning, saying that NVIDIA hasn’t yet announced its CPU “design wins” and that there will be many. That comment points to broader adoption across the industry, potentially beyond one cloud partner. It also raises interest in NVIDIA’s wider Arm ambitions, including the possibility of Arm-based SoCs aimed at next-generation AI PC workloads, sometimes discussed in connection with upcoming consumer-focused chips.

The bigger takeaway is clear: NVIDIA isn’t treating CPUs as a side project anymore. With Vera, the company is moving aggressively to expand from being the dominant GPU supplier into offering a more complete AI computing platform—one that addresses the full stack of performance constraints as AI workloads become more autonomous, more complex, and more infrastructure-hungry. CoreWeave getting first access to standalone Vera CPUs is a strong signal of where AI data centers are headed next.