A little-known Chinese AI chip startup called Iluvatar CoreX has stepped into the spotlight with an ambitious timeline: matching the performance of NVIDIA’s latest AI platforms within the next couple of years. In a global AI hardware race increasingly defined by compute scale, supply chain access, and export restrictions, the company’s roadmap is an eye-catching signal that China’s domestic GPU and accelerator push is far from slowing down.

According to a report from Chinese media outlet MyDrivers, Iluvatar CoreX believes it can match NVIDIA’s Blackwell-class performance as soon as this year, then reach parity with the next-generation Vera Rubin platform by next year. Meeting those targets even partially would be a major development for China’s hyperscalers and AI labs, which have been working to reduce their reliance on imported accelerators.

What sets Iluvatar CoreX apart from several other Chinese chip efforts is its positioning. The company is described as China’s first HPC-focused player, concentrating on high-performance computing and large-scale AI workloads rather than splitting attention between consumer graphics and data center acceleration. That focus matters in the AI era, where performance is measured not just by peak numbers, but by how well an accelerator handles training and inference at scale, across clusters, under real power and cooling limits.

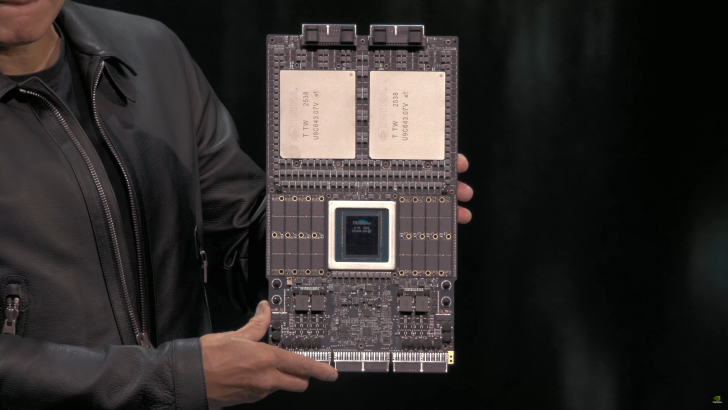

The report claims Iluvatar CoreX, known in Chinese as Tianshu Zhixin, is building a proprietary, native architecture. While technical specifics on the upcoming roadmap are still scarce, the company is said to already have products that aim to compete with NVIDIA’s older Ampere generation, including the TianGai-100 and TianGai-150 chips. However, publicly available details on specs, software compatibility, memory configurations, and large-scale deployment performance remain limited, and those are exactly the areas that typically determine whether a new accelerator can move from bold claims to real adoption.

Iluvatar CoreX also isn’t the first China-based company to talk about matching NVIDIA’s future AI platforms. Similar competitive messaging has surfaced elsewhere, including plans around dense rack-scale solutions designed to pack thousands of AI chips into large clusters. These systems are intended to go head-to-head with the kind of integrated “supercluster” configurations that NVIDIA promotes for next-generation AI training. As always, the practical questions come down to deployment realities: how efficiently the chips run at high utilization, how well they scale across networking fabrics, and whether power delivery and thermal design can support sustained performance in production environments.

That brings the discussion to the biggest challenge facing Chinese AI chip startups: the semiconductor ecosystem. Strong architectural ideas and aggressive roadmaps can generate attention, but reaching true performance parity with the world’s leading AI infrastructure suppliers typically requires more than silicon design. It demands mature manufacturing access, advanced packaging, high-bandwidth memory supply, stable yields, and a robust software stack that developers can actually use. Without that full pipeline, even promising designs can end up feeling more like marketing than market-shaping products.

Still, Iluvatar CoreX’s roadmap highlights how intense the AI accelerator competition has become. Whether the company can deliver on its Blackwell- and Rubin-level ambitions will depend on execution, production capability, and software readiness. The message, though, is clear: China’s push for domestically developed AI compute is accelerating, and more challengers are lining up to take on the industry’s biggest name.