Intel has officially pulled the curtain back on Clearwater Forest, its upcoming “Xeon 6+” server processors built on the company’s advanced Intel 18A process technology. The target is clear: help operators and network builders prepare for the next wave of connectivity—6G—and the surge of Edge AI workloads that need fast, efficient compute closer to users.

As the industry looks beyond 5G, Intel’s message is that winning the 6G era won’t require ripping out today’s networks and starting over. Operators want evolution, not disruption. The priority is to build on the compute foundations already proven in 5G, then scale intelligence across existing infrastructure in a way that’s responsible, secure, and economically realistic.

What operators say they need most for the road to 6G and Edge AI

Intel points to several themes it’s hearing repeatedly across the telecom ecosystem.

First, AI inference needs to feel inherent to the network, not bolted on. Instead of forcing new accelerators everywhere or demanding major architectural changes, operators want AI capabilities that fit naturally into the systems they already run.

Second, efficiency is no longer optional. Power savings, infrastructure consolidation, and a lower total cost of ownership are driving decisions—especially as operators try to free budget and capacity for new, revenue-generating services while still meeting unpredictable, fast-changing user demand.

Third, openness matters. Carriers want open platforms that are secure, production-ready, stable, and proven in real commercial deployments, with a low-risk upgrade path toward 6G.

One platform for network functions, security, services, and AI inference

Intel’s broader pitch is that operators should be able to run critical network workloads—core network functions, security, enterprise services, and AI inference—on a common, open compute foundation. Today, Intel highlights products such as Xeon 6 with E-cores, Xeon 6 SoC, and the Intel Ethernet 800 and 600 series as part of this approach. The idea is straightforward: modernize each generation without “rip-and-replace,” reduce complexity, and improve the economics of running large-scale networks.

That same approach is framed as beneficial for end users too, enabling more reliable connectivity, more personalized experiences, and better cost-efficiency as AI-driven services expand.

Why “CPU vs GPU” isn’t the right way to think about AI in telecom networks

Intel argues that it’s a mistake to treat AI infrastructure decisions as a simple CPU-versus-GPU battle. Networks evolve by placing the right compute in the right place, depending on the workload’s performance targets, power limits, cost sensitivity, and deployment realities.

In particular, Intel suggests that a GPU-first mindset, applied broadly to inference-heavy network workloads, can introduce higher cost and complexity, create new operational silos, and force architectural changes that don’t always match what the workload actually needs.

The real challenge for operators isn’t whether AI can run—it’s whether AI can be deployed at scale without re-architecting everything, and without blowing up long-term power and cost budgets.

Xeon 6 SoC approach: built-in AI acceleration inside the vRAN server

For the Radio Access Network (RAN), Intel is emphasizing that AI should be deployed without defaulting to separate, discrete accelerators everywhere. Xeon 6 SoC integrates AI acceleration into the virtualized RAN stack using Intel Advanced Matrix Extensions (AMX) and Intel vRAN Boost, aiming to run the majority of inference directly on the server.

Intel positions this as a practical path to lower total cost of ownership, better use of existing infrastructure, and AI deployment in live networks without a major overhaul. For operators balancing AI ambitions with affordability and efficiency, the promise is predictable performance, simpler operations, and easier scaling across large deployments.
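As a practical aside, an operator evaluating this approach on Linux might first confirm that a server's CPU advertises AMX at all. The sketch below is a minimal illustration, not Intel tooling: the helper name `detect_amx_flags` is hypothetical, and it assumes the host exposes the usual `amx_tile`, `amx_int8`, and `amx_bf16` feature flags in `/proc/cpuinfo`. It says nothing about vRAN Boost, which is not reported this way.

```python
# Hypothetical helper: report which AMX feature flags a Linux host advertises.
# Assumption: the kernel lists amx_tile / amx_int8 / amx_bf16 on the "flags" line.
AMX_FLAGS = ("amx_tile", "amx_int8", "amx_bf16")


def detect_amx_flags(cpuinfo_text: str) -> dict[str, bool]:
    """Return {flag: present} using the first 'flags' line in cpuinfo text."""
    flags: set[str] = set()
    for line in cpuinfo_text.splitlines():
        key, _, value = line.partition(":")
        if key.strip() == "flags":
            flags = set(value.split())
            break  # every logical CPU normally repeats the same flags
    return {name: name in flags for name in AMX_FLAGS}


if __name__ == "__main__":
    try:
        with open("/proc/cpuinfo", encoding="utf-8") as fh:
            text = fh.read()
    except OSError:
        text = ""  # not Linux, or /proc unavailable: report everything as absent
    for name, present in detect_amx_flags(text).items():
        print(f"{name}: {'yes' if present else 'no'}")
```

A host that reports all three flags can, in principle, run INT8 and BF16 matrix kernels on the CPU. Whether a given RAN inference model actually benefits still depends on the software stack and is not something this check can show.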

Examples of Xeon 6 SoC in operators’ networks

Intel points to operators already using the Xeon 6 SoC approach today.

Rakuten Mobile is working with Intel to use the built-in AI acceleration, focusing on training, optimizing, and deploying AI models designed for demanding RAN workloads that require ultra-low latency and real-time response.

Vodafone has committed to adopting Xeon 6 SoCs as part of large-scale Open RAN and vRAN modernization across Europe, building on earlier deployments where Intel-powered systems supported initial commercial rollouts.

Xeon 6 with E-cores: momentum in mobile core deployments

Intel also highlights traction for Xeon 6 with E-cores in mobile core environments.

SK Telecom is deploying Xeon 6 with E-cores alongside Intel Ethernet 800 Series products in its production mobile core environment.

NTT DOCOMO has selected Xeon 6 with E-cores and an Intel Ethernet E830 network adapter for next-generation mobile core deployments.

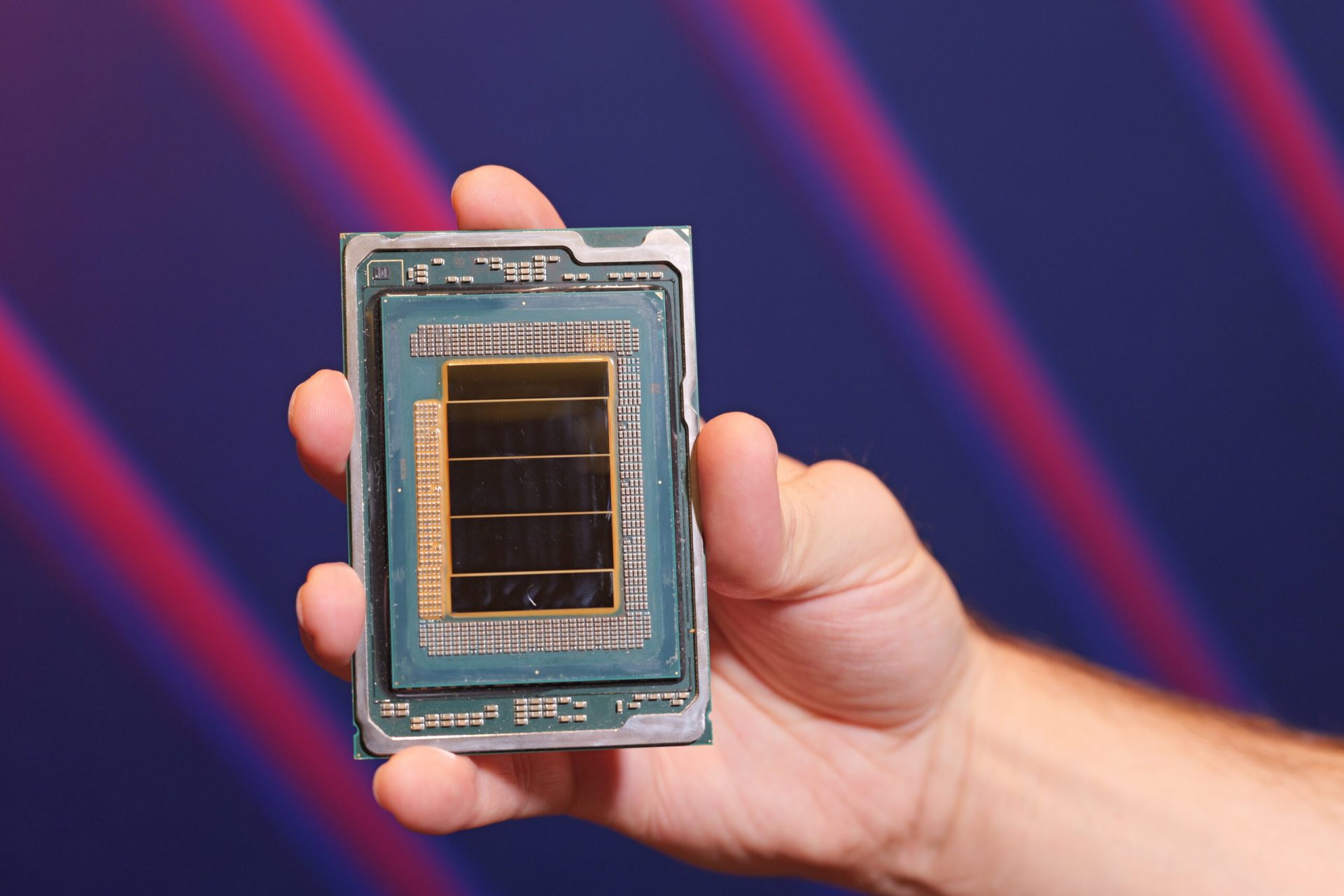

Introducing Intel Xeon 6+: Clearwater Forest and the efficiency push

With Clearwater Forest, Intel says it’s taking the next step in the Xeon 6 roadmap. Built on Intel 18A and designed with efficiency as a centerpiece, Xeon 6+ is intended to scale demanding workloads and enable more intelligent network services, while raising core density and lowering power consumption to improve total cost of ownership.

Intel positions the platform as relevant across 5G infrastructure and cloud-native applications, with the goal of reshaping data-center economics as networks move toward 6G.

Performance and power claims, plus expected timeframe

Intel also cites test results attributed to Ericsson: a single 288-core Xeon 6990E+ “Clearwater Forest” processor reportedly delivered a 38% reduction in runtime rack power, over 60% better performance per watt, and 30% higher overall performance compared with a dual-socket Xeon 6780E “Sierra Forest” system with the same total core count (two 144-core processors).
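The three figures are easier to interpret with a little arithmetic. The sketch below is one possible reading, not something the Ericsson testing is stated to confirm: it assumes performance per watt is simply performance divided by power, and the function name `perf_per_watt_gain` is illustrative. Under that assumption, a 38% power cut at unchanged performance already yields roughly the "over 60%" efficiency figure, which suggests the 30% performance gain may have been measured under different conditions than the power saving.

```python
def perf_per_watt_gain(perf_ratio: float, power_ratio: float) -> float:
    """Fractional perf/W improvement, given new/old performance and power ratios."""
    return perf_ratio / power_ratio - 1.0


POWER_RATIO = 1.0 - 0.38  # reported 38% lower runtime rack power
PERF_RATIO = 1.0 + 0.30   # reported 30% higher overall performance

# Reading 1: same work done at 38% less power (iso-performance).
iso_perf = perf_per_watt_gain(1.0, POWER_RATIO)

# Reading 2: both reported gains achieved in the same run.
combined = perf_per_watt_gain(PERF_RATIO, POWER_RATIO)

print(f"iso-performance perf/W gain: {iso_perf:.1%}")   # 61.3%
print(f"combined perf/W gain:        {combined:.1%}")   # 109.7%
```

Because the article does not describe the test conditions, both readings remain assumptions. The only firm takeaway is that the 38% and "over 60%" figures are mutually consistent at equal performance.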

The Clearwater Forest family is expected to arrive by 2027, with Intel indicating that additional details will be shared in the months ahead as the next generation of network and edge computing infrastructure takes shape.