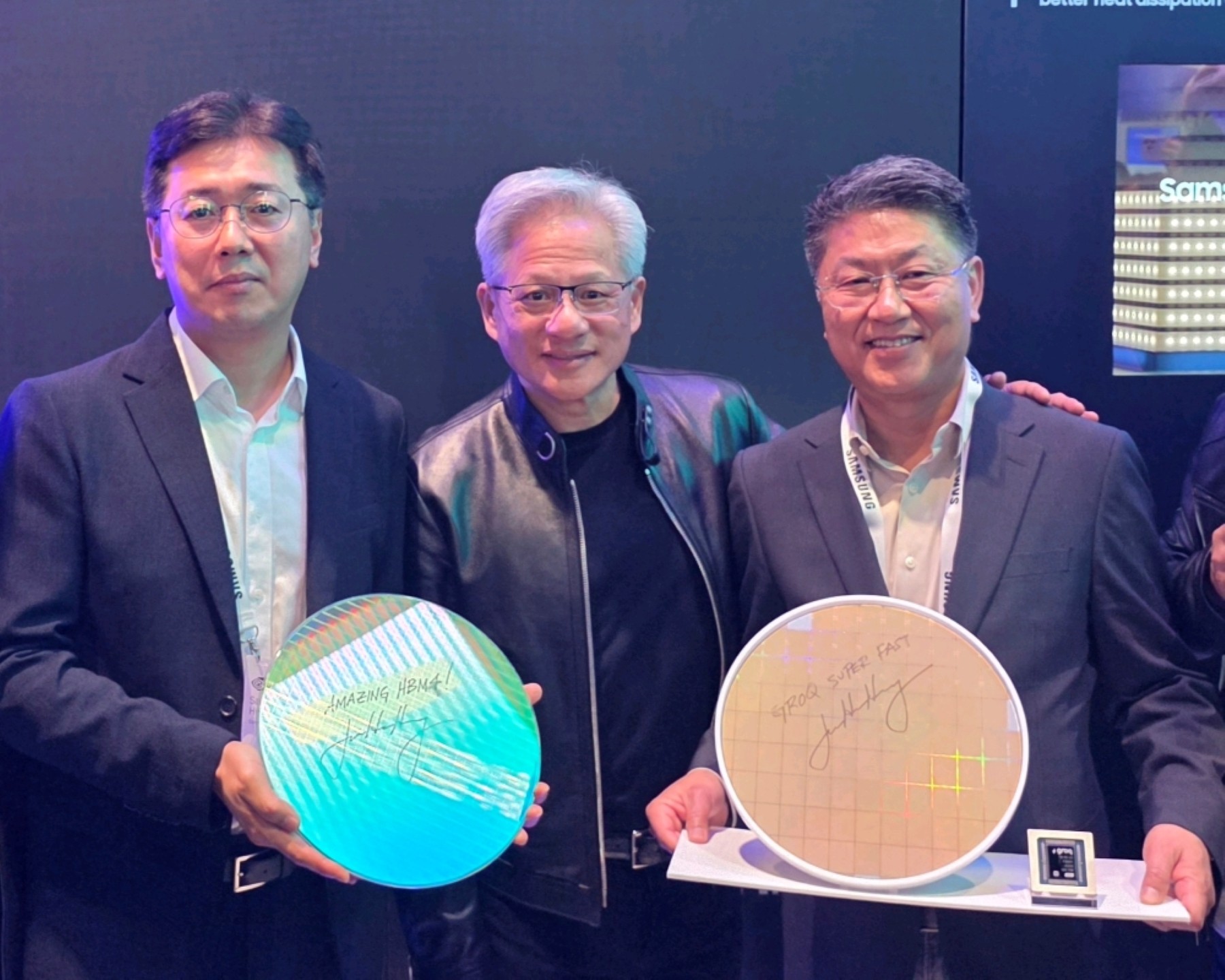

Samsung is turning up the heat in AI hardware with a new wave of memory and storage technologies aimed squarely at NVIDIA’s current and next-generation computing platforms. At GTC 2026, the company gave a closer look at its latest HBM and server components, including next-gen HBM4E high-bandwidth memory, PCIe Gen6 enterprise SSDs, and SOCAMM2 server RAM designed to help AI systems scale faster and run more efficiently.

HBM4E takes center stage with massive bandwidth and higher capacity

One of the biggest highlights is Samsung’s HBM4E, a next-generation high-bandwidth memory solution built to feed the extreme demands of modern AI GPUs. Samsung showed HBM4E running at 16 Gbps per pin, enabling up to 4.0 TB/s of bandwidth per stack. The company is also targeting 16-Hi stacking, which pushes per-stack capacity to 48 GB.
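The per-stack bandwidth follows from the pin rate and the width of the memory interface. The sketch below works through that arithmetic. It assumes HBM4E keeps the 2048-bit per-stack interface of HBM4, which the article does not state, and the function and constant names are illustrative only.

```python
# Assumption: 2048 data pins per stack, as in the HBM4 interface.
# The article does not give the HBM4E interface width.
ASSUMED_PINS_PER_STACK = 2048


def stack_bandwidth_gb_per_s(pin_rate_gbps: float,
                             pins: int = ASSUMED_PINS_PER_STACK) -> float:
    """Peak per-stack bandwidth in GB/s: pin rate (Gb/s) x pins / 8 bits per byte."""
    return pin_rate_gbps * pins / 8


hbm4e = stack_bandwidth_gb_per_s(16)
print(f"HBM4E at 16 Gbps per pin: {hbm4e:.0f} GB/s per stack")
```

Under that assumption the result is 4096 GB/s, which matches the quoted 4.0 TB/s when a terabyte is counted as 1024 GB.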

Those figures matter most in the context of NVIDIA’s future “Rubin Ultra” platform. Rubin Ultra is expected to scale up compute aggressively by using four GPU chiplets and 16 HBM memory sites. If that platform uses 16-Hi HBM4E stacks at 48 GB each, it could reach up to 768 GB of total HBM capacity and as much as 64 TB/s of total memory bandwidth at 16 Gbps. That’s a huge leap compared to baseline Rubin configurations often cited at 288 GB of HBM4 and up to 22 TB/s of bandwidth.
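Platform-level totals are the per-stack figures multiplied by the number of HBM sites. The sketch below runs that arithmetic with 16 sites, 48 GB per stack, and 4.0 TB/s per stack, then compares the result with the baseline Rubin figures cited above. The `HbmConfig` class and its field names are hypothetical helpers for illustration, not anything published by Samsung or NVIDIA.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class HbmConfig:
    """Hypothetical container for a platform's HBM layout."""
    sites: int                           # number of HBM stacks on the package
    capacity_gb_per_stack: int           # GB per stack
    bandwidth_tb_per_s_per_stack: float  # TB/s per stack

    def total_capacity_gb(self) -> int:
        return self.sites * self.capacity_gb_per_stack

    def total_bandwidth_tb_per_s(self) -> float:
        return self.sites * self.bandwidth_tb_per_s_per_stack


# Figures quoted in the article for a 16-site platform with 16-Hi HBM4E.
rubin_ultra = HbmConfig(sites=16, capacity_gb_per_stack=48,
                        bandwidth_tb_per_s_per_stack=4.0)

# Baseline Rubin totals as cited in the article.
BASELINE_CAPACITY_GB = 288
BASELINE_BANDWIDTH_TB_PER_S = 22

capacity = rubin_ultra.total_capacity_gb()
bandwidth = rubin_ultra.total_bandwidth_tb_per_s()

print(f"Total capacity:  {capacity} GB")
print(f"Total bandwidth: {bandwidth:.0f} TB/s")
print(f"Capacity vs baseline:  {capacity / BASELINE_CAPACITY_GB:.1f}x")
print(f"Bandwidth vs baseline: {bandwidth / BASELINE_BANDWIDTH_TB_PER_S:.1f}x")
```

With these inputs the totals come to 768 GB and 64 TB/s, roughly 2.7 times the baseline capacity and 2.9 times the baseline bandwidth.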

HBM4 enters mass production, with HBM4E debuting at GTC 2026

Samsung also emphasized its broader HBM roadmap. The company says its sixth-generation HBM4 is now in mass production and is designed for NVIDIA’s Vera Rubin platform. Samsung expects HBM4 to help accelerate future AI application development, quoting sustained per-pin speeds of 11.7 Gbps, well above the 8 Gbps industry baseline, with the potential to scale to 13 Gbps.
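To put those pin rates in relative terms, the short sketch below expresses each quoted speed as a percentage uplift over the 8 Gbps baseline. The helper name `uplift_percent` is illustrative only.

```python
BASELINE_GBPS = 8.0  # industry baseline pin rate quoted in the article


def uplift_percent(pin_rate_gbps: float, baseline_gbps: float = BASELINE_GBPS) -> float:
    """Percentage by which a pin rate exceeds the baseline."""
    return (pin_rate_gbps / baseline_gbps - 1) * 100


# Pin rates quoted in the article for Samsung's HBM4.
for label, rate in [("HBM4 sustained", 11.7), ("HBM4 potential", 13.0)]:
    print(f"{label}: {rate} Gbps, {uplift_percent(rate):.2f}% above baseline")
```

That works out to roughly 46% above the baseline at 11.7 Gbps and 62.5% at 13 Gbps.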

Alongside that, Samsung presented HBM4E publicly at GTC 2026 for the first time, positioning it as the next step up with 16 Gbps per pin and 4.0 TB/s bandwidth.

To help make even taller stacks practical, Samsung also highlighted its hybrid copper bonding (HCB) method. The company claims HCB can enable next-generation HBM to reach 16 layers or more while cutting thermal resistance by over 20% compared to thermal compression bonding (TCB), an important improvement as AI accelerators push power and thermal limits.

SOCAMM2 and PCIe Gen6 SSDs target high-efficiency AI infrastructure

Beyond HBM, Samsung’s booth spotlighted technologies aimed at server scalability and system integration for AI data centers. SOCAMM2—Samsung’s low-power DRAM-based server memory module—was presented as a high-bandwidth option designed for flexible integration in next-gen AI infrastructure. Samsung also noted SOCAMM2 is already in mass production, describing it as the first in the industry to hit that milestone.

On the storage side, Samsung showcased the PM1763, an enterprise SSD built on the PCIe 6.0 interface to support faster data transfers and high capacities for AI workloads. Samsung also demonstrated PM1763 performance on servers using the NVIDIA SCADA programming model.

In addition, Samsung’s PM1753 SSD appears as part of the NVIDIA BlueField-4 STX reference architecture for accelerated storage within the Vera Rubin platform. The message here is clear: storage efficiency and throughput are becoming just as critical as GPU compute for inference-heavy deployments, and Samsung is aiming to boost energy efficiency as well as overall system performance.

Memory and storage for local AI and personal devices

Samsung didn’t limit the showcase to data centers. The company also presented memory and NAND solutions intended to improve local AI performance on personal systems. This included PM9E3 and PM9E1 NAND solutions for NVIDIA DGX Spark, framed as building blocks for “personal AI supercomputers” and local inference-focused workflows.

For mobile and edge AI, Samsung displayed LPDDR5X and LPDDR6 DRAM designed for premium smartphones, tablets, and wearables. LPDDR5X is rated at up to 25 Gbps per pin while reducing power consumption by up to 15%, targeting smoother gaming, responsive on-device AI features, and high-performance multitasking without draining battery life.

LPDDR6 pushes further with scalable 30–35 Gbps per pin bandwidth and introduces power-management features such as adaptive voltage scaling and dynamic refresh control. The goal is to deliver the sustained performance needed for upcoming edge-AI applications while keeping efficiency under control.

Big picture: Samsung is building the backbone for the next AI leap

From ultra-high bandwidth HBM4E for future multi-chip GPU platforms, to SOCAMM2 memory modules and PCIe Gen6 SSDs for modern AI servers, Samsung’s GTC 2026 showcase signals an all-out push to supply the full stack of components AI computing needs. As NVIDIA’s Vera Rubin and Rubin Ultra eras move closer, the race is increasingly about total platform performance—compute, memory, storage, thermals, and efficiency—and Samsung is positioning its next-gen hardware to be ready for that demand.