NVIDIA’s next big push in AI infrastructure is shaping up to center on its Blackwell Ultra GB300 AI servers, and the numbers suggest hyperscalers are preparing for a major expansion. With demand for large-scale AI computing still accelerating, GB300 is widely expected to become NVIDIA’s most important server platform for cloud giants in 2026, driving a sharp increase in shipments.

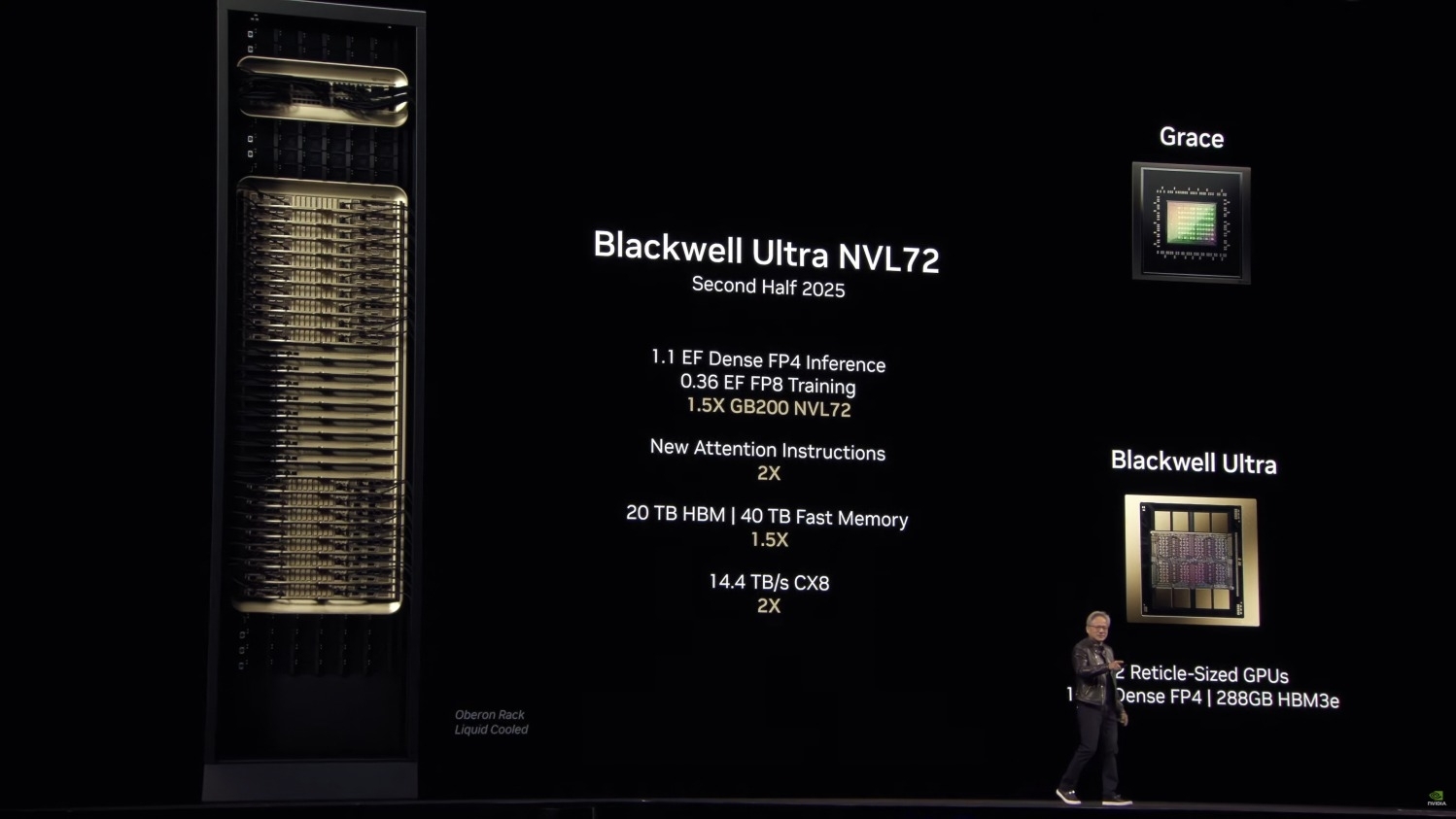

Production of GB300 systems has reportedly improved meaningfully, thanks to a mix of supply chain stabilization and practical design decisions. NVIDIA has been following an annual release rhythm, and Blackwell Ultra was introduced in March 2025 during the company’s GTC keynote. Although the product was announced early in the year, the volume ramp did not take off until the second half of 2025, which is why many hyperscalers focused heavily on deploying Blackwell GB200 server configurations throughout Q3 and Q4.

Looking ahead to 2026, industry expectations are shifting. A report cited by United Daily News claims NVIDIA’s GB300 shipments could climb 129% year over year, which would put 2026 volume at roughly 2.3 times the 2025 level, fueled by adoption from major AI and cloud players such as Microsoft, Amazon, and Meta. Some estimates go even further, suggesting Blackwell Ultra may reach as many as 60,000 racks shipped across 2026, an enormous figure that speaks to how quickly AI data centers are scaling.

One reason GB300 appears to be in a stronger position now is manufacturing readiness. Large manufacturing partners, including Foxconn, are said to have adapted to the requirements of producing these advanced AI server racks at scale. At the same time, NVIDIA reportedly chose to stick with the established “Bianca” board configuration for Blackwell Ultra rather than move to the more complex “Cordelia” design. That decision matters because it can simplify production and help suppliers achieve better yield rates, an essential factor when the goal is to ship tens of thousands of racks.

As for what changes with Blackwell Ultra, the GB300 platform is expected to retain the overall structure that defined the earlier Blackwell systems, with the key gains coming from the new B300 AI chips. The industry is still in the early stages of understanding exactly how much performance and efficiency GB300 will unlock at scale, but expectations are high. The previous-generation GB200 NVL72 platform has already been associated with running frontier-class AI work, and GB300 is positioned to push those limits further.

GB300 is also expected to serve as a bridge to what comes next: NVIDIA’s Rubin AI racks. Rubin is described as a major step forward across the full AI stack, including chips, networking, and rack-level configuration. Current expectations point to Rubin arriving in the market in the second half of 2026, with a formal spotlight planned for GTC in March.

Taken together, the story is straightforward: GB300 is not just another AI server update. It is likely to be the platform hyperscalers lean on most heavily in 2026 as they race to build out larger AI clusters, train more advanced models, and expand inference capacity. If production yields and ramp volumes continue improving, Blackwell Ultra could become the defining AI infrastructure deployment of the year.