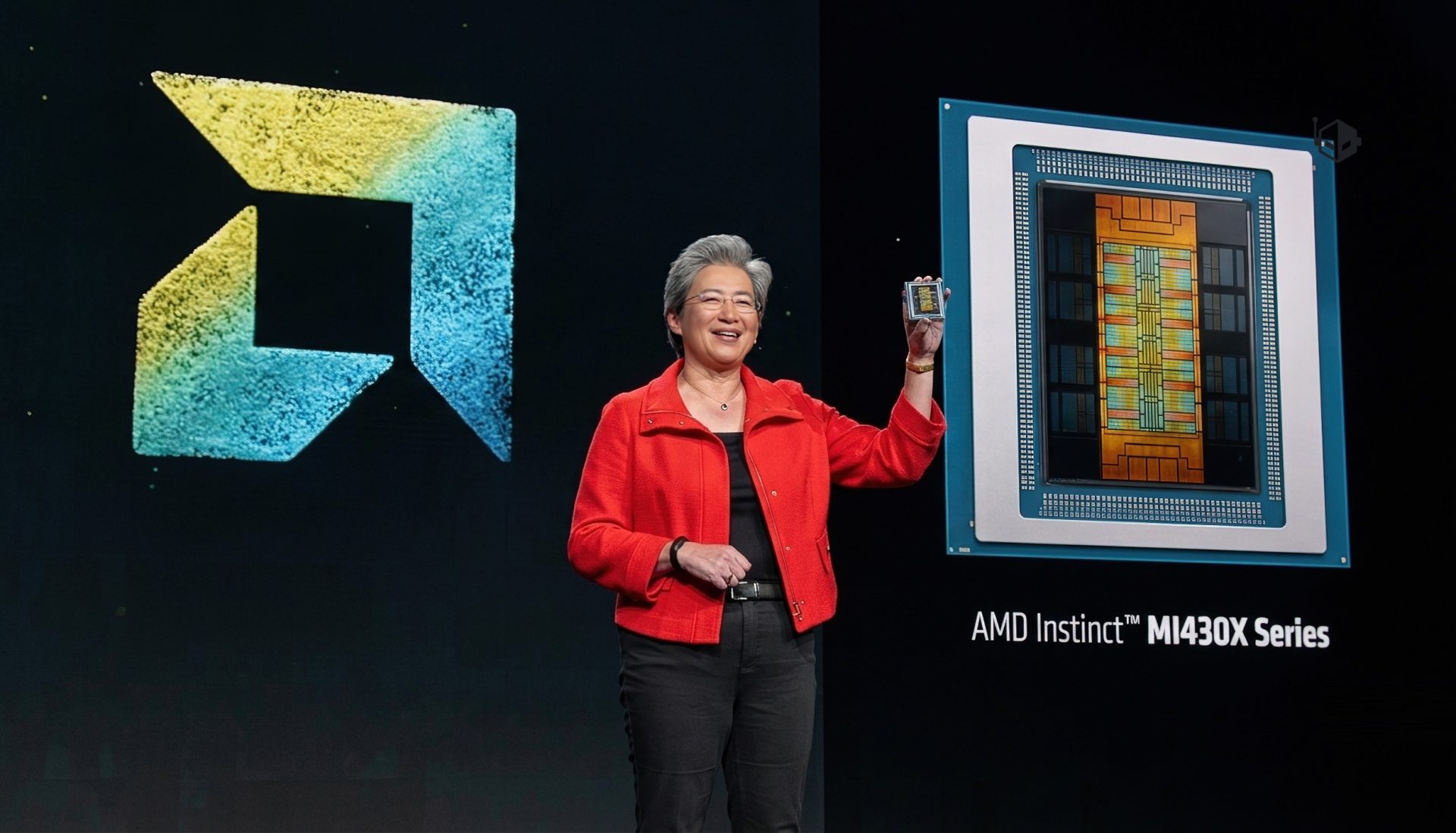

AMD is getting ready to make a major statement in high-performance computing with its next-generation Instinct MI430X GPU, a chip the company says will deliver the fastest native FP64 performance ever seen on an HPC-focused accelerator. While much of today’s AI boom is driven by lower-precision math formats like FP4, FP6, and FP8, classic supercomputing workloads still depend heavily on double-precision FP64 for accuracy in simulations, modeling, and scientific research. That double-precision segment is where AMD is aiming to widen its lead over competing accelerators.

According to the preview, the AMD Instinct MI430X is expected to reach up to 200 TFLOPs of raw FP64 performance. If that figure holds, it would place the MI430X at the top of the FP64 rankings for HPC GPUs. AMD attributes this leap to its CDNA-based architecture, advanced manufacturing and packaging techniques, and large pools of HBM4 memory designed to keep data moving fast during demanding compute jobs.

The MI430X is part of AMD’s broader MI400 series, which will include multiple accelerators targeting different needs. In the same family, AMD positions the MI450X as its primary AI-focused accelerator, while the MI430X is being framed as the standout choice for organizations that prioritize traditional HPC performance but still want strong AI capabilities in the same platform.

In performance terms, AMD is also drawing a sharp comparison with NVIDIA’s upcoming Rubin generation for FP64-heavy computing. The preview claims MI430X can offer up to 6x the FP64 compute capability versus Rubin in classic HPC-style workloads. The key point here is native FP64 throughput: AMD is quoting 200 TFLOPs FP64 for MI430X, while Rubin is described as delivering 33 TFLOPs of FP64 vector compute, with higher FP64 figures possible through tensor-core-based approaches. For HPC buyers who care about straightforward, native double-precision performance, AMD is clearly trying to make the argument that MI430X is the more direct FP64 powerhouse.
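As a quick sanity check, the "up to 6x" claim can be reproduced from the two throughput numbers quoted above. The short Python sketch below simply divides one by the other; the variable names are illustrative, and the result assumes both vendors' peak figures are directly comparable, which the preview does not establish.

```python
# Peak FP64 figures as quoted in the preview, in TFLOPs.
mi430x_fp64_tflops = 200.0        # AMD's "up to" figure for MI430X
rubin_fp64_vector_tflops = 33.0   # FP64 vector figure attributed to Rubin

# Ratio of the two quoted peaks; this is not a measured benchmark result.
ratio = mi430x_fp64_tflops / rubin_fp64_vector_tflops
print(f"MI430X vs. Rubin (FP64 vector): {ratio:.2f}x")
```

The ratio works out to roughly 6.06, which matches the rounded 6x in the preview. It covers only Rubin's vector FP64 figure and leaves out the higher tensor-core-based numbers mentioned above.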

AMD also emphasizes that MI430X won’t be limited to scientific computing. The company says the accelerator will pair its double-precision strength with competitive low-precision AI performance, positioning it as a unified solution for modern supercomputing environments that increasingly blend simulation, analytics, and AI.

Two major supercomputing deployments were highlighted as evidence that FP64-driven HPC remains a priority. In the United States, AMD Instinct MI430X GPUs are planned for the Discovery system at Oak Ridge National Laboratory, with deployment targeted for 2028. The system is expected to combine large numbers of MI430X accelerators with AMD EPYC CPUs and is described as a flagship platform intended to support breakthroughs across areas like energy research, biology, national security, advanced materials, and manufacturing innovation.

In Europe, AMD says MI430X accelerators will also be deployed with next-generation EPYC processors in the Alice Recoque system, a project aiming to become a leading exascale-class supercomputer in the region.

Taken together, the message is clear: even as AI continues to chase ever-higher FLOPs through low-precision compute, AMD is betting that double-precision FP64 performance will remain essential for the world’s most important scientific and engineering workloads. The Instinct MI430X is being built to lead that space while still offering competitive AI acceleration.